Your survey isn’t too long. It’s too irrelevant.

That’s the real reason people abandon it halfway through. They hit a question that has nothing to do with their situation, and either guess an answer to move forward or close the tab entirely.

Both outcomes hurt you: one corrupts your data, the other shrinks your sample.

Skip logic is the fix. And it’s simpler to set up than most people think.

In this guide, you will learn:

- What skip logic in surveys actually means and how it works

- How it differs from branching logic, and when you need each one

- A 6-step process to design and test a branching survey from scratch

- The most common mistakes practitioners make and how to avoid them

Let’s get started.

What Is Skip Logic?

Skip logic (also called branching logic, branch logic, conditional logic, or survey routing) is a survey design feature that automatically routes respondents to the next relevant question based on their previous answer. If an answer makes certain questions irrelevant, those questions are skipped entirely, so respondents only see questions that apply to their situation. The result is a shorter, more focused survey that feels like a conversation instead of a questionnaire.

Every respondent is different. They have different roles, different product experiences, and different purchase histories. A flat survey that treats all of them the same collects noise, not insight. Skip logic solves this by building a personalized path through the same survey for each respondent, without you needing to create multiple separate surveys.
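To make the routing idea concrete, here is a minimal Python sketch. The `SURVEY` structure, the question IDs, and the `"*"` fallback key are assumptions made for illustration; they do not reflect any particular survey tool's format.

```python
# Hypothetical survey definition: each question maps an answer to the next question ID.
# "END" marks the end of the survey; "*" is a fallback used when no answer-specific rule exists.
SURVEY = {
    "q1": {"text": "Are you currently a customer?",
           "next": {"Yes": "q2", "No": "q3"}},
    "q2": {"text": "How long have you been with us?",
           "next": {"*": "END"}},
    "q3": {"text": "What stopped you from buying?",
           "next": {"*": "END"}},
}


def next_question(current_id, answer):
    """Return the ID of the question that follows `current_id` for this answer."""
    rules = SURVEY[current_id]["next"]
    return rules.get(answer, rules.get("*", "END"))


def walk(answers, start="q1"):
    """Return the list of question IDs a respondent sees, given their answers."""
    path, current = [], start
    while current != "END":
        path.append(current)
        current = next_question(current, answers.get(current))
    return path


print(walk({"q1": "Yes"}))  # the customer path never includes q3
print(walk({"q1": "No"}))   # the non-customer path never includes q2
```

Both respondents take the same survey, but each one only sees the questions on their own path.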

If you want to try it before reading further, tools like Qualaroo let you build branching surveys for free, directly inside your website or product, no code required.

How to Design a Skip Logic Survey in 6 Steps

Before diving into examples and use cases, here is the complete build process. Following these steps in order is what separates surveys that work from branching structures that silently break after launch.

| Step | What You Do | Why It Matters |
|---|---|---|
| 1 | Define your research objective | Stops you from adding questions that have no purpose |
| 2 | Choose your survey questions | Ensures every question earns its place before logic is applied |
| 3 | Map out all branching routes | Reveals dead ends and conflicting paths before you build anything |
| 4 | Keep the survey linear | Prevents infinite loops that trap respondents and corrupt data |
| 5 | Design the survey in a no-code tool | Translates your map into a working branching structure |
| 6 | Test every path before publishing | Catches broken routes your respondents would otherwise hit first |

Step 1: Define Your Research Objective

Start with the outcome, not the questions. What specific decision will this survey inform? What behavior are you trying to explain? Your survey should exist to reduce uncertainty around a clearly defined problem, not to collect general feedback.

Example: SaaS onboarding drop-off

If your data shows that users abandon the onboarding flow at a specific step, your objective is not to “understand user experience.” It is to identify why users fail to complete onboarding.

Your survey should reflect that focus:

- “Did you complete the signup process?” (Yes / No)
- If No → “What prevented you from completing the signup?”
  - Too many steps
  - Instructions were unclear
  - Asked for too much information
  - Technical issue
  - Just exploring

Questions about pricing, features, or brand perception do not belong here. They do not serve the objective.

Example: E-commerce checkout abandonment

If users are dropping off at checkout, the objective is to understand what prevents them from completing the purchase.

Relevant questions:

- “Did you complete your purchase today?” (Yes / No)
- If No → “What stopped you from completing your purchase?”
  - Shipping costs were too high
  - Payment issues
  - The delivery time was too long
  - The checkout process was too complicated
  - Not ready to buy

Every question should map directly to a decision, such as simplifying checkout, adjusting pricing visibility, or fixing technical issues.

Remove any question that does not serve that decision. In a branching survey, unnecessary questions do not just add friction; they multiply across paths.

Step 2: Choose Your Survey Questions

Once the objective is clear, select questions that guide users into relevant paths without adding noise.

Questions that trigger branching should be closed-ended: multiple choice, yes/no, or rating scales. These create clear decision points for routing. Open-text questions work best as follow-ups within a branch, where the context is already defined.

Example 1: SaaS onboarding experience

Trigger question:

- “How would you rate your onboarding experience?”
  - Very easy / Easy / Neutral / Difficult / Very difficult

Branching logic:

If Easy / Very easy:

- “What worked well during onboarding?”

If Difficult / Very difficult:

- “What caused difficulty?”
  - Too many steps
  - Instructions unclear
  - Technical errors
- “At which step did this occur?”

Example 2: E-commerce purchase flow

Trigger question:

- “What was your goal for visiting today?”
  - Browse / Compare / Purchase / Support

If Purchase:

- “Did you complete your purchase?” (Yes / No)

If No:

- “What stopped you?”

- “What would have helped you complete it?”

Each question should align with the response that triggers it. Irrelevant follow-ups break the flow and reduce completion rates.
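Closed-ended triggers work well because the full set of possible answers is known in advance, so every answer can be mapped to a branch. The sketch below uses the onboarding example above; the branch names and the choice to give "Neutral" no follow-up are assumptions, since the example does not say what Neutral respondents see.

```python
# Follow-up questions per branch, using the wording from the onboarding example.
FOLLOW_UPS = {
    "positive": ["What worked well during onboarding?"],
    "negative": ["What caused difficulty?", "At which step did this occur?"],
    "neutral": [],  # assumption: Neutral respondents get no follow-up
}

# Every option on the rating scale is assigned to exactly one branch.
BRANCH_BY_RATING = {
    "Very easy": "positive",
    "Easy": "positive",
    "Neutral": "neutral",
    "Difficult": "negative",
    "Very difficult": "negative",
}


def follow_ups_for(rating):
    """Return the follow-up questions triggered by a rating-scale answer."""
    if rating not in BRANCH_BY_RATING:
        raise ValueError(f"Unknown rating: {rating!r}")
    return FOLLOW_UPS[BRANCH_BY_RATING[rating]]


print(follow_ups_for("Difficult"))
```

An open-text trigger could not be mapped this way, which is why open text is better placed inside a branch than at its entrance.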

Step 3: Map Out All the Branching Routes

Before you build anything in a survey tool, draw every path on paper or in a flowchart.

Map each question, each possible answer, and where each answer leads. Check that every path has a logical endpoint and that no path leaves the respondent stranded.
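If the map is written down as data, the same checks can be run automatically. The following is a rough sketch, assuming a hypothetical `routes` dictionary of the form `{question_id: {answer: next_id}}` with `"END"` as the endpoint; it flags answers that lead nowhere and questions no respondent can ever reach.

```python
END = "END"


def validate_routes(routes, start):
    """Return a list of problems found in a {question_id: {answer: next_id}} map."""
    problems = []

    # Dead ends: a question with no routes, or an answer pointing at a missing question.
    for qid, answers in routes.items():
        if not answers:
            problems.append(f"{qid}: no outgoing route")
        for answer, target in answers.items():
            if target != END and target not in routes:
                problems.append(f"{qid} / {answer!r}: unknown target {target}")

    # Unreachable questions: walk the map from the start and see what is never visited.
    seen, stack = set(), [start]
    while stack:
        qid = stack.pop()
        if qid == END or qid in seen or qid not in routes:
            continue
        seen.add(qid)
        stack.extend(routes[qid].values())
    for qid in routes:
        if qid not in seen:
            problems.append(f"{qid}: unreachable from {start}")

    return problems


routes = {
    "q1": {"Yes": "q2", "No": "q9"},  # q9 does not exist
    "q2": {"*": END},
    "q3": {"*": END},                 # nothing routes to q3
}
for problem in validate_routes(routes, "q1"):
    print(problem)
```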

Pay particular attention to multi-select questions.

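If a respondent selects multiple answers, each with different branching rules, most survey tools will follow the logic of the first matching answer and ignore the rules attached to the others. Where possible, use single-select questions as branching triggers.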
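The first-match behavior can be modeled in a few lines. This is only an illustration of the behavior described here, with hypothetical question IDs; an actual tool may evaluate multi-select rules differently, so check its documentation.

```python
def route_multi_select(selected, rules, default="END"):
    """Return the target of the first rule whose answer was selected.

    `rules` is an ordered list of (answer, target) pairs; later matches are ignored.
    """
    for answer, target in rules:
        if answer in selected:
            return target
    return default


rules = [
    ("Too many steps", "q_steps"),
    ("Technical issue", "q_technical"),
]

# The respondent picked both answers, but only the first rule in the list fires.
print(route_multi_select({"Technical issue", "Too many steps"}, rules))
```

The respondent who reported a technical issue never reaches the technical follow-up, which is the data loss to watch for when mapping multi-select questions.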

Step 4: Make Sure the Survey Is Linear

Skip logic requires a linear survey structure. Respondents always move forward, never backward.

If you allow backward jumps, you risk creating logic loops where answers conflict with an earlier path, producing infinite cycles or broken flows.

Turn off backward navigation when using skip logic. Keep question numbering off so respondents don’t notice gaps when questions are skipped.

Remove the progress bar for the same reason.
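The forward-only rule can also be checked mechanically. A minimal sketch, assuming a hypothetical `order` list of question IDs in display order and a `routes` map of `{question_id: {answer: next_id}}`: if every jump lands on a later question, a loop is impossible.

```python
def backward_jumps(order, routes):
    """Return (question, answer, target) for every jump that does not move forward."""
    position = {qid: index for index, qid in enumerate(order)}
    bad = []
    for qid, answers in routes.items():
        for answer, target in answers.items():
            if target == "END":
                continue
            # A target missing from `order` is reported too, since it cannot be placed.
            if target not in position or position[target] <= position[qid]:
                bad.append((qid, answer, target))
    return bad


order = ["q1", "q2", "q3"]
routes = {
    "q1": {"Yes": "q3", "No": "q2"},
    "q2": {"*": "q3"},
    "q3": {"Retry": "q1", "Done": "END"},  # "Retry" jumps backward and creates a loop
}
print(backward_jumps(order, routes))
```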

Step 5: Design the Survey in a No-Code Tool

With the path mapped out, add all your question screens first, then go back and apply the branching rules to each answer option.

Work top to bottom in your logic tree. Set terminal branches, which are disqualifications and thank-you screens, first. Then connect the middle paths.

If you want a quick visual walkthrough first, here’s a short video on how to create branching surveys:

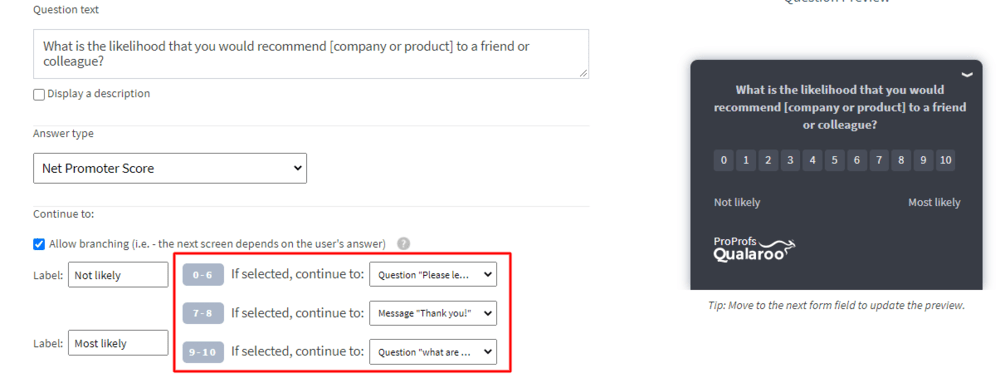

Here is how to do it in Qualaroo:


1. Add all your questions to the survey first before touching any logic.

2. Use the “If selected, continue to” dropdown on each answer option to route respondents to the correct follow-up question.

3. Click Preview to walk through every path before going live and catch dead ends before your respondents do.

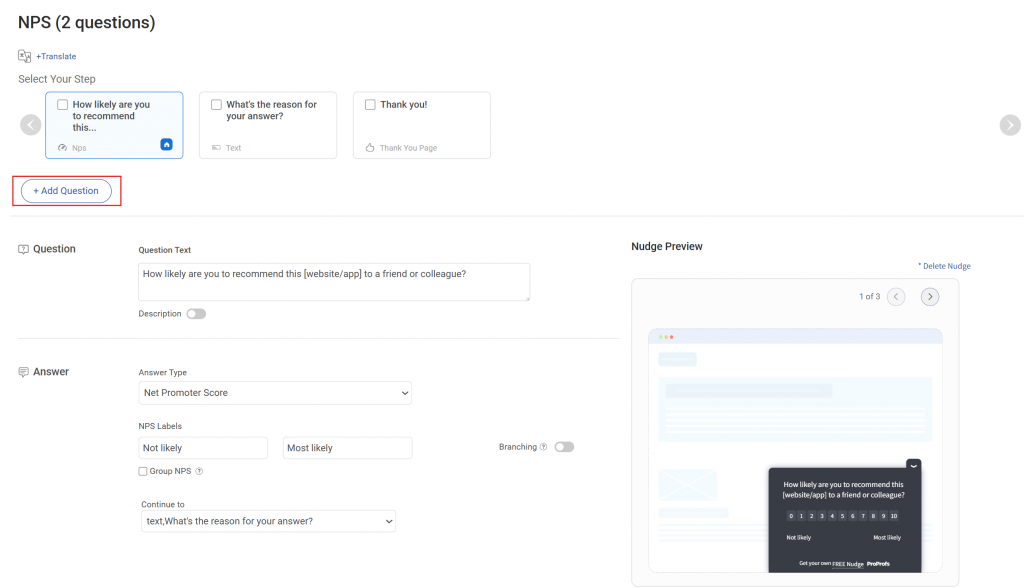

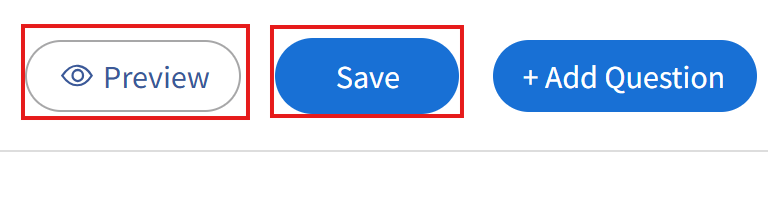

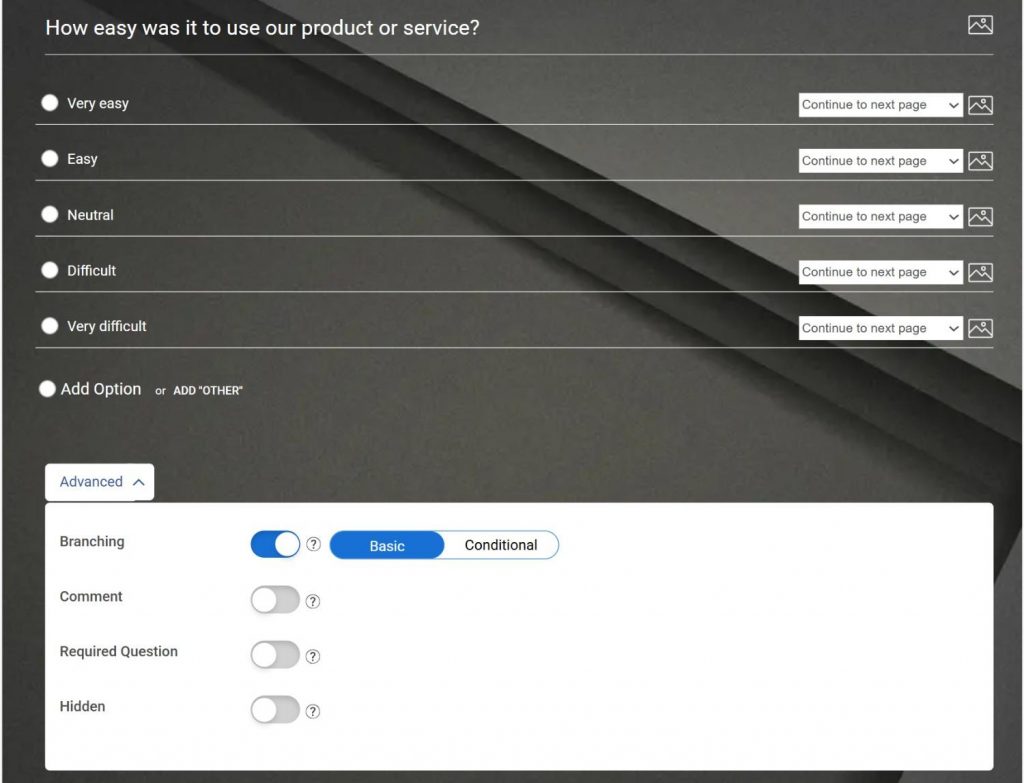

Here is how to do it in ProProfs Survey Maker:

1. Enable branching in the Advanced settings for your rating or multiple-choice question.

2. Use the per-answer dropdowns to jump to the next page, a specific question, or the submit screen, depending on the answer selected.

3. For more complex rules, switch to Conditional mode and build your IF-THEN logic there.

4. Add page breaks to group related questions and keep the flow clean.

Step 6: Test the Survey

Testing is not optional. Preview the survey yourself and walk through every possible path. If you have five answer options on the first question, preview the survey five times, following each path to its end.

Better yet, send the survey to a few team members with different profiles and have them take it as respondents would.

Ask them to flag any moment where the flow felt off, or a question seemed irrelevant to their previous answers.

Fix every broken path before publishing.
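Listing the paths by hand gets error-prone once branches nest. As a sketch, the function below enumerates every route through a hypothetical `routes` map of `{question_id: {answer: next_id}}`, producing a checklist to preview one by one. It assumes the map is forward-only and that every target exists.

```python
def all_paths(routes, start, end="END"):
    """Yield every sequence of (question, answer) pairs leading from start to end."""
    def visit(qid, trail):
        if qid == end:
            yield list(trail)  # copy, because `trail` is reused while backtracking
            return
        for answer, target in routes[qid].items():
            trail.append((qid, answer))
            yield from visit(target, trail)
            trail.pop()

    yield from visit(start, [])


routes = {
    "q1": {"Yes": "END", "No": "q2"},
    "q2": {"Too many steps": "END", "Technical issue": "END"},
}
for number, path in enumerate(all_paths(routes, "q1"), start=1):
    print(number, path)
```

Each printed line is one preview run to complete before publishing.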

Once live, monitor completion and drop-off rates by question. You can also A/B test the logic structure itself, for example by comparing a version with one layer of follow-ups against a version with two.
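In a branching survey, drop-off for a question should be measured against the respondents who were actually shown it, not against everyone who started. A minimal sketch, assuming a hypothetical export where each session records which question IDs were shown and which were answered:

```python
from collections import Counter


def drop_off_by_question(sessions):
    """Return the share of respondents who saw each question but did not answer it."""
    shown, answered = Counter(), Counter()
    for session in sessions:
        shown.update(session["shown"])
        answered.update(session["answered"])
    return {qid: 1 - answered[qid] / shown[qid] for qid in shown}


sessions = [
    {"shown": ["q1", "q2"], "answered": ["q1", "q2"]},
    {"shown": ["q1", "q2"], "answered": ["q1"]},        # left at q2
    {"shown": ["q1", "q3"], "answered": ["q1", "q3"]},
    {"shown": ["q1"], "answered": []},                  # left at q1
]
print(drop_off_by_question(sessions))
```

A question with a high rate here is a candidate for rewording or for tighter routing into it.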

How Does Skip Logic Work? Real Examples You’ll Recognize

Let’s make this real with examples you’ll recognize right away.

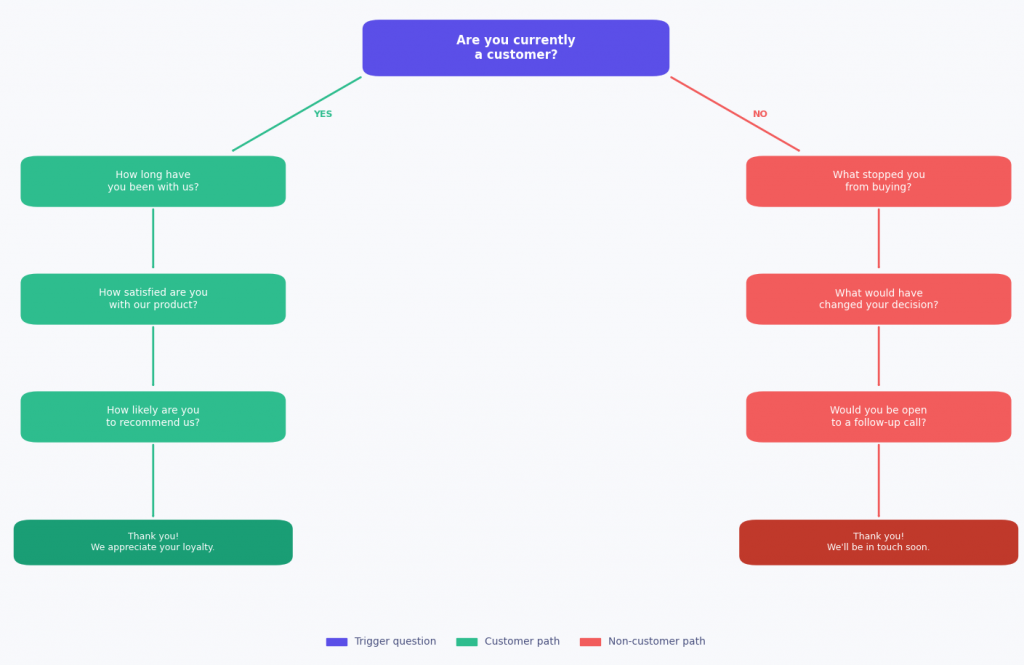

- The Customer Gate (Qualaroo)

This is probably the single most common branch in any survey. You start with a simple qualifier:

“Are you currently a customer?”

If yes, the survey flows into “How long have you been with us?” and then into product satisfaction and loyalty questions tailored to someone who already knows your product.

If no, completely different path: “What stopped you from buying?” The survey then digs into awareness barriers and what would have changed the decision.

The power here is timing. Qualaroo lets you trigger this the moment someone cancels, so the survey fires exactly when the experience is fresh, and the emotion is real.

- The Satisfaction Drill-Down (ProProfs Survey Maker)

You will recognize this one from post-purchase emails and NPS surveys everywhere. The subject line reads: “How did we do, [First Name]?” The first question is simply:

“On a scale of 0 to 10, how satisfied are you with your experience?”

From there, the survey splits three ways:

- Score 9 or 10 (promoter): “What do you love most about your order?” then “Would you recommend us to a friend?” The survey closes with a warm thank-you. Short and respectful of their time.

- Score 7 or 8 (passive): A single follow-up: “What would move your experience from good to great?” One text field, then done.

- Score 0 to 6 (detractor): The survey goes deeper. “What went wrong with your order?” then “How urgently do you need this resolved?” with options ranging from “it can wait” to “I need help today.” The moment they submit, an automatic alert fires to the support team, so someone follows up the same day.

It gathers exactly the right information at each score level without overwhelming anyone.
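The three-way split above reduces to a pair of thresholds. The sketch below uses the score bands and question wording from this example; the function names and the `alert_support` flag are hypothetical stand-ins for whatever your survey tool and alerting setup provide.

```python
# Follow-up questions per score band, using the wording from the example above.
FOLLOW_UPS = {
    "promoter": ["What do you love most about your order?",
                 "Would you recommend us to a friend?"],
    "passive": ["What would move your experience from good to great?"],
    "detractor": ["What went wrong with your order?",
                  "How urgently do you need this resolved?"],
}


def branch_for_score(score):
    """Map a 0-10 score to promoter (9-10), passive (7-8), or detractor (0-6)."""
    if not 0 <= score <= 10:
        raise ValueError("score must be between 0 and 10")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def plan_follow_up(score):
    """Return the branch, its follow-up questions, and whether support should be alerted."""
    branch = branch_for_score(score)
    return {
        "branch": branch,
        "questions": FOLLOW_UPS[branch],
        "alert_support": branch == "detractor",
    }


print(plan_follow_up(4))
```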

How Does Skip Logic Improve Data Quality?

When a respondent sees a question that doesn’t apply to them, they don’t skip it. They answer it anyway, usually with a random selection just to move forward.

That random answer enters your dataset as if it were real. Your averages get skewed. Your segment analysis becomes unreliable.

A 2025 report by the IBM Institute for Business Value found that 43% of chief operations officers rank data quality issues as their top data priority.

And the concern is justified: more than a quarter of organizations estimate annual losses exceeding $5 million due to poor data quality, while 7% report losses of $25 million or more.

In survey contexts, this maps directly to forced responses on irrelevant questions: the data looks complete but is systematically wrong, with no way to retroactively identify which answers were guesses.

How Skip Logic Eliminates Satisficing

Beyond contaminated answers, skip logic also removes the conditions that generate “satisficing,” which is when respondents stop thinking carefully and select the first plausible answer just to finish faster.

A 2024 National Institutes of Health (NIH) study on survey design found that response quality drops measurably when respondents perceive the questions as irrelevant to their experience.

When every question clearly applies to them, respondents engage more thoughtfully and provide more accurate, actionable answers. It also reduces survey fatigue.

| Data Problem | What Causes It | How Skip Logic Fixes It |
|---|---|---|
| Skewed averages | Non-applicable respondents answer randomly | Only relevant respondents reach that question |
| Unreliable segments | Everyone answers everything regardless of context | Each branch produces a clean, contextual dataset |
| Satisficing bias | Long flat surveys trigger low-effort responses | Shorter, relevant paths keep respondents engaged |
| Contaminated open text | Guessed answers dilute qualitative themes | Branched paths ensure open text comes from qualified respondents |

What Qualaroo Does With That Data

Qualaroo takes this further by running open-text responses through IBM Watson Sentiment Analysis automatically.

On top of the cleaner structured data your branching paths produce, you also get emotional tone and theme categorization on every free-text answer, without manual tagging or post-processing.


What Are Advanced Skip Logic Use Cases in SaaS and Product Feedback?

SaaS teams have some of the most sophisticated skip-logic needs because their user base spans multiple distinct segments that use the same product in different ways.

A flat survey sent to all users produces averages that represent no one accurately.

Four Routing Strategies for SaaS Surveys

| Routing Signal | Qualifier Question | Branch A | Branch B |
|---|---|---|---|
| User role | "Are you an admin or end user?" | Admin: access, reporting, team management | End user: usability, daily workflow friction |
| Feature usage | "Have you used [Feature X]?" | Yes: satisfaction and usability questions | No: "What stopped you from trying it?" |
| Subscription tier | "Are you on the free or paid plan?" | Free: onboarding and activation friction | Paid: depth of use and retention risk |
| Onboarding stage | "How long have you been using the product?" | Under 1 month: setup and initial value | Over 6 months: feature requests and advocacy |

Why Role-Based Routing Matters Most

Route by user role first. If your product is used by both administrators and end users, a single survey will be irrelevant for both groups.

An admin cares about access controls, reporting, and team management.

An end user cares about usability and the friction in their daily workflow.

Branching at the first question means each group gets a survey that feels like it was written specifically for them.

The GreatSchools Case: Logic-Based Routing at Scale

GreatSchools, a national nonprofit that helps parents research K-12 schools, used this kind of logic-based routing at scale with Qualaroo.

By placing conditional surveys across different pages of their website, they routed parents, students, and educators to entirely different question paths based on their role and page context.

The result: over 140 targeted insight studies deployed and more than 20,000 responses gathered in under a year, with faster product iteration driven by contextual feedback.

What Are the Most Common Skip Logic Mistakes to Avoid?

When branching is poorly designed, surveys become fragmented, inconsistent, or difficult to analyze.

Instead of guiding users smoothly, the experience breaks down into disconnected paths that produce incomplete or misleading data.

Before building your survey, it is worth understanding where skip logic typically fails and how to avoid those pitfalls.

| Mistake | What Goes Wrong | The Fix |
|---|---|---|
| Logic tree too complex | More branches = more broken paths and testing burden | Keep nesting to 3 levels or fewer |
| Untested paths | Edge cases silently route respondents to the wrong questions | Preview every answer combination before publishing |
| Skip logic on multi-select questions | The tool follows the first answer's rule, ignoring all others | Use single-select questions as branching triggers |
| Question numbers or progress bar visible | Respondents see jumps from Q3 to Q7 and feel manipulated | Turn off numbering and progress bar |
| Backward navigation enabled | Respondents change earlier answers, breaking branch consistency | Disable back-navigation when using conditional logic |
| Skipping the test step entirely | Broken paths corrupt data with no retroactive fix | Test before every launch, no exceptions |

Your Surveys Are Losing Respondents. Here Is How to Fix It Today

The data is clear: irrelevant questions kill surveys. Respondents who feel a survey was not designed for them either guess through it or leave. Neither gives you anything useful.

Skip logic is the mechanism that makes surveys feel personal, because each respondent only ever sees the questions that apply to them.

The completion rates go up. The data quality goes up. And you stop wasting your team’s analysis time on noise.

Here’s how to get started:

If you want to build your first skip logic survey today, Qualaroo’s question branching is available on the free plan.

You can set up a Nudge on your website or inside your product, add branching rules to each answer, and launch in under 30 minutes.

Frequently Asked Questions

What is the difference between skip logic and branching logic?

Skip logic moves respondents forward within one path by bypassing irrelevant questions. Branching logic creates entirely separate question tracks for different respondent types. Skip logic is simpler. Branching logic is necessary when different audiences need fundamentally different question sets. Most platforms use the terms interchangeably.

What is the difference between skip logic and display logic?

Skip logic moves respondents forward to a different question or section entirely. Display logic shows or hides individual questions on the same page without changing the respondent's position. Skip logic changes where you go. Display logic changes what you see where you are.

Can skip logic be used in email surveys?

Yes. When each question lives on a separate page, the logic fires on clicking Next and routes respondents to the appropriate follow-up. The experience feels seamless because they never see the questions they skipped.

When should you not use skip logic?

Skip it when your survey has fewer than five questions and every question applies to all respondents. Skip logic earns its place when meaningfully different segments need different question sets. Adding it to a short, universal survey creates complexity without benefit.

How long does it take to set up skip logic?

A simple survey with one or two branching points takes under 30 minutes in Qualaroo. Complex surveys with four or more branching levels can take a few hours, most of that spent mapping paths and testing every route before publishing.

Does skip logic work for employee engagement surveys?

Yes, and it is especially valuable there. Different departments, tenures, and seniority levels need different questions. Branching by role or tenure at the start lets you collect feedback specific enough to act on for each group, rather than averaging responses that represent no one.

How does skip logic affect data analysis?

Each branch produces its own response set. Filter your data by branch path when analyzing results. Running averages across the full dataset mix responses from incomparable paths. Most survey tools show blank or N/A for skipped questions, which is expected and correct.
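As a sketch of what filtering by branch path looks like in practice, the function below averages a numeric question per branch and leaves out respondents who never saw it. The `branch` and `answers` fields are assumptions about how an export might be shaped, not any tool's actual format.

```python
from statistics import mean


def mean_by_branch(responses, question):
    """Average a numeric answer per branch, ignoring respondents who skipped the question."""
    groups = {}
    for response in responses:
        value = response["answers"].get(question)
        if value is None:  # blank / N/A: the question was skipped for this respondent
            continue
        groups.setdefault(response["branch"], []).append(value)
    return {branch: mean(values) for branch, values in groups.items()}


responses = [
    {"branch": "admin", "answers": {"ease": 4}},
    {"branch": "admin", "answers": {"ease": 2}},
    {"branch": "end_user", "answers": {"ease": 5}},
    {"branch": "end_user", "answers": {"ease": None}},
]
print(mean_by_branch(responses, "ease"))
```

Treating the skipped answer as zero, or pooling both branches into one average, would produce a number that describes neither group.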

What is disqualification logic, and how does it differ?

Disqualification logic routes respondents who don't meet your criteria to the survey end immediately, marking them ineligible. Skip logic routes qualified respondents through different paths based on their answers. Disqualification is a specific application of skip logic used at the screening stage.
