Now that we’ve discussed the components that make up an effective survey plan (the who, how, and what), let’s move on to analyzing the feedback that plan produces. Although marketing surveys tend, to varying degrees, to focus more on qualitative responses than on quantitative data, there are some important metrics to keep an eye on.

Survey Response Rate

One of those numbers is your survey’s response rate, or the percentage of participants who respond to the survey. Your survey participants are the people who had the chance to complete it. For a custom or Net Promoter survey, the number of “Participants” is how many people you emailed the survey to. With page-based surveys, your “Participants” number is how many people were shown the survey on your website.

For Example...

Your on-site survey was shown to 500 visitors on your website. Of that number, 65 completed the survey, giving you a response rate of 13%. But is that good or bad? You want your response rate to be high because it makes your results more representative and more statistically reliable. But what constitutes a high response rate?

Email open rates can vary between 24% and 45% depending on the industry, with click rates of only 1-3%. For on-site surveys, response rates tend to vary depending on factors such as where the question is asked and whether the questions are open- or closed-ended.

For example, an exit survey will have much lower response rates than one asked to a highly engaged visitor. Furthermore, as we discussed in the previous chapter, closed-ended questions tend to have higher response rates than open-ended questions because they ask a little less of participants.

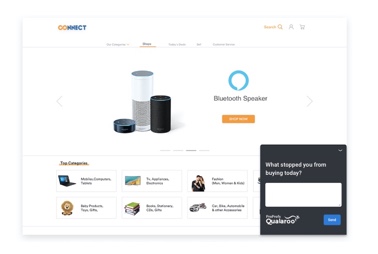

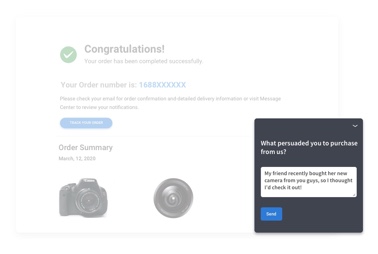

As a general rule, a 5-20% response rate is considered good for on-site surveys. Qualaroo's in-context surveys typically achieve higher rates (10-30%) due to their immediate placement within the user experience.

In addition to survey type, another important piece to the puzzle is your relationship with survey participants. Are these strangers visiting your site for the first time? New customers? Users who have been with you for years? It seems obvious, but the level of intimacy between your company and participants has a positive correlation with response rates.
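To answer the “is 13% good?” question from the example above, the calculation and the comparison can be sketched in Python. This is a minimal sketch: the function names are hypothetical, and the 5-20% range is simply the on-site rule of thumb quoted above, not a universal standard.

```python
# Rule-of-thumb "good" range for on-site surveys quoted in this guide, in percent.
ON_SITE_GOOD_RANGE = (5.0, 20.0)


def response_rate(completed: int, participants: int) -> float:
    """Percentage of participants who completed the survey."""
    if participants <= 0:
        raise ValueError("participants must be a positive number")
    # Multiply before dividing so whole-number results such as 13% stay exact.
    return 100 * completed / participants


def compare_to_range(rate: float, good_range: tuple = ON_SITE_GOOD_RANGE) -> str:
    """Describe where a response rate falls relative to a benchmark range."""
    low, high = good_range
    if rate < low:
        return "below the typical range"
    if rate > high:
        return "above the typical range"
    return "within the typical range"


# The on-site example: 65 completions out of 500 visitors shown the survey.
rate = response_rate(65, 500)
print(f"{rate:.1f}% is {compare_to_range(rate)}")  # 13.0% is within the typical range
```

Because your relationship with participants shifts what counts as a good rate, you may want to replace the default range with figures from your own past surveys.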

Other Types of Survey Data

Besides response rate, the next place to look in your analysis is the questions themselves. Different types of questions come with different considerations that you should take into account. Most survey questions can be grouped into 4 types: categorical, ordinal, interval, and ratio.

Categorical Data

Categorical data allows the survey taker to answer using a list of specific names or labels. For example:

"What do you like most about our product?"

- Customer Service

- Ease of Use

- Price

Categorical data is popular because it is the easiest type to analyze. Simply collect, count, and divide. However, you need to know which categories to include before the survey is put together. For example, if you don’t know which dimensions are important (e.g. customer service, price, etc.), you can start with an open-ended question, then use categories that reflect the most popular answers in a follow-up survey. Creating smaller iterative surveys is always better than an over-engineered survey that doesn’t ask the right questions.
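The “collect, count, and divide” step can be sketched with Python’s standard library. This is a minimal illustration; the function name `category_shares` and the sample answers are hypothetical.

```python
from collections import Counter


def category_shares(answers):
    """Collect, count, and divide: percentage of respondents choosing each label."""
    counts = Counter(answers)
    total = sum(counts.values())
    if total == 0:
        return {}
    # most_common() orders labels from most to least frequent.
    return {label: 100 * n / total for label, n in counts.most_common()}


# Hypothetical answers to "What do you like most about our product?"
responses = ["Ease of Use", "Price", "Ease of Use", "Customer Service", "Ease of Use", "Price"]
for label, share in category_shares(responses).items():
    print(f"{label}: {share:.1f}%")
```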

Ordinal Data

Ordinal data comes from any question whose responses only make sense in a particular order. For example:

"How much do you use our product?"

- Never

- Rarely

- Sometimes

- Often

- Always

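One important consideration with ordinal data is that the order in which answers are presented can itself influence responses. If possible, randomly reverse the order of the answers for different survey takers (for example, showing “Always” first to some and “Never” first to others).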
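Randomly reversing the scale per respondent might look like the sketch below. It assumes your survey tool records the label a respondent picks rather than its position on screen, so the analysis is unaffected by the display order; the function name is hypothetical.

```python
import random

SCALE = ["Never", "Rarely", "Sometimes", "Often", "Always"]


def options_for_respondent(scale, rng=random):
    """Return the answer options in natural or fully reversed order, at random.

    Reversing, rather than shuffling, keeps the options in a logical sequence,
    which matters for ordinal questions.
    """
    options = list(scale)
    if rng.random() < 0.5:
        options.reverse()
    return options


rng = random.Random(42)  # seeded only so this example is repeatable
print(options_for_respondent(SCALE, rng))
```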

Interval Data

Interval data needs to be ordered and the distance between the values needs to be meaningful. For example, an interval data question would be, “What is your budget?” where the answers would be a predetermined set of prices like “<5k, 10k, 15k”.

Interval data is useful for segmenting your participants and serving them only questions that are relevant to them. For example, if a user selects a “<5k” budget, you can ask them questions for your Small Business product.

It’s best to use equally sized intervals if possible, because they let you average the data and visualize a summary of it more clearly. If the intervals aren’t equal in size, treat the responses as categorical data rather than averaging them.
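One common way to average equally sized intervals is to treat each answer as the midpoint of its bracket. The sketch below assumes that approach, reads “<5k” as 0 to 5,000, and uses hypothetical bracket counts; the result is an estimate, not an exact mean.

```python
def has_equal_widths(brackets):
    """True if every (low, high) bracket spans the same range."""
    return len({high - low for low, high in brackets}) == 1


def midpoint_average(brackets, counts):
    """Estimate the mean by treating each answer as its bracket's midpoint."""
    if not has_equal_widths(brackets):
        raise ValueError("unequal intervals: summarize these as categories instead")
    total = sum(counts)
    weighted = sum((low + high) / 2 * n for (low, high), n in zip(brackets, counts))
    return weighted / total


# Hypothetical budget brackets in dollars, with "<5k" read as 0 to 5,000.
brackets = [(0, 5_000), (5_000, 10_000), (10_000, 15_000)]
counts = [40, 35, 25]  # hypothetical number of respondents choosing each bracket
print(midpoint_average(brackets, counts))  # 6750.0
```

The check for equal widths mirrors the rule above: when the brackets differ in size, the function refuses to average and you fall back to reporting counts per category.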

Ratio Data

Ratio data is the richest form of survey data but asks the most from participants. Any precise measurement on a scale with a true zero, such as an amount of money, is ratio data. For example, a ratio data question would be “What is your exact budget?” where the input field accepts any numeric response, such as “$501”.

Ratio data is the best choice if you want to calculate averages or measures of variance like standard deviation. This is because the other types of data are labels, ranks, or ranges rather than exact numbers, so averages and variances calculated from them are either meaningless or only approximate.
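With ratio data, Python’s built-in `statistics` module is enough for the basic summaries. The budget values below are hypothetical.

```python
import statistics

# Hypothetical answers to "What is your exact budget?", in dollars.
budgets = [501, 1_200, 2_500, 4_000, 800]

mean_budget = statistics.mean(budgets)
# stdev() is the sample standard deviation; pstdev() applies if you surveyed everyone.
spread = statistics.stdev(budgets)
print(f"Mean budget: ${mean_budget:,.2f}")  # Mean budget: $1,800.20
print(f"Standard deviation: ${spread:,.2f}")
```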

Understanding the Numbers

Now that you’ve gotten a handle on the types of data and what kind of analyses can be performed on each type, it’s time to go deeper into benchmarking, trending, and comparing data.

Let’s say that on your event feedback survey you ask, “How satisfied were you with the event overall?” Your results show that 80% of attendees were satisfied with the event. That sounds pretty good on its own. But what if last year’s satisfaction levels were at 90%? Or what if the average satisfaction for events in your industry was 95%?

If you asked this question at last year’s event, you can make a trend comparison. If you don’t have data from last year, you could make this the year you start collecting the same feedback after every event. Tracking the same measure over time like this is called benchmarking, or longitudinal data analysis.

In order to draw conclusions from a benchmark, it’s important to distinguish meaningful changes from noise. That’s where statistical significance comes into play.

What is statistical significance?

Statistical significance is a way to measure how likely it is that a result happened by chance rather than because of the treatment given in an experiment (or, in this case, because of something you asked about in the survey). A statistically significant result is one that would be very unlikely to occur by chance alone at the confidence level you choose (95% is a common choice). Drawing an inference from a result that is not statistically significant is risky because the result could just be noise.
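To make this concrete, here is one common check for the event example (80% satisfied this year versus 90% last year): a pooled two-proportion z-test. This is a sketch, not the only valid test. It assumes both years’ respondents are independent random samples large enough for the normal approximation, and the sample sizes are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(successes_a, n_a, successes_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    if pooled in (0, 1):
        raise ValueError("all or none of the respondents were satisfied; the test is undefined")
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / standard_error
    # Probability of a gap at least this large in either direction if nothing changed.
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical: 80% satisfied this year vs 90% last year, at two sample sizes.
large = two_proportion_p_value(160, 200, 180, 200)  # 200 respondents each year
small = two_proportion_p_value(40, 50, 45, 50)      # 50 respondents each year
print(f"200 per year: p = {large:.3f}")
print(f"50 per year:  p = {small:.3f}")
```

The same 10-point drop comes out below the usual 0.05 threshold with 200 respondents per year but well above it with 50, which is why sample size has to be planned before the survey runs.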

How do you figure out statistical significance for a survey?

Making sure your survey results are statistically significant requires preparation and cannot be done after the fact. So if you are reading this and have already run a survey without going through the steps below, you may need to create a follow-up survey. Luckily, the process is pretty easy:

- Understand how many people are in your target audience - This is simple. Your target audience could be all of the users of your product, or even broader. For example, all of the potential buyers of your product in the U.S.

- Calculate the number of people you need - Use an online calculator to find how many people you will need to survey. When you are doing this, you will need to choose a margin of error, which is a measure of inaccuracy expressed as a percentage. If you are alright with a higher margin of error, you will need to survey fewer people.

- Create a representative sample of your target audience - You will use the number from the earlier step to choose a random sample of survey participants. The “random” part of this is important. If survey participants are cherry picked to fulfill specific criteria, they will no longer be a representative sample of the entire audience.
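Online calculators differ in their exact method, but many use a formula like Cochran’s, with a correction for finite audiences. The sketch below assumes that formula, a 95% confidence level, and a 50/50 expected split (the conservative choice when you don’t know how answers will fall); the audience of 10,000 users is hypothetical.

```python
import math
import random
from statistics import NormalDist


def sample_size(population, margin_of_error=0.05, confidence=0.95, proportion=0.5):
    """Cochran's sample-size formula with a finite population correction."""
    z = NormalDist().inv_cdf((1 + confidence) / 2)  # about 1.96 for 95% confidence
    n0 = z**2 * proportion * (1 - proportion) / margin_of_error**2
    return math.ceil(n0 / (1 + (n0 - 1) / population))


def draw_random_sample(audience, size, seed=None):
    """Pick participants uniformly at random, without replacement."""
    return random.Random(seed).sample(audience, size)


# Hypothetical target audience of 10,000 users.
audience = [f"user-{i}" for i in range(10_000)]
needed = sample_size(len(audience))  # 370 at a 5% margin of error and 95% confidence
participants = draw_random_sample(audience, needed, seed=7)
print(needed, len(participants))
```

Note that this is the number of completed responses you need. Since not everyone responds, you may have to invite more people: at a 13% response rate, collecting 370 responses means showing the survey to roughly 2,850 people.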

A few more points should be taken into consideration when analyzing survey results:

- First of all, consider the purpose of your research. If your goal is to merely gain insight, a high response rate might not be as important—feedback is feedback and all of it contributes to increased understanding of your issue or concern. If you want to measure effects, however, then a high response rate is more critical. For example, you can’t determine if a change you made to your product is actually for the better if the majority of your participants don’t complete the follow-up survey.

- Segment, segment, segment. We can’t overstate the importance of segmentation. You can break down responses based on a variety of variables, such as existing customers vs. inactive customers, dollar amount spent, and so on. The more ways you slice and dice your customers (so long as you segment based on variables meaningful to your business), the more insight you’ll get.

- Study similar respondents using cohorts. Looking at cohorts helps you account for changes in your traffic and usage patterns over time. You might learn, for example, that visitors who came to your site in June from a Groupon offer are less satisfied than the regular customers who came in July.

- Avoid averages! Averages give average insights. Segments, cohorts, and eliminating certain responses from your evaluation (like the Net Promoter Score® does) are the best ways to get more clarity from your data.

- Avoid aggregate data. Just like with averages, when data is aggregated it loses its ability to give insights. Stick to more meaningful analysis methods like the ones mentioned above.

- Context is key. When comparing numbers, use ratios to understand context. Is 50% a good number? Only in relation to some other number.

- If you are trying to measure effects, it’s also important to understand the difference between descriptive and inferential statistics. Descriptive statistics are just that: they summarize your data, and typically refer to measures such as sample size, mean age of participants, percentage of males and females, range of scores on a study measure, and so on. Inferential statistics, on the other hand, help you establish statistical significance by taking into consideration things like confidence intervals and parameter estimates. (Source)

- It’s important to establish a sampling frame. All participants must have equal opportunity to respond, and you should give some thought to how you define the total population from whom you want information, as well as how to take an unbiased sample from that population.

- To minimize human error and bias, automate the coding and cataloging of data as much as you possibly can. Many basic survey tools (which we’ll discuss more fully in Chapter 11) will take care of the statistical analysis of your data for you. If you end up using a survey tool that doesn’t, however, consider storing raw survey results in a spreadsheet, which makes it easy to export them to a secondary statistics package if you decide to use one. For large surveys, a database is probably preferable to a spreadsheet for storing that much raw data.
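Segmentation, cohorts, and Net Promoter-style scoring from the list above can be combined in a few lines. This minimal sketch uses the standard NPS buckets on a 0-10 scale (promoters 9-10, passives 7-8, detractors 0-6); the cohort labels and scores are hypothetical, loosely modeled on the Groupon example.

```python
from collections import defaultdict


def net_promoter_score(scores):
    """% promoters (9-10) minus % detractors (0-6).

    Passives (7-8) count toward the total but neither add to nor subtract from the score.
    """
    if not scores:
        raise ValueError("no scores to evaluate")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_segment(responses):
    """Group (segment, score) pairs and compute an NPS for each segment."""
    groups = defaultdict(list)
    for segment, score in responses:
        groups[segment].append(score)
    return {segment: net_promoter_score(scores) for segment, scores in groups.items()}


# Hypothetical 0-10 responses tagged with an acquisition cohort.
responses = [
    ("June / Groupon", 6), ("June / Groupon", 9), ("June / Groupon", 4), ("June / Groupon", 7),
    ("July / regular", 10), ("July / regular", 9), ("July / regular", 8), ("July / regular", 5),
]
print(nps_by_segment(responses))
```

Reporting one score per cohort, instead of a single blended number, is what surfaces the gap between the two groups.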

At the risk of repeating ourselves, we want to remind you that it’s important to stay focused on the purpose of your research. Depending on what you want to do with the data gained from your survey, to varying degrees the points above will be highly relevant or totally inapplicable to your situation.

The same holds true for the analysis itself. There are several logical ways of making sense of survey results, but there’s no right or wrong way, only what makes the most sense in the context of what you are trying to accomplish. You might consider…

- Counting the frequency of response to each option.

- Cross-tabulating responses to one series of questions or options against another series of questions or options. (Source)

- Measuring the change in response to a question over time.

- Measuring responses by other attributes, such as demographics, cohorts, and traffic source.

- Comparing metrics important to your business—for example, customers who’ve spent more than $500 with you versus those who have spent $0.

- Anything else that makes sense in light of your user base and end goal.
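Cross-tabulation, one of the options listed above, amounts to counting how often each combination of answers occurs. Here is a minimal pure-Python sketch; the field names and the spend groups (echoing the $500 versus $0 comparison) are hypothetical.

```python
from collections import Counter


def cross_tab(rows, row_key, col_key):
    """Count how often each pair of answers to two questions occurs together."""
    return Counter((row[row_key], row[col_key]) for row in rows)


def print_table(table):
    """Print a simple grid; Counter returns 0 for combinations nobody chose."""
    row_labels = sorted({r for r, _ in table})
    col_labels = sorted({c for _, c in table})
    print(" " * 12 + "".join(c.ljust(18) for c in col_labels))
    for r in row_labels:
        print(r.ljust(12) + "".join(str(table[(r, c)]).ljust(18) for c in col_labels))


# Hypothetical responses: spend group vs. answer to "What do you like most?"
responses = [
    {"spend": "$0", "likes_most": "Price"},
    {"spend": "$0", "likes_most": "Price"},
    {"spend": "$0", "likes_most": "Ease of Use"},
    {"spend": "over $500", "likes_most": "Customer Service"},
    {"spend": "over $500", "likes_most": "Ease of Use"},
    {"spend": "over $500", "likes_most": "Customer Service"},
]
table = cross_tab(responses, "spend", "likes_most")
print_table(table)
```

For larger datasets, a library such as pandas (`pandas.crosstab`) produces the same kind of table with less code.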

It’s likely that after analyzing your results you’ll have to also present them in some way. Whether you plan to publish your survey results on your site or distribute them to investors or team members, a basic format to follow is:

- Begin with the headline results or key takeaways.

- Follow it up with an explanation of your process.

- Wrap it up with a conclusion based on the data.

It’s normal (and somewhat unavoidable) for you to form your own opinions about the data. As Usability Net points out, “These are important, and you should present them carefully, marking them as your extrapolations from the data so as not to confuse objective fact with your subjective opinion.”

We’re going to say it one more time: Don’t lose sight of your survey goals. If you find yourself stressing over some minutiae in the data, take a step back and consider what your objectives are. In the grand scheme of things, does the detail you’re hung up on matter? If, for example, you want to improve your landing page and a majority of your users report that your navigation bar is a serious point of confusion, does it matter if this majority is 85.6% or 86%? Probably not.