If it were easy to create remarkable surveys and collect rich insights from flawless research just like that, we wouldn’t be writing this article.

If you’ve ever made survey errors while phrasing the questions, adding answer options, sampling the audience, or leaving biases undetected, you’re not alone.

But can we let that happen again? NO.

So, what can we do? Equip you with the knowledge of “what not to do,” i.e., the types of errors you must avoid, and show you how to correct them if you do make them.

It will empower you to create flawless surveys, collect valuable data, and make the right business decisions.

But first, you need to understand what qualifies as an error.

What Do Survey Errors Mean?

Survey errors are mistakes that may happen while creating the surveys, deploying them, setting the parameters, or targeting the audience.

They can be anything from a typo to the wrong answer options for a question to an incorrect analysis of the results.

Because these errors are so diverse, we will discuss each one in detail later in this blog to paint a clear picture of “what not to do.”

If you are wondering how consequential an error can be, consider this: a simple error in targeting, ordering, or selecting questions, or even in how you phrase them, can irrevocably damage the quality of your feedback data and, as a result, your analysis.

For example, a simple error in framing a question like “How much will you rate your love for XYZ products?” nudges customers toward a positive answer.

Why? Because the word “love” leads respondents to believe you are looking for a positive response.

Consequently, the responses you will get to this question will be biased and not authentic.

Does it not defeat the whole purpose of creating a survey in the first place?

So, now you know why you should familiarize yourself with these errors even before you make them.

26 Types of Survey Errors to Avoid

Instead of just listing the survey errors, we have grouped them into categories. This way, when you design your survey, you can watch out for these sneaky errors at each stage.

Survey Question Errors

This section will cover all the significant survey errors commonly made while selecting and phrasing questions.

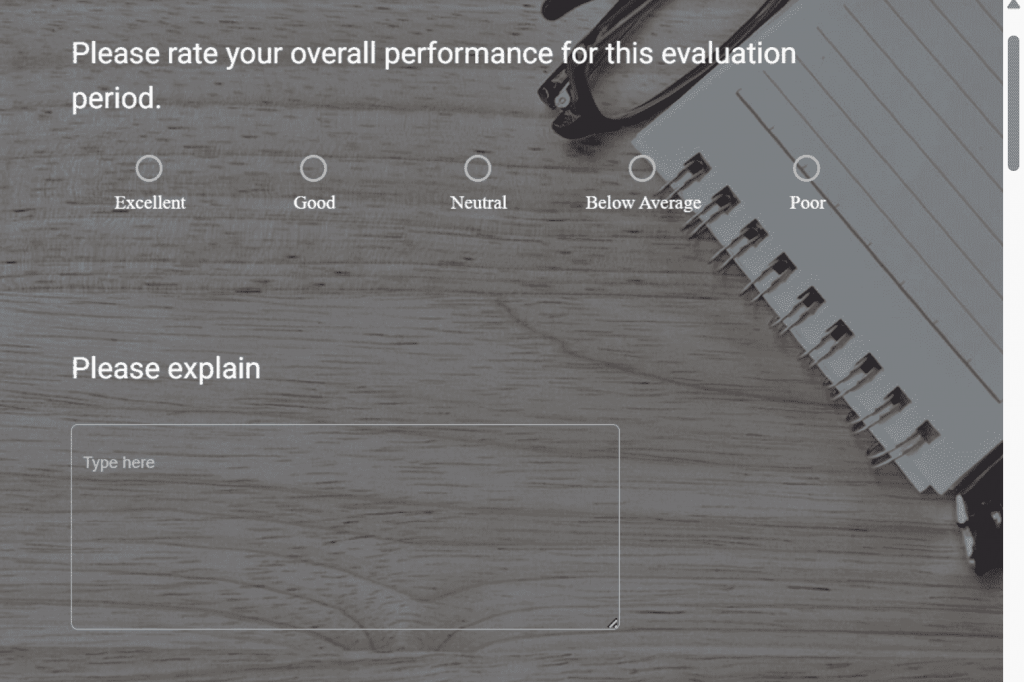

1. Asking Too Many Questions

Imagine this: You land on a website, agree to take a survey out of curiosity, and BAM! It’s a never-ending question-fest.

Adding too many questions makes your surveys frustrating for respondents, however genuine your intention to collect as much data as you can.

Example: You are creating a survey to explore the marketing channels that work for you to bring new leads and traffic.

For this, you finalize a series of questions that you think will give detailed insights. But, somewhere in the process, you lose sight of what to ask and add a bunch of questions you momentarily think would make sense.

In reality, though, they are just baggage: you could collect precise feedback without them.

How to avoid: Once you have all the questions listed down, think about the kind of answers they will fetch. Don’t include those questions if you think the responses won’t add to your required data.

You can also use skip logic to ensure that you only ask the right questions to the right customers.

And if you feel that all the survey questions are required and cannot be eliminated, try dividing the questions into short surveys that are easy for customers to take. You can add them at different touchpoints in your customer journey.

Or you can start with the end in mind: decide the survey goals, map the questions to these goals, and eliminate the ones that do not fit anywhere.
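Under the hood, skip logic is just a rule that picks the next question based on the previous answer. The Python sketch below shows the idea using the pet question discussed in the next section; the `Question` model, its `skip_to` and `next_id` fields, and the "stay_length" question are hypothetical and do not reflect Qualaroo’s or any other tool’s API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Question:
    qid: str
    text: str
    # Hypothetical skip rules: answer -> id of the question to jump to.
    skip_to: dict[str, str] = field(default_factory=dict)
    # Where to go when no rule matches; None ends the survey.
    next_id: str | None = None


def questions_shown(questions: dict[str, Question], start: str,
                    answers: dict[str, str]) -> list[str]:
    """Walk the survey and return the ids of the questions a respondent sees."""
    seen: list[str] = []
    current: str | None = start
    while current is not None:
        if current in seen:
            raise ValueError(f"skip rules loop back to {current!r}")
        seen.append(current)
        q = questions[current]
        # Follow a matching skip rule, otherwise fall back to the default next question.
        current = q.skip_to.get(answers.get(current, ""), q.next_id)
    return seen


survey = {
    "has_pets": Question("has_pets", "Do you have pets?",
                         skip_to={"No": "stay_length"}, next_id="pet_types"),
    "pet_types": Question("pet_types", "What type of pets do you have?",
                          next_id="stay_length"),
    "stay_length": Question("stay_length", "How many nights do you usually stay?"),
}

print(questions_shown(survey, "has_pets", {"has_pets": "No"}))
# ['has_pets', 'stay_length']
```

Respondents who answer "No" never see the pet-type question, which keeps the survey short for them.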

2. Confusing Single-Choice and Multiple-Choice Questions

It’s common to confuse single-choice and multiple-choice questions (MCQs) while designing surveys.

Single-choice questions offer multiple answer options but let respondents select only one. Multiple-choice questions, on the other hand, let respondents select more than one option.

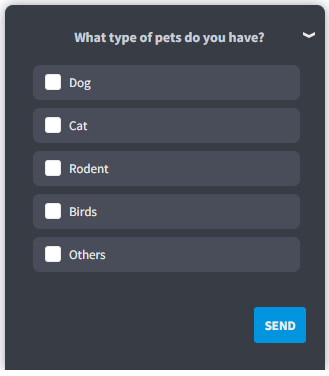

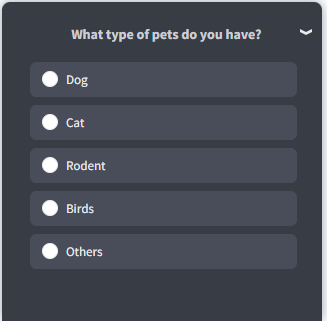

Example: Suppose you are a hotel chain that wants to introduce pet grooming services. You plan to create a survey asking customers the kinds of pets they have to map out your audience type and target population.

So, you first ask a question “Do you have pets?” and only the customers who answer ‘yes’ will move on to the next question.

Now, you have a question like “What type of pets do you have?”

Ideally, you should add a multiple-choice question here with checkbox answers so that customers with more than one type of pet can answer correctly, but instead, you add a single-choice answer option with a radio button.

MCQ Answer Option

Radio Button Option

How to avoid: Once you know how to ask for specific feedback, think about the responses you can get.

For example, in the above question, you need to think of the customers with more than one pet.

When you rightly anticipate the type of responses you can get, it’s easier to decide which answer type is ideal for your survey question.

You can also use branching logic and add a comment box to responses so that customers can elaborate on their choices, giving you more specific feedback.

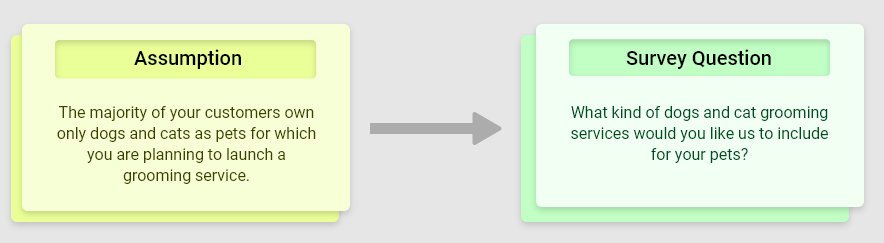

3. Framing Assumptive Questions

Assumptive questions are framed around the researcher’s assumptions.

They reflect what the researcher knows rather than what the respondents taking the survey know.

That’s why they may make sense to the researcher while leaving respondents lost.

Let’s take the hotel chain example.

Example: Suppose you want to expand your business by launching an iOS app first and exploring the features customers want.

An assumptive question would directly ask customers what they expect from your iOS app before asking whether they use iOS or another operating system.

How to avoid: To identify assumptive questions, review all the questions and check whether each assumption is established by an earlier question.

You can also ask an unbiased person who matches your customer demographic and profile to review your survey.

4. Going Overboard with Open-Ended Questions for In-Context Feedback

Don’t get us wrong. We love some insight-rich open-ended questions as much as the next person.

But, as they say, excess of anything is bad!

It’s already a challenge to get customers to take surveys, and adding more open-ended questions than you need only makes it harder.

Example: Open-ended questions require customers to write detailed answers. Suppose you want to know what customers think of your website and their experience navigating it.

Of course, you’d want a detailed account of each feature to understand the kind of customer experience you offer, but it’s not ideal for your customers.

The more open-ended questions you add, the more time and effort the survey takes, and that is not a recipe for high completion rates.

How to avoid: Use closed-ended questions, each with a follow-up question, to collect in-context feedback. Make sure to add a skip option so that customers don’t feel pressured into answering questions they don’t want to answer.

Also keep in mind that open-ended questions suit qualitative research, whereas quantitative research relies on closed-ended questions.

Watch: How to Create In-Context Surveys

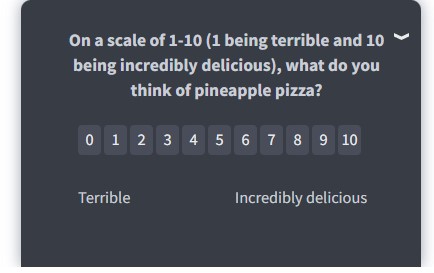

5. Framing Leading Questions

Sometimes you might unintentionally frame survey questions in a way that reflects your bias toward an ideal response. Respondents may then answer the way they think you prefer, skewing the feedback data.

Do not lead your participants into answering a certain way. For instance, adding “right?” to a question asks respondents to validate what you are saying, so they may pick “Yes” as the favored answer. But tag questions like this are not the only way to lead respondents.

Example: Here’s what a leading question looks like:

“On a scale of 1-10, how much will you rate your love for pizza?”

Using a word like “love” may lead respondents to think that you have a bias for pizza and favor high scores.

Here’s how we can reframe this rating-scale question to remove the unintentional bias:

“On a scale of 1-10 (1 being terrible and 10 being incredibly delicious), what do you think of pineapple pizza?”

How to avoid: Avoid using vocabulary that conveys specific emotions and try to frame neutral questions.

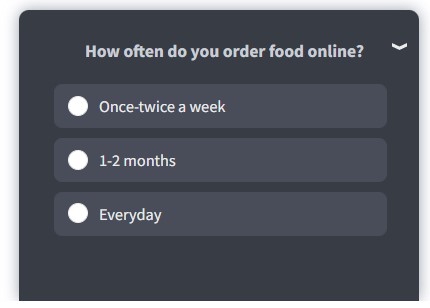

6. Using Absolutes in Questions

Absolutes such as “Every,” “All,” “Ever,” “Never,” and “Always” can push respondents toward biased answers. They make your questions rigid, making it harder for respondents to give genuine feedback.

Example: Asking questions like “Do you always order your food online?” will pressure participants into answering ‘No.’

Questions with absolutes are usually dichotomous, i.e., they offer only ‘Yes’ and ‘No’ as answer options.

However, dichotomous questions don’t always carry a bias. They help segment respondents effectively.

For example, a simple question like “Do you have any pets?” works well with dichotomous answer options.

How to avoid: Frame your questions without absolutes. Let’s rewrite the question above accordingly.

It would become “How often do you order food online?” with appropriate frequency options.

Sampling Errors

These are the errors in surveys that occur while defining the targeted survey audience.

7. Population-Specific Error

As the name suggests, population-specific errors occur when the researcher cannot clearly specify the target audience for the survey.

It is also called coverage error, as specific demographics are left out of the pool of participants.

With an unclear target audience, survey questions become random, with no specific end goal in mind. Researchers are left unsure about what kind of feedback they want.

Such feedback will yield unreliable and skewed results.

Example: Suppose you want to target online gamers and choose a sample from the 15-25 age group.

If you continue with this sample population, you might not get the best results since most people in this age group might not be making their own financial decisions yet.

You will also be excluding other age groups that are online gamers and have a high spending capability.

How to avoid: You need to assess the objective of your survey and then carefully choose the right sample population, ensuring you aren’t missing any key demographics.

In the example above, a better option is to choose people in the 18-35 age group who are financially capable and interested in gaming.

Watch: Advanced Survey Targeting Tips

8. Sample Frame Error

A sampling frame error occurs when the list you draw your sample from does not accurately represent the target population. It distorts your survey design and ultimately produces erroneous results.

Example: Suppose you own a clothing brand and want to launch a collaboration collection.

You pick a sample from the high-income bracket. You may get luxury brands as suggestions, since that group can afford such goods, but the results don’t reflect the preferences of your entire customer base.

How to avoid: Draw a random sample from a list that covers your entire customer base to minimize such sampling errors.
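Here is a minimal Python sketch of simple random sampling. It assumes you can export a list of customer IDs that covers your whole customer base (the IDs below are made up); the standard library’s `random.Random.sample` gives every customer on the list the same chance of selection, so the sample mirrors the whole list rather than one hand-picked bracket.

```python
from __future__ import annotations

import random


def simple_random_sample(frame: list[str], k: int, seed: int | None = None) -> list[str]:
    """Pick k distinct members of the sampling frame, each equally likely.

    Random selection only helps if the frame itself covers the whole
    population; it cannot recover groups that are missing from the list.
    """
    if k > len(frame):
        raise ValueError("sample size is larger than the sampling frame")
    rng = random.Random(seed)  # pass a seed to make the draw reproducible
    return rng.sample(frame, k)


customers = [f"customer-{i}" for i in range(1, 1001)]  # hypothetical customer IDs
invitees = simple_random_sample(customers, 50, seed=7)
print(len(invitees))  # 50
```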

FUN FACT


One of the most famous sample frame errors occurred in the Literary Digest poll for the 1936 presidential election.

The poll predicted that Alfred Landon would win, but Franklin Roosevelt won by a significant margin. The error happened because most of the Literary Digest’s subscribers were wealthy and tended to vote Republican.

9. Selection Error

Selection error occurs when only respondents who are interested in the survey volunteer to participate, even if they are not part of the sample you need.

Example: You only rely on the responses from a small portion of respondents who respond immediately and exclude customers who don’t.

This way, you’ll get insights from a small group of respondents and miss out on crucial information that non-responders could have given you.

How to avoid: You can eliminate this error in two steps:

Step 1: Encourage participation in the surveys so that you have the right sample size for your survey. You can incentivize your surveys and approach the participants pre-survey to ask for their cooperation.

Step 2: Screening questions, or screeners, are a great way to weed out unsuitable participants. You can create a pre-survey with these screeners to filter out unwanted respondents.

10. Non-Response Error

Non-response error gets its name from situations where selected respondents from a sample do not respond. There could be any number of reasons why customers don’t reply.

A non-response error may lead to over- or under-participation of specific demographics, affecting the results.

The lack of response also means that you won’t have the required sample size to establish the statistical significance and reliability of the data.

Example: Let’s say you send a long-form survey to respondents via email, and some of them don’t respond. You will end up with responses from only some demographics, which gives you only part of the puzzle.

How to avoid: Send follow-up reminders to respondents who haven’t taken the survey, so that every targeted audience segment is represented in your data.
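Before sending reminders, it helps to see which segments are under-represented. This Python sketch computes the response rate per segment; the data shapes (a mapping of respondent ID to segment label and a set of completed IDs) and the sample data are assumptions for illustration.

```python
from __future__ import annotations

from collections import Counter


def response_rates(invited: dict[str, str], completed: set[str]) -> dict[str, float]:
    """Share of invited respondents in each segment who completed the survey.

    invited: respondent id -> segment label
    completed: ids of respondents who finished the survey
    """
    invited_per_segment = Counter(invited.values())
    completed_per_segment = Counter(
        segment for rid, segment in invited.items() if rid in completed
    )
    return {
        segment: completed_per_segment[segment] / total
        for segment, total in invited_per_segment.items()
    }


invited = {"a": "new", "b": "new", "c": "returning", "d": "returning"}
print(response_rates(invited, completed={"a", "b", "c"}))
# {'new': 1.0, 'returning': 0.5}
```

Segments with low rates, like `returning` here, are the ones to prioritize in your follow-ups.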

11. Sample Insufficiency Errors

Errors such as non-response leave out an essential part of the targeted demographic, resulting in sample insufficiency errors.

Example: If you conduct a survey and only a small fraction of the target audience responds, the sample and its feedback will be insufficient.

How to avoid: Besides sending follow-up emails, you need to determine why respondents don’t respond:

Are some of your survey questions inappropriate?

Are they worded unclearly?

Is the survey too long?

Design your surveys carefully and choose a large enough sample. This way, even if some respondents don’t reply, you will still have enough responses.

Many free online sample size calculators can help you figure out how many responses your survey needs.
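Many of these calculators are based on Cochran’s formula for estimating a proportion, n₀ = z²·p(1−p)/e², optionally adjusted for a finite population of size N with n = n₀ / (1 + (n₀ − 1)/N). The Python sketch below implements that formula; the defaults (95% confidence, p = 0.5, a 5-point margin of error) are common conventions rather than requirements, and a given calculator may use slightly different inputs.

```python
from __future__ import annotations

import math


def sample_size(z: float = 1.96, p: float = 0.5, margin: float = 0.05,
                population: int | None = None) -> int:
    """Completed responses needed to estimate a proportion (Cochran's formula).

    z: z-score for the confidence level (1.96 is roughly 95%)
    p: expected proportion; 0.5 is the most conservative choice
    margin: acceptable margin of error (0.05 = plus or minus 5 points)
    population: size of the target population, if known
    """
    n0 = z ** 2 * p * (1 - p) / margin ** 2
    if population is not None:
        # Finite population correction: small populations need fewer responses.
        n0 = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n0)


print(sample_size())                   # 385
print(sample_size(population=10_000))  # 370
```

This gives the number of completed responses, not invitations. If you expect, say, a 20% response rate, you would need to invite roughly 385 / 0.2 = 1,925 people.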

Response Bias

Response bias refers to different biases survey respondents may have, potentially affecting how they answer the survey. Here are some of the response biases and tips to avoid them.

12. Order Bias: Primacy, Recency, Contrast, and Assimilation Effects

How you order your answer options can significantly affect the responses. The arrangement of answer choices can produce two types of response bias: recency bias and primacy bias.

Recency bias is when respondents choose the last option in the list because it is the one they read most recently, so they remember it best.

Primacy bias is when respondents choose one of the first options because they don’t read through all the choices. It mainly happens in MCQ-type questions.

Example: Suppose you ask respondents “What is your favorite series from XYZ OTT platform?”

Now, chances are that customers will either choose the first option since they don’t want to read all options, or they may choose the last option since they read it most recently.

The order of questions can also have two other effects on respondents: contrast and assimilation.

The contrast effect is when an earlier question pushes answers to a later question in the opposite direction, and the assimilation effect is when it pulls them in the same direction.

Example: Suppose you ask your employees about their issues with the reporting manager and then ask if they are happy in the workplace.

Now, there is a possibility that they would have answered the questions differently if the order had been reversed.

Their second answer will be influenced by the answer to the first question.

How to avoid: The best way to combat these errors is randomization. You can randomize your answer options for every respondent so that each option has a fair chance of being picked.

As for the question order bias, make sure that your questions don’t affect the answers to the following questions.
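Randomizing per respondent can be as simple as shuffling the options with a random generator seeded by the respondent’s ID, so each person sees a stable order while the order varies across people. The Python sketch below also keeps catch-all options such as “Other” at the end, a common convention that is our assumption rather than part of the advice above; the option names are made up.

```python
from __future__ import annotations

import random

CATCH_ALL = ("Other", "None of the above")  # options that should stay last


def randomized_options(options: list[str], respondent_id: str) -> list[str]:
    """Return the answer options in a per-respondent random order.

    Seeding with the respondent ID makes the order reproducible for that
    person (e.g. if they reload the page) while differing between people.
    """
    rng = random.Random(respondent_id)
    shuffled = [o for o in options if o not in CATCH_ALL]
    pinned = [o for o in options if o in CATCH_ALL]
    rng.shuffle(shuffled)
    return shuffled + pinned


options = ["Series A", "Series B", "Series C", "Series D", "Other"]
print(randomized_options(options, "respondent-42"))
```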

13. Not Making Sensitive Surveys Anonymous

When survey questions are personal, respondents tend to answer untruthfully. They may not want to disclose the truth, may want to avoid negative consequences, or may try to impress the researcher by giving the answer they think is favored.

Example: Suppose you create a survey asking your employees about the shortcomings of their respective teams and what they need to improve.

Chances are they won’t answer truthfully for fear of the consequences.

How to avoid: You can make your surveys anonymous so that respondents can answer honestly without risk of being exposed. Yes, doing so will affect accountability, but it’s better than having not-so-genuine answers.

Design Errors

Design errors are the survey errors made while designing and putting together the survey. Let’s see what they are and how you can avoid making those errors in your surveys.

14. Adding Too Many Answer Options

It’s one thing to offer enough choices for respondents, but quite another to add more choices than necessary just to give more options. The ‘paradox of choice’ states that the more options a person has, the harder it is to choose.

Example: You have probably experienced this when shopping: you stare at never-ending shelves and aisles of different versions of the same product.

In the same way, adding too many choices to your surveys may confuse the respondents, and they might end up choosing an option randomly, skewing your results.

How to avoid: Instead of providing every possible answer option, you can add the “Others” option with an open-ended question like “Can you please specify” to collect their in-context feedback.

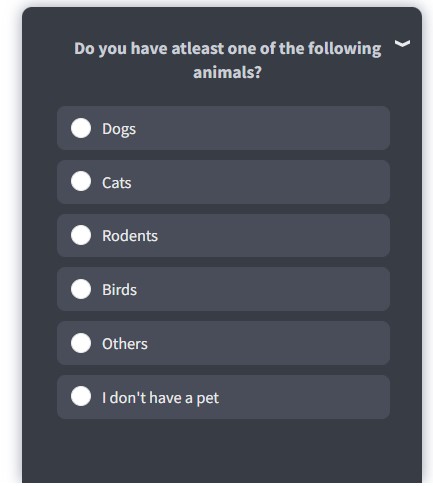

15. Not Enough Response Options

Closed-ended questions are great for generating structured data sets because they allow only predefined options, but this only works when your answer options cover all the possible responses from the target audience.

Otherwise, respondents may choose an answer randomly, affecting data reliability.

Often, surveys have good questions, but the choices are inadequate because the researchers didn’t anticipate every response.

This produces inaccurate feedback data, as respondents pick the option closest to what they want to say.

In the worst case, they may abandon the survey altogether.

Example: Continuing the hotel chain example, say you don’t add options like ‘Others’ and ‘I don’t have a pet.’ Respondents with pets other than those mentioned in the survey have no way of answering.

How to avoid: If you are unsure what options you should add, the best thing to do is add an “Others” option followed by a follow-up question using branching logic to allow respondents to add their actual responses.

16. Using Jargon and Unfamiliar Language

Using complicated language in your questions makes it harder for respondents to understand what you mean, which affects their ability to answer accurately.

Complicated vocabulary such as technical jargon is not commonly used in conversations and may confuse the respondents.

Example: Let’s assume you offer SaaS-based customer feedback software, and you want to ask newly joined customers for feedback on your product’s features.

So, while creating a survey, if you use vocabulary such as ‘customer experience software,’ respondents may not get it in one go as they might be more familiar with words like feedback or survey software.

Another example is the complicated sentence structure.

Complicated Language: What was the state of the cleanliness of the room?

Ideal Language: How clean was the room?

How to avoid: Try to keep the language of the surveys as understandable and straightforward as possible.

Avoid complicated sentences, and phrase questions in the active voice with everyday vocabulary.

If you can’t avoid jargon or technical words, you can add a little note explaining their meaning.

17. Not Giving Any Opt-out Option

Another survey error researchers make is not making the questions optional or skippable. Not all customers will want to answer all types of questions in your survey, especially if they are sensitive.

Questions about race, income, ethnicity, religion, and more are considered sensitive. So, if you don’t give them an option to avoid the question, they will either leave the survey or answer randomly.

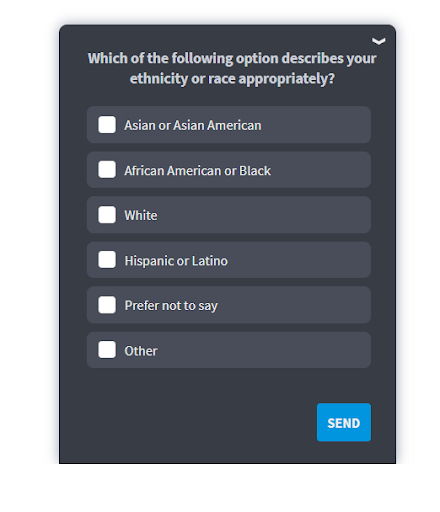

Example: Say you ask customers, “Which of the following options best describes your ethnicity or race?” and you don’t provide an option for not answering or skipping the question.

How to avoid: Make the question optional or add an answer option like “I prefer not to say.” You can also add skip logic so customers can move on to the next question.

Data Collection Errors

Data collection errors occur while collecting feedback through surveys, but their solutions lie much earlier in the process. Let’s look at some of these errors:

18. Generalized Questions Without Segmentation

Asking every respondent the same question without considering where they are in the customer journey makes you look unprofessional and frustrates respondents.

Example: Suppose you ask a question in your survey “What was your first impression of our website?”

Although this question is perfect for market research to ask your first-time visitors, it won’t make sense to your regular customers.

How to avoid: Before you start designing your survey, decide which customer segments you want to target and how many. Then plan a survey flow that specifies which questions go to which segments.

To do this, you need a tool like Qualaroo, a customer feedback software that allows you to design delightful surveys and offers features like advanced targeting.

It will allow you to segment prospects from regular customers so only the new customers can see the survey.

For example, if you want to target visitors on the pricing page, you should ask them what they are looking for, offer them a demo, or ask them if they would like to talk to your sales representative.
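Conceptually, segmentation is a set of targeting rules evaluated before a question is shown. The Python sketch below encodes the two cases above, first-time visitors and pricing-page visitors; the `Visitor` fields and the "/pricing" path are hypothetical stand-ins for whatever attributes your survey tool actually exposes.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Visitor:
    # Hypothetical attributes; real tools expose their own targeting fields.
    visit_count: int
    is_customer: bool
    page: str


def pick_question(visitor: Visitor) -> str | None:
    """Return the question to show this visitor, or None to show nothing."""
    if visitor.page == "/pricing":
        return "What are you looking for today?"
    if visitor.visit_count == 1 and not visitor.is_customer:
        return "What was your first impression of our website?"
    return None  # regular customers elsewhere on the site see no survey


print(pick_question(Visitor(visit_count=1, is_customer=False, page="/")))
# What was your first impression of our website?
```

Rule order matters: in this sketch, a first-time visitor on the pricing page gets the pricing question.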

19. Measurement Errors

Measurement errors are discrepancies between the data collected through a survey and the true values it is meant to capture.

This type of error can stem from various elements of the survey campaign, such as respondents, interviewers, questionnaires, and the mode of data collection. It is a type of non-sampling error.

Example: When a researcher adds unsuitable survey questions that prevent respondents from offering objective feedback.

How to avoid: You can check your survey results by comparing them with those of a previous, similar campaign.

If you want to avoid this error entirely, check every aspect of your research. It includes everything like survey instruments, your sample for participants, and targeting the right people for your survey.

20. Redundant Questions

One sure thing that drives respondents to abandon a survey is repetitive questions that don’t make sense.

Be very specific about what information you are asking for, and make sure you don’t ask for it twice.

Example: You created a survey and required respondents to enter their email address to take the survey, and then again, you ask them to enter their contact details, including email ID, in the survey.

Respondents will feel frustrated as they have to repeat information and may even abandon the survey.

How to avoid: Review your process and survey questionnaire to ensure nothing overlaps in order to reduce survey fatigue.

Survey Accessibility Errors

Designing a survey campaign without considering the multiple channels your participants may use creates a survey accessibility error, because the survey is not optimized for each medium.

21. Not Focusing on Mobile-Optimized Surveys

Many processes and industries have moved online, so mobile-optimized solutions are the need of the hour. Many people use their smartphones to interact with your brand, so making your surveys mobile responsive is crucial.

Example: Suppose you design a non-responsive survey and target participants who use mobile devices. Since the survey is not mobile-optimized, the participants won’t be able to read it properly, and you will face a high survey abandonment rate.

How to avoid: Ensure that your survey is compatible in-app, on-site, and across all mobile devices so that the respondents can answer at their convenience.

22. Inconsiderate Multimedia Usage

If this article did not have any images or videos, you would have found it monotonous, no matter how insightful it is, right?

Just like with content, adding multimedia to your surveys is a great way to make them more engaging.

However, you also need to consider scenarios in which some participants can’t access that multimedia. In those situations, your feedback data gets compromised because participants won’t answer authentically.

Example: Say you add images to your survey, but some of your participants have a faulty internet connection and can’t load the images. Here, they will either abandon the survey or answer randomly.

How to avoid: Add multimedia that is not heavy in size and can be loaded quickly on every device.

Marketing-Related Errors

Marketing-related errors are the mistakes you might make while marketing your survey to the targeted audience. Let’s discuss these errors with their possible solutions.

23. Not Adding Introduction to the Surveys

Introductions are crucial for research surveys. They help respondents understand the purpose of the survey and what it aims to achieve through the feedback.

Pop-up surveys or short-form surveys do not require introductions since they are not as time-consuming or commitment-oriented as long-form research surveys.

Example: Suppose you want to map customer behavior and preference for online food delivery.

If you start asking questions right off the bat without offering any background information about the survey, respondents might have reservations about answering the questions.

Why? Because your intent for collecting feedback and their information is not clear yet.

How to avoid: Analyze what information you want to gather from respondents. If you are asking for personal data, it’s important to explain every relevant detail about your survey.

Some points to add to your introduction are:

- The time it will take to complete the survey

- Purpose of the survey

- How you will use the data.

24. Forcing Customers to Take Your Survey

It’s only natural to market your survey across different platforms and encourage customers to take it, but it’s not ideal to push your customers for it.

Example: Many businesses blur the line between encouraging customers to take a survey via emails or blogs and forcing it on them by sending emails incessantly.

How to avoid: Through advanced targeting, you can ensure that customers who aren’t interested in taking the survey have an option to say so, and based on that, you can exclude them from further follow-up emails.

Try to find the balance between motivation and intrusive marketing for your surveys so you don’t lose your customers.
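The exclusion logic behind this is simple. In the Python sketch below, anyone who has responded or opted out is dropped from the next reminder, and nobody gets more than a fixed number of reminders; the data shapes, the IDs, and the cap of two reminders are assumptions for illustration.

```python
from __future__ import annotations


def reminder_list(invited: list[str], responded: set[str], opted_out: set[str],
                  reminders_sent: dict[str, int], max_reminders: int = 2) -> list[str]:
    """Return the ids that should receive the next reminder email."""
    return [
        rid
        for rid in invited
        if rid not in responded                          # already took the survey
        and rid not in opted_out                         # said they are not interested
        and reminders_sent.get(rid, 0) < max_reminders   # cap on nudges
    ]


print(reminder_list(
    invited=["a", "b", "c", "d"],
    responded={"a"},
    opted_out={"b"},
    reminders_sent={"c": 2, "d": 1},
))
# ['d']
```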

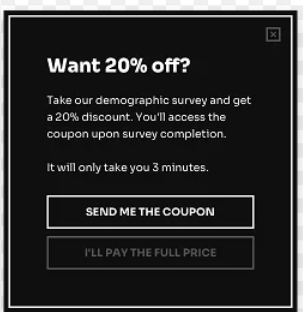

25. Unclear and Vague Incentives

Incentives play a big part in convincing someone to take some time and answer a survey. Of course, you can’t expect people to just share their feedback out of kindness; they need to know that they’ll get something in return for their effort.

Example: Some companies send their surveys via emails with a very catchy subject line announcing “A surprise” awaiting them once they take the survey.

Such ambiguous incentives only make participants skeptical of the survey, and they end up ignoring it.

How to avoid: Always specify whatever incentives you have prepared for the survey takers, be it discounts, gift cards, coupons, or anything else.

If you are upfront with your offerings, participants will be more attracted to take the survey as they already know what they’ll get in return. It will improve the participant/customer engagement as well.

You can add the discount information to your survey introduction or headline. For example: “Take our 1-minute demographic survey to get 20% off your next order.”

26. Implementation Errors

The whole purpose of creating surveys and collecting feedback is to use those valuable insights to develop and improve your products and services.

Not doing so is like sitting on a pile of gold and still counting pennies.

All those hours you spent creating a flawless survey and collecting customer feedback will be wasted if you don’t act on the data you get.

But first, you need to know how to derive actionable insights from the data. For this, you can use advanced data analysis methods like Sentiment Analysis.

Sentiment analysis processes your survey responses, highlights the most frequently used words, and identifies the emotions they express toward your brand.
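Production sentiment analysis relies on trained models or large curated lexicons, but a toy version shows the two ideas involved: counting the most used words and scoring the emotion they carry. The tiny word lists in this Python sketch are made up for illustration only.

```python
from __future__ import annotations

import re
from collections import Counter

# Toy lexicons for illustration only; real tools use far richer models.
POSITIVE = {"love", "great", "easy", "fast", "helpful"}
NEGATIVE = {"slow", "confusing", "broken", "hate", "expensive"}
STOPWORDS = {"the", "a", "an", "is", "it", "and", "to", "was", "of", "i", "but", "too"}


def words(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def top_words(responses: list[str], n: int = 5) -> list[tuple[str, int]]:
    """Most frequent non-stopwords across all responses."""
    counts = Counter(w for r in responses for w in words(r) if w not in STOPWORDS)
    return counts.most_common(n)


def sentiment_score(response: str) -> int:
    """+1 for each positive word, -1 for each negative word."""
    return sum((w in POSITIVE) - (w in NEGATIVE) for w in words(response))


responses = ["Slow checkout", "Checkout is slow", "Great app"]
print(top_words(responses, n=2))
print(sentiment_score("Checkout is slow and confusing"))  # -2
```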

You can also get customer insights from Net Promoter Score reports that give you a detailed view of your feedback data.
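The Net Promoter Score itself is simple arithmetic on answers to a 0-10 “How likely are you to recommend us?” question: the percentage of promoters (9-10) minus the percentage of detractors (0-6). A minimal Python sketch of the calculation:

```python
from __future__ import annotations


def net_promoter_score(scores: list[int]) -> float:
    """NPS from 0-10 likelihood-to-recommend answers, on a -100 to 100 scale."""
    if not scores:
        raise ValueError("no responses")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("NPS answers must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)   # 9-10
    detractors = sum(1 for s in scores if s <= 6)  # 0-6
    # Passives (7-8) are not subtracted but still count toward the total.
    return 100 * (promoters - detractors) / len(scores)


print(net_promoter_score([10, 10, 9, 7]))  # 75.0
```

Because passives count toward the total without adding to either group, more passive responses pull the score toward zero.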

Ways to Test and Resolve Survey Errors

Testing is a sure-fire way to avoid launching a survey riddled with errors, at least those related to marketing and questions.

So, here are a few things you need to keep in mind while testing your surveys for quality assurance.

Review Your Survey Design Before Publishing

Once you are done with your survey, review it thoroughly for errors.

These errors can be anything from measurement instrument or question type errors, such as mixing up qualitative and quantitative research, to choosing open-ended and closed-ended questions incorrectly.

Since surveys are not a one-time affair, making the survey review process a part of your campaigns is imperative. For this, you can A/B test your surveys to see which versions perform better and then launch the better version.
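To judge whether one version really outperforms the other rather than winning by chance, you can compare completion rates with a two-proportion z-test. This Python sketch uses the normal approximation, which assumes reasonably large samples; the counts in the example are invented.

```python
from __future__ import annotations

import math


def completion_rate_ztest(done_a: int, shown_a: int,
                          done_b: int, shown_b: int) -> tuple[float, float]:
    """Two-proportion z-test on the completion rates of survey versions A and B.

    Returns (z, two-sided p-value) using the normal approximation.
    """
    p_a, p_b = done_a / shown_a, done_b / shown_b
    pooled = (done_a + done_b) / (shown_a + shown_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / shown_a + 1 / shown_b))
    if se == 0:
        raise ValueError("all or none completed; the test is undefined")
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


z, p = completion_rate_ztest(120, 400, 90, 400)  # A: 30% completed, B: 22.5%
print(f"z = {z:.2f}, p = {p:.3f}")
```

Here z comes out a little above 2.4 and p well below 0.05, so version A’s higher completion rate is unlikely to be a fluke.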

You can also test surveys on a live website to a sample audience with whitelisting and cookie targeting.

Optimize Surveys and the Process

Not just your surveys, but the entire data collection process should be user-centric and friendly.

For example, remove any redundant questions or steps involved in the survey to avoid survey fatigue and lower the dropout rate.

Implement Guerrilla Testing

Guerrilla testing is when you ask a small group of people who represent your target audience to take your survey and share their experience.

You can ask some in-context questions like the time taken to complete the survey, what they think about your questions and their choices, if the language is simple to understand, etc.

[Read more: A Beginner’s Guide to Guerrilla User Research]

Run Response Data Tests

Of course, you want to store all the survey responses on your preferred platform. Submit a test response to make sure responses are being saved correctly on the cloud platform of your choice.

For this purpose, choose a survey tool like Qualaroo that allows you to store your responses on the cloud. Another option is to integrate the survey tool with any third-party platform.

For example, Qualaroo offers integration with Zapier that allows you to integrate with different apps for different purposes.

FREE. All Features. FOREVER!

Try our Forever FREE account with all premium features!

Start Creating Flawless Surveys with Qualaroo

Now that you know what errors you need to avoid in surveys, it’s time to show your creativity and design your surveys to collect reliable, in-context feedback with Qualaroo.

This customer experience and feedback survey platform allows you to create surveys from scratch and even offers survey templates to collect valuable feedback in minutes.

While you create surveys, the tool helps you avoid design errors by letting you preview each question in real time.

It also helps you target the right audience at the right time with its advanced targeting, so you don’t make some of the data collection and sampling errors mentioned above.
