A mobile app survey is a short, in-product questionnaire triggered by user behavior inside your iOS or Android app. It appears right when users experience something, not hours later in their inbox when the memory has already faded.

In my experience working on mobile products, most apps silently bleed users. There’s no complaint, no angry review, no farewell message. Users hit something confusing, lose confidence, and quietly stop coming back. Your analytics can show the drop-off points, but they rarely show the reason behind them.

That is exactly what a mobile app survey is built to catch. Friction, confusion, and unmet expectations can surface while users are still inside the product, when there is still time to understand and fix the issue.

In this guide, I’ll walk you through how to build, trigger, and analyze mobile app surveys that actually move the needle.

Why Is Your Current Feedback Program Making Your Retention Problem Worse?

Here is a scenario that plays out across product teams every week. I have seen it more times than I can count.

A user hits a confusing screen, closes your app in frustration, and moves on.

Six hours later, an email survey lands in their inbox. If they open it at all, the frustration is gone, the context is gone, and you get a vague “it was fine.” More likely, you get nothing.

You have now spent time and budget on data that will not help you fix a single thing. And the user? They are already drifting.

Every day you run feedback programs on stale data is a day you are making product decisions without knowing what is actually broken. That compounds fast.

Here is what the gap looks like across channels:

- Email surveys sent after the session yield emotionally detached responses. The experience is no longer fresh, so the feedback is no longer useful.

- Exit-intent triggers rely on cursor movement toward the browser tab. On mobile, that signal does not exist.

- Generic overlay-style surveys that look like third-party tools break immersion and signal to users they are being tracked, not heard.

The cost here is not just low response rates. It is product decisions made on the wrong data, friction that never gets fixed, and users who churn without ever telling you why.

How Do You Create a Mobile App Survey That Users Actually Complete?

The biggest mistake teams make is treating a mobile survey like a web form shrunk to a smaller screen. The interaction model is completely different. If you design for desktop and hope for the best on mobile, you will lose most respondents before they finish.

Before getting into the steps, a quick note on tools. I’m using Qualaroo as the reference here because it lets you trigger contextual surveys and launch or adjust them without waiting for a full app release cycle. That makes it practical for running feedback experiments quickly instead of planning them months ahead.

Here is how I approach building a mobile survey that people actually complete.

1. Start with the decision, not the question. What will actually change in the product based on what users tell you? If you cannot answer that clearly, do not send the survey yet. Surveys without a decision behind them are just noise.

2. Match the trigger to a specific moment. Task completion, first-time feature use, a frustration signal, or a session milestone. The trigger is what makes the feedback contextual and worth reading.

3. Pick one metric and stick to it. Use CSAT for interaction-level satisfaction, NPS for long-term loyalty, and CES for effort and friction. Mixing metrics in a single survey gives you data that is difficult to act on.

4. Write one to three questions, maximum. Every additional question lowers your response rate on mobile. If you need more feedback, run a second survey at a different trigger point.

5. Design for the thumb, not the cursor. Tap-friendly answers, clean layouts, and UI that matches your app. This is not optional polish. It is often the difference between a 35% response rate and a single-digit one.

Once you have the survey idea and trigger defined, creating it in Qualaroo is straightforward.

Step 1: Click Create a Nudge in the Dashboard

From your Qualaroo dashboard, click Create a Nudge in the top-right corner to start building a new survey.

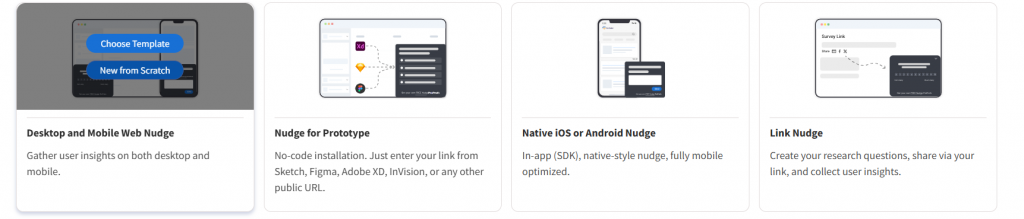

Step 2: Select Desktop and Mobile Web Nudge

Inside the dashboard, choose Desktop and Mobile Web Nudge. Then select Create From Scratch if you want to build a survey without using a template.

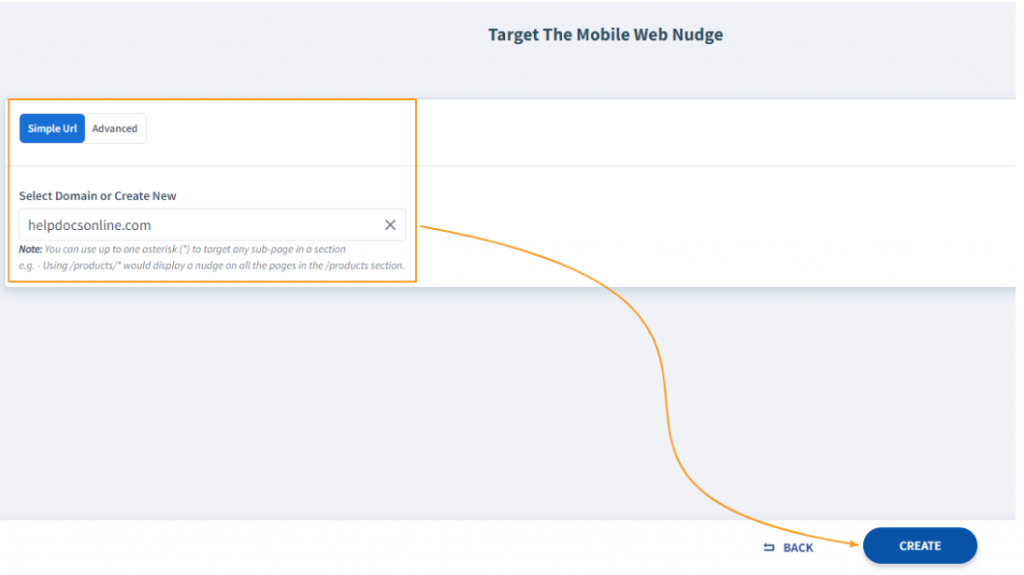

Step 3: Enter Your Domain and Create the Survey

Add the domain where the survey will appear and click Create at the bottom of the screen to open the survey editor.

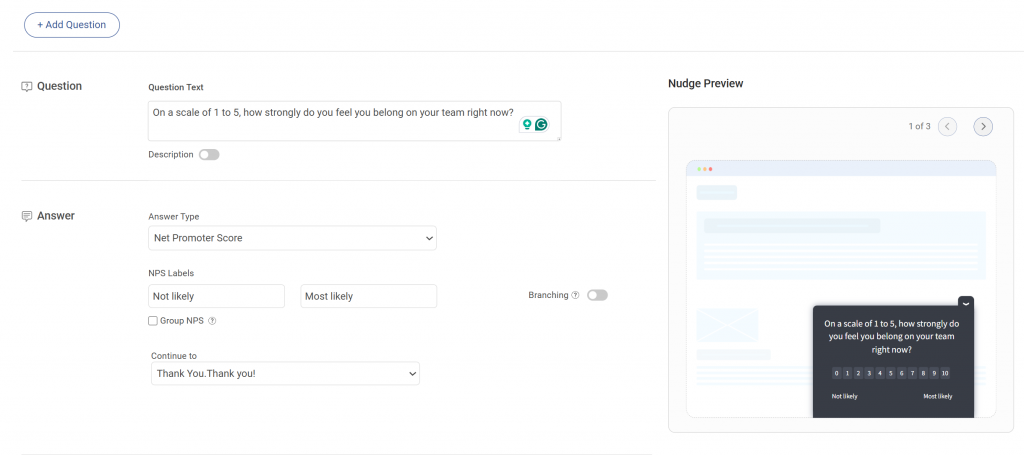

Step 4: Add Your Question and Choose the Answer Type

Enter your survey question and select the appropriate response format, such as multiple choice, rating scale, or text input.

You can also adjust additional settings, including:

- Randomizing answer order

- Adding an image to the question

- Marking a question as required

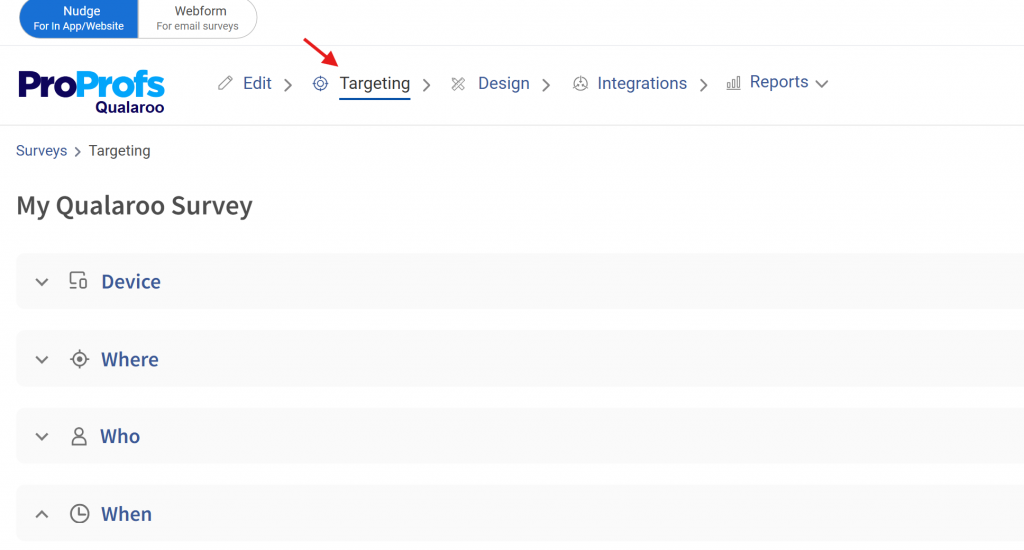

Step 5: Open the Targeting Tab to Set Triggers

Navigate to the Targeting tab to define when and how the survey appears. This is where you configure behavioral triggers and targeting conditions.

You can keep Qualaroo branding or upload your own logo to match your product interface.

A live preview appears on the right side of the editor, showing updates in real time as you customize the survey.

Quick Rule on Length: A survey longer than three questions on mobile loses most respondents before the final question. The instinct to ask more while you have someone’s attention almost always backfires. One focused question beats five scattered ones every time.

One Methodology Detail Worth Being Intentional About:

- Sample users across session cohorts, not just brand-new users or power users in isolation.

- Set a minimum session threshold (typically three or more) before triggering the survey.

- Limit surveys to once per session to protect the user experience.

This keeps your feedback grounded in real product usage rather than first impressions alone.
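
To make the first two rules concrete, here is a minimal Python sketch of cohort-balanced sampling. Everything in it is an assumption for illustration: the cohort boundaries, the 20% sample rate, and the names `cohort_for` and `in_sample` are mine and are not part of any Qualaroo SDK. The once-per-session limit would live in your session state and is not shown here.

```python
import hashlib
from typing import Optional

MIN_SESSIONS = 3  # minimum session threshold before a user can be surveyed

# Hypothetical cohort boundaries by lifetime session count (low, high inclusive).
COHORTS = [("early", 3, 9), ("regular", 10, 49), ("power", 50, None)]


def cohort_for(session_count: int) -> Optional[str]:
    """Return the cohort name, or None if the user is below the threshold."""
    if session_count < MIN_SESSIONS:
        return None
    for name, low, high in COHORTS:
        if session_count >= low and (high is None or session_count <= high):
            return name
    return None


def in_sample(user_id: str, session_count: int, sample_rate: float = 0.2) -> bool:
    """Pick roughly the same fraction of users from every cohort."""
    cohort = cohort_for(session_count)
    if cohort is None:
        return False
    # Hash cohort + user id so the decision is stable across sessions.
    digest = hashlib.sha256(f"{cohort}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # value in [0, 1)
    return bucket < sample_rate
```

Hashing instead of calling a random number generator means the same user gets the same answer every session, so nobody drifts in and out of the sample between launches.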

What Are the Best Triggers for a Mobile App Survey?

Timing is where most mobile feedback programs either win or waste all their effort. A well-timed survey feels like a natural part of the experience. A poorly timed one feels like a pop-up ad. In my experience, getting the trigger right matters more than getting the question perfect.

Here is a practical trigger framework with example questions for each moment:

| Trigger | When to Fire | Example Question |
|---|---|---|
| After Task Completion | User finishes onboarding, checkout, or a setup flow | "How easy was that to complete?" |
| After Feature Use | First-time use of a specific feature | "Did this do what you expected?" |
| After Frustration Signals | Rapid taps, repeated backtracking, error screens | "Did something go wrong? Tell us." |
| After Session Milestones | 10th login, 30-day mark, first paid action | "What keeps you coming back?" |
| After Support Interaction | Ticket resolved, chat ended | "Was your issue fully resolved?" |
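
Of the triggers in this table, frustration signals are the hardest to pin down in code. One way to approximate "rapid taps" is to count taps that land close together within a short time window. The Python sketch below shows the idea. The class name `RageTapDetector` and the thresholds (four taps, 1.5 seconds, 40 pixels) are assumptions I picked for illustration, not values from any SDK, and you would tune them against your own session data.

```python
from collections import deque


class RageTapDetector:
    """Flags a burst of taps near the same spot within a short window."""

    def __init__(self, taps_needed: int = 4, window_seconds: float = 1.5,
                 radius_px: float = 40.0):
        self.taps_needed = taps_needed
        self.window_seconds = window_seconds
        self.radius_px = radius_px
        self._taps = deque()  # (x, y, timestamp) tuples, oldest first

    def record_tap(self, x: float, y: float, timestamp: float) -> bool:
        """Store a tap and return True if it completes a rapid-tap burst."""
        # Drop taps that have aged out of the time window.
        while self._taps and timestamp - self._taps[0][2] > self.window_seconds:
            self._taps.popleft()
        self._taps.append((x, y, timestamp))
        # Count remaining taps that landed within radius_px of this one.
        nearby = sum(
            1 for (tx, ty, _) in self._taps
            if (tx - x) ** 2 + (ty - y) ** 2 <= self.radius_px ** 2
        )
        return nearby >= self.taps_needed
```

In practice you would feed this from your touch handler and queue the survey for the next calm moment rather than showing it mid-burst.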

Never fire a survey in these situations:

- Right after a crash

- During a critical workflow the user is actively trying to complete

- Before the user has had at least a few sessions to actually use the product

- More than once in the same session
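
These four rules translate naturally into a single guard that runs before any trigger fires. Here is a rough Python sketch, assuming your app already tracks the fields in `SessionState`. That class, the `may_show_survey` function, and the threshold of three sessions are hypothetical names and values for illustration only.

```python
from dataclasses import dataclass

MIN_SESSIONS = 3  # assumed value for "at least a few sessions"


@dataclass
class SessionState:
    lifetime_sessions: int           # sessions this user has had so far
    crashed_recently: bool           # app crashed just before this moment
    in_critical_workflow: bool       # e.g. user is mid-checkout
    surveys_shown_this_session: int  # surveys already displayed this session


def may_show_survey(state: SessionState) -> bool:
    """Return False if any of the never-fire rules applies."""
    if state.crashed_recently:
        return False
    if state.in_critical_workflow:
        return False
    if state.lifetime_sessions < MIN_SESSIONS:
        return False
    if state.surveys_shown_this_session >= 1:
        return False
    return True
```

Keeping the rules in one function makes it harder for a new trigger to bypass them by accident.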

“The single biggest improvement we made to our feedback program was stopping surveys during active tasks. Response quality went up immediately because users were no longer annoyed at being interrupted.”

This is what UX researchers report consistently. Context and timing are not optimizations. They are the core mechanism that makes in-app feedback work at all.

One Operational Detail Most Guides Skip: If your team needs to adjust triggers or test new survey logic without waiting for an App Store or Google Play review cycle, use feature flagging. It lets you roll back a problematic survey or try a new trigger in real time. For mobile teams running active feedback campaigns, this is not a technical luxury. It is a practical necessity.
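
As a sketch of what that can look like, the Python below parses a survey configuration delivered as a JSON string by whatever feature-flag or remote-config service you use. The payload shape, the key names, and the `load_survey_config` function are all assumptions of mine. The one design choice worth copying is the fallback: if the payload is missing or malformed, surveys stay off.

```python
import json
from typing import Optional

# Safe defaults: surveys are disabled unless the remote flag says otherwise.
DEFAULT_CONFIG = {"enabled": False, "trigger": None, "min_sessions": 3}


def load_survey_config(raw_flag_payload: Optional[str]) -> dict:
    """Parse a remote flag payload, falling back to defaults on bad input."""
    if not raw_flag_payload:
        return dict(DEFAULT_CONFIG)
    try:
        parsed = json.loads(raw_flag_payload)
    except json.JSONDecodeError:
        return dict(DEFAULT_CONFIG)
    if not isinstance(parsed, dict):
        return dict(DEFAULT_CONFIG)
    config = dict(DEFAULT_CONFIG)
    # Copy only the keys we know about; ignore anything unexpected.
    config.update({key: parsed[key] for key in DEFAULT_CONFIG if key in parsed})
    return config
```

Rolling back a problematic survey then means flipping `enabled` to `false` on the server, with no new build required.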

What Questions Should You Include in a Mobile App Survey?

The right question depends entirely on what decision you are trying to make. I find it easiest to pull directly from a categorized bank rather than writing from scratch every time. Use what fits your current goal.

What Mobile Survey Questions Measure Satisfaction? (CSAT)

Use these after a specific interaction, feature update, or support touchpoint.

- How would you rate your experience with this feature today?

- Did the app perform the way you expected?

- How satisfied are you with how quickly this loaded?

- Was this interaction easy or difficult?

- How would you rate the overall quality of this experience?

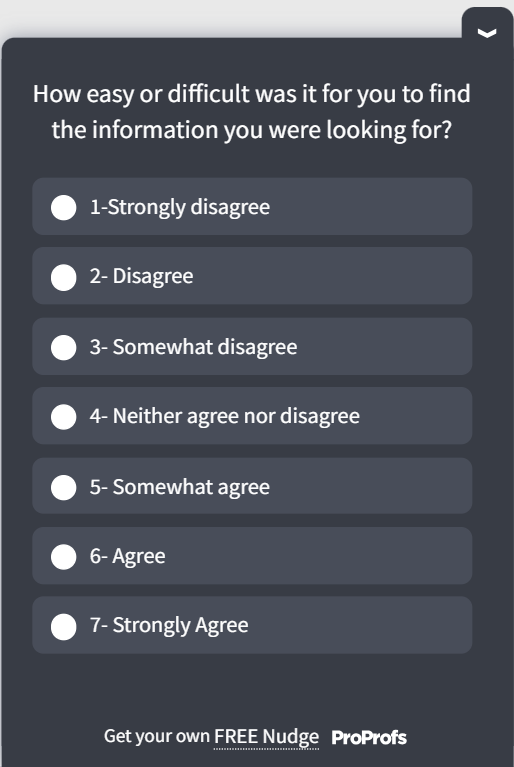

You can tweak and use this CSAT mobile app survey template:

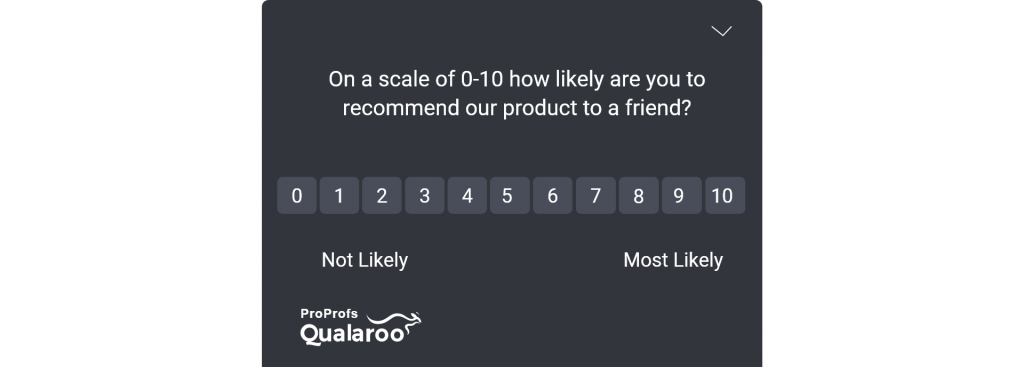

What Mobile App Survey Questions Measure Loyalty? (NPS)

Use these for quarterly pulse checks or after a significant product change.

- How likely are you to recommend this app to a friend or colleague? (0-10)

- What is the main reason for your score?

- What would make you more likely to recommend us?

- How does this app compare to what you used before?
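
The follow-up you show usually depends on the score. Here is a small Python sketch of that branching, using the standard NPS buckets (0-6 detractor, 7-8 passive, 9-10 promoter). Which follow-up goes to which bucket is my own assumption for illustration, and the function names are hypothetical.

```python
def nps_bucket(score: int) -> str:
    """Classify a 0-10 recommendation score into the standard NPS buckets."""
    if not 0 <= score <= 10:
        raise ValueError("NPS scores must be between 0 and 10")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


# Assumed mapping from bucket to follow-up question; adjust to your own goals.
FOLLOW_UPS = {
    "promoter": "What is the main reason for your score?",
    "passive": "What would make you more likely to recommend us?",
    "detractor": "What would make you more likely to recommend us?",
}


def follow_up_for(score: int) -> str:
    """Pick the follow-up question to show after the 0-10 rating."""
    return FOLLOW_UPS[nps_bucket(score)]
```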

You can tweak and use this NPS mobile app survey template:

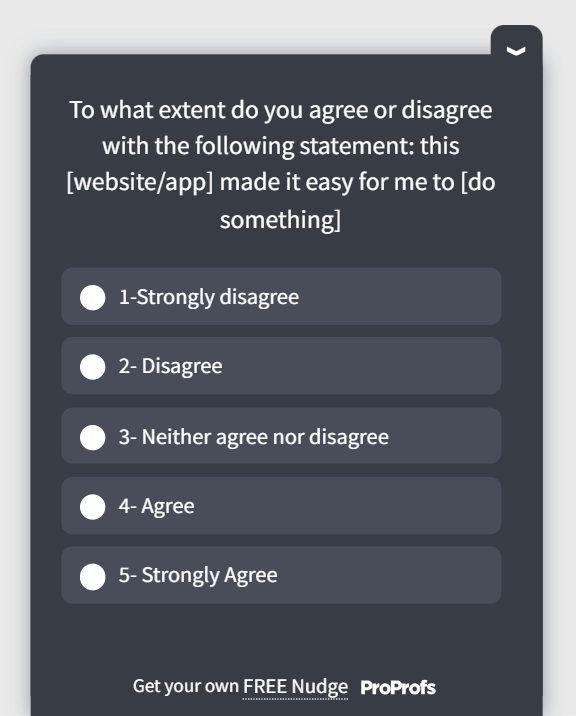

What Questions Measure Usability and Effort? (CES + UX)

Use these to find friction in specific workflows before it turns into churn.

- How easy was it to find what you were looking for?

- Was anything confusing or unclear during this step?

- Were the labels and buttons easy to understand?

- Did you experience any technical issues?

- On a scale of 1-5, how intuitive was this flow?

- How many steps did it take to complete what you came to do?

You can tweak and use this CES mobile app survey template:

What Questions Uncover Feature Gaps and Early Churn Signals?

Use these when you need product direction or want to catch at-risk users before they go quiet.

- Is there anything missing that would make this feature more useful?

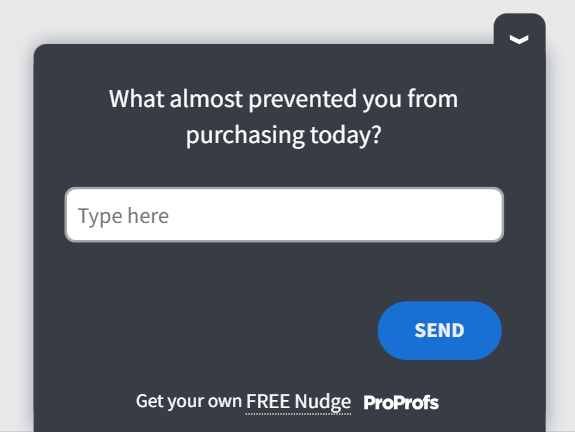

- What almost stopped you from completing this action today?

- How would you feel if you could no longer use this app?

- What is the one thing you wish this app could do?

- Is there anything that would make you stop using the app?

You can tweak and use this exit-intent mobile app survey template:

If Your App Is Used Out of Necessity: For banking, logistics, tax filing, or anything users open because they have to, loyalty questions are the wrong instrument.

You will get more useful signals from transaction success rate, error frequency, and time-to-complete a workflow.

Asking a banking user how likely they are to recommend the app tells you almost nothing. Asking whether their last transaction worked smoothly tells you everything.

What Mobile Survey UX Principles Actually Protect Your Response Rate?

You can have the right question at exactly the right moment and still lose respondents if the survey itself is a pain to interact with. This is where a lot of teams quietly give back the response rate they worked to earn. These four principles are the ones I keep coming back to.

4. Clear Progress Indicators

If your survey has more than one question, users need to see how close they are to the end.

- Show “Question 1 of 2” or a simple progress bar for any multi-question survey

- Single-question surveys do not need one

- A five-question survey without a progress indicator will lose a meaningful share of respondents at question three

Design Is a Conversion Lever: A survey that looks and feels like your app earns trust. Trust earns responses. Responses earn product decisions you can actually defend. Treat your survey UX the same way you treat your product UX, because it is part of the product.

How Do Mobile App Surveys Recover the Revenue You Are Already Losing to Silent Churn?

Your analytics tell you where users drop off. What they cannot tell you is why. That gap is exactly where most retention programs stall out, and it is the part I see product teams struggle with most often.

When a user abandons onboarding at step three, your funnel data flags the exit. But a well-placed survey at that moment can ask a simple question like, “Was anything unclear here?” and reveal the real reason.

That single answer is often more valuable than the drop-off percentage itself because it tells you what to fix.

What This Looks Like in Practice

A good real-world example of this comes from Twilio.

After acquiring SendGrid, the team realized they were missing behavioral information about certain customer segments. Their existing systems showed product activity, but they lacked context about how customers were actually using features and what their intent was when visiting certain pages.

Getting that information through internal databases would have required developer time and backend changes that the team didn’t have available.

So instead of building new tracking infrastructure, they added targeted in-app surveys using Qualaroo.

These surveys appeared at specific moments inside the product and asked simple behavioral questions such as:

- How customers were using particular features

- Their intent when visiting a page

- Whether they were accessing the product from mobile or desktop

Because the surveys were triggered in context, the responses reflected what users were actually doing in the product at that moment.

What the Team Learned

The surveys quickly surfaced insights that analytics alone couldn’t provide:

- How different customer segments actually used Twilio products

- Why users visited certain pages

- How customers were accessing the platform across devices

This behavioral feedback helped the team fill critical data gaps without needing new engineering work.

The Result

With real-time feedback from targeted surveys, Twilio could:

- Make more informed product and marketing decisions

- Better understand customer intent

- Reach users outside their existing participant database

- Share clear insights with internal stakeholders using built-in analytics

Why This Matters

The important point here is not the tool. It’s the method.

Analytics showed Twilio what users were doing, and the surveys revealed why.

That difference is what turns raw behavioral data into something a product team can actually act on.

Without that context, teams often end up watching the same drop-off points week after week while their dashboards keep reporting the same numbers.

A short, well-timed survey closes that gap.

What Mobile App Survey Software Should You Use to Make This Work?

The mobile app survey tool you choose shapes how fast your feedback loop actually runs. Pick the wrong one, and you will spend more time managing the platform than acting on the data it collects. I have watched teams do exactly this, and it kills the program faster than any other mistake.

The wrong tool usually shows up in a few predictable ways:

- An SDK that inflates your app’s binary size, slowing down builds and deployments.

- A platform that requires a developer every time you want to adjust a targeting rule or launch a new survey.

- A tool that logs IP addresses by default, creating GDPR exposure that your legal team will not enjoy discovering later.

How Do I Evaluate Mobile Survey Software?

I run any mobile app survey platform through this checklist before committing:

| Evaluation Criteria | Good Answer | Red Flag |
|---|---|---|
| Modular SDK (tree-shaking) | Components are independently importable; unused code excluded → minimal bundle size | Single monolithic package; no mention of modular loading |
| No-code management | Non-technical teams can build, edit, and retarget surveys via dashboard | Requires developer help for routine changes (e.g., editing questions, triggers) |
| Advanced targeting | Native support for session count, login status, device, and geo | Limited to page/URL targeting; no behavioral or user-level conditions |
| Cross-channel surveys | iOS, Android, web, and email managed in one dashboard with unified reporting | Separate tools/accounts for channels or no native email support |
| Branching logic | Conditional follow-ups based on responses (e.g., Promoter vs Detractor) | Only basic skip logic or requires manual segmentation post-response |
| Anonymous + GDPR-ready | Anonymous mode + IP logging can be disabled out of the box | IP collection default with no self-serve control or buried in fine print |
| Integrations | Native integrations (e.g., analytics, Slack, BI) without middleware | Limited to Zapier or requires custom API work for key tools |
| Text analysis | AI clusters themes and sentiment automatically | Raw exports only; requires manual tagging or external tools |

On GDPR: If your app has any European users, confirm that your survey tool supports anonymous response collection with no IP address storage. Verify this in the technical documentation, not the marketing page. Some tools log IP addresses by default and require a support request to change that behavior. Find out before you are mid-campaign.

On SDK Weight: Every SDK you add increases your app’s binary size and can slow your builds. Prefer modular SDKs where you load only what you use. Pair this with feature flagging so you can adjust or roll back surveys without waiting through an app store review cycle.

Why Do Product Teams & I Choose Qualaroo for Mobile App Surveys?

Qualaroo is built around the model mobile product teams actually need: a developer handles the SDK integration once, and from that point forward, researchers, product managers, and CX leads run every campaign themselves without touching code.

That matters because the biggest reason feedback programs stall out is not budget or intent. It is the dependency on engineering time for every single change. Qualaroo removes that bottleneck.

Here is what the platform delivers in practice:

1. Multi-Channel Deployment: Run surveys inside the app, via email, and on the web from one platform. No separate setups or duplicate reporting across surfaces.

2. Advanced Targeting: Segment by session count, login status, geography, device type, or any user attribute. Surveys reach the users who can actually answer the question meaningfully.

3. Branching Logic: NPS Promoters and Detractors automatically see different follow-up questions. No manual routing after the fact.

4. AI Sentiment Analysis: Open-text responses are automatically analyzed using Qualaroo’s native Natural Language Understanding engine. Responses are clustered into themes, and sentiment is scored as positive, neutral, or negative so your team can see the emotional tone across responses, not just the words. The Word Cloud feature visualizes the most frequently used terms from open-text answers.

5. GDPR-Compliant Configuration: Anonymous response collection with no IP logging is available out of the box, making it viable for European markets and privacy-sensitive products.

6. Integrations: Connects natively with Slack, Mixpanel, Power BI, and Google Analytics so feedback flows directly into the tools your team already uses to make decisions.

Qualaroo gives product teams a direct line from what users experience to what gets built next, without the setup overhead that makes most feedback programs stall after the first campaign.

How Do You Stop Losing Users to Problems You Have Not Found Yet?

If your feedback process still relies on email surveys or post-session forms, you are working with a lag. Problems surface weeks after they start costing you your users. By the time you see the pattern in your data, the damage is already done. I have seen this play out too many times for it to still feel surprising.

Mobile app surveys close that lag entirely. They surface friction, confusion, and unmet expectations at the exact moment of experience, while users are still in your product, and the insight is still actionable.

The payoff from fixing even one friction point compounds fast. It can be one improvement to an onboarding step, one clarified label on a confusing screen, or one reassuring line of copy before a permissions prompt.

Each change reduces churn, increases activation, and improves lifetime value for every user who ever hits that screen again.

You only find those moments by asking at the right time.

Start with one trigger on your highest drop-off screen. Write one question. Launch it through Qualaroo and see what your users are actually trying to tell you. That first answer will be worth more than months of guessing from analytics alone.

Frequently Asked Questions

How long should a mobile app survey be?

One to three questions is the practical ceiling on mobile. Single-question surveys perform best for transactional feedback. Save multi-question formats for post-session moments when users are less time-pressed.

What is the difference between CSAT, NPS, and CES in mobile surveys?

CSAT measures satisfaction with a specific interaction. NPS measures long-term loyalty and likelihood to recommend. CES measures how much effort a task requires. Use CSAT for feature feedback, NPS for pulse checks, and CES for finding friction in key workflows.

How do I make my mobile app survey GDPR compliant?

Use a tool that supports anonymous response collection with no IP address logging. Verify this in the documentation, not the marketing page. Some tools log IP addresses by default and require a support request to turn that logging off.

What is the difference between a mobile app survey and a web survey?

Mobile app surveys are embedded in native iOS or Android apps and triggered by in-app behavior. Web surveys run in browsers and use different trigger mechanisms like exit intent or time-on-page. Mobile surveys need SDK integration and should be designed specifically for small screens and one-handed use.

How do I analyze mobile app survey responses?

Track CSAT, NPS, and CES scores over time to build benchmarks. Use AI sentiment clustering to group open-text responses by theme. Segment by cohort to compare new vs. returning users, free vs. paid, or by device type.
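
If you export raw responses, the scores are simple to compute yourself. The Python sketch below uses the standard NPS formula (percentage of promoters minus percentage of detractors) and one common CSAT convention (share of 4s and 5s on a 1-5 scale). The CSAT cutoff and all function names are assumptions for illustration, and your survey tool may define CSAT differently.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def nps(scores: List[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one score")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


def csat(ratings: List[int]) -> float:
    """CSAT as the share of 4-5 ratings on a 1-5 scale (assumed convention)."""
    if not ratings:
        raise ValueError("need at least one rating")
    satisfied = sum(1 for r in ratings if r >= 4)
    return 100.0 * satisfied / len(ratings)


def nps_by_cohort(responses: List[Tuple[str, int]]) -> Dict[str, float]:
    """Group (cohort, score) pairs and compute NPS for each cohort."""
    grouped = defaultdict(list)
    for cohort, score in responses:
        grouped[cohort].append(score)
    return {cohort: nps(scores) for cohort, scores in grouped.items()}
```

For example, `nps([10, 9, 8, 6])` gives 25.0: two promoters and one detractor out of four responses.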

How do I avoid annoying users with in-app surveys?

Cap surveys at once per session. Set a minimum session count before triggering. Never interrupt a critical workflow or show a survey right after an error. Use frequency caps across campaigns so the same user is not hit repeatedly in a short window.
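
A cross-campaign frequency cap can be as simple as checking when the user last saw any survey. Here is a minimal Python sketch, assuming you store the Unix timestamps at which surveys were shown to a user. The seven-day window, the limit of one, and the function name are my own placeholder choices.

```python
from typing import List

SEVEN_DAYS = 7 * 24 * 3600  # assumed cooldown window, in seconds


def under_frequency_cap(shown_at: List[float], now: float,
                        window_seconds: float = SEVEN_DAYS,
                        max_in_window: int = 1) -> bool:
    """Return True if the user has seen fewer than max_in_window surveys
    (from any campaign) during the last window_seconds."""
    recent = [t for t in shown_at if 0 <= now - t < window_seconds]
    return len(recent) < max_in_window
```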

Are there free tools for running mobile app surveys?

Some platforms offer limited free tiers. Qualaroo has a free plan, so you can run your first in-app campaign before committing to a paid tier. The more important question is whether the free tier includes SDK integration and behavioral targeting, because without both, in-app surveys on mobile do not work properly.
