Introduction to User Feedback

Whether you are developing a new product or have been selling the same one for years, you need user feedback.

User feedback is foundational to understanding and optimizing the user experience, which strongly affects your relationship with customers, your long-term viability as a company, and your bottom line.

While collecting user feedback may not be new, the channels available for collecting it have certainly expanded.

User research is no longer confined to field observation or research labs. Like so much of business, feedback collection has expanded into digital channels.

Whatever kind of digital product you want input on, mastering the art of feedback collection can help your team meet business goals and, ultimately, value your input as a team member.

"User feedback in customer-centric companies is the fuel that drives every internal working part. Every process and every business decision is powered by a deep understanding of who the user is, what it is they're needing to do and ensuring they can do it successfully."

That being said, there are many types of feedback to collect and even more tools you can use to go about collecting it. In this guide, we’ll lay out the importance of collecting feedback and give you guiding questions to help you determine your feedback strategy.

Answer these questions to tackle your collection of user feedback and make a real impact on your user experience and bottom line.

Why Collect Feedback?

Feedback is a crucial part of user experience research; without it, things can easily run amok. Here are just a few of the ways collecting feedback can benefit you:

The most important benefit of collecting feedback is understanding your users. Although you can probably think of shiny new things to add to your product or service all the time, you are not your user. To build something that is actually valuable to your audience, you need to understand them.

Early in product development, understanding your users means having a sense of their needs, fears, limitations, motivations, and more.

All of this information should help you piece together an image of how they usually deal with the problem you want to solve for them. Of course, this requires speaking with your users and keeping what they actually value in mind.

"High-quality user research should inspire great designs. It gives us confidence that we're building (and have built) the right things at the right times and in the right way. It sets us on the right path while saving our engineering organization time and money."

Given how time-consuming and expensive developing a product or feature can be, the real benefit of user feedback is that it saves you time and money down the line, because development decisions rest on data rather than guesswork. Getting your product right also makes for happy customers, which can significantly increase their lifetime value.

No long-standing product has ever stayed exactly the same over the years. Real innovation comes from constant iteration. Whether that’s perfecting your packaging or finding the best way to describe your product, user insights are essential to that process.

You could argue that user feedback is more important today than it has ever been because of the pace of technological change. Every innovation that makes waves changes how we go about our daily lives.

It reshapes how we process information, how we manipulate objects, how we use software, and what we expect from a product experience. UX itself has had to adapt to this pace, adopting approaches like Lean UX, which builds on agile methodology and assumes a product will be continuously iterated on.

As you iterate, expand your understanding of your users beyond their motivations and needs to their behavior. Seek to understand how they use your product: what’s easy for them, and what’s not? This information will help you improve user flows and potentially even inspire delight!

One of the challenges of being in UX is figuring out how to make your case to the rest of your team. Advocating for the changes you’d like to see in a product becomes much easier when you have real data to back it up. When user insights are collected the right way, your case is made for you. It’s harder for other departments to disregard your input when it is based on actual data and feedback.

"When collected intentionally, rigorously and consistently, user feedback helps marketing teams deliver the right messages to activate the right audiences; helps product teams prioritize and build the right features and products; and helps sales teams build strategies that convert newly inspired users into loyal, paying customers."

- Marketing and sales will be thankful to have customer testimonials to turn into case studies, often cited among the most effective forms of marketing.

- Product will thank you for providing real direction to the feature roadmap and ultimately saving the company time and money.

- Customer success will love that you are demonstrating an interest in and establishing a relationship with users.

- Leadership will appreciate the impact feedback has on the bottom line. Moreover, data-driven insights will improve their ability to make informed decisions quickly and from a high level.

- Developers will finally understand why they’re doing what they’re doing. Drift has cited this customer-driven methodology as part of the reason for its success.

Who should I collect feedback from?

One of the questions we often get is, “Who should I collect feedback from?” Believe it or not, this question can completely trip up teams who are otherwise on track to gather useful user insights.

Teams can get paralyzed and say to themselves, “but I don’t have access to the ideal user group to gather feedback from.” The real secret is that you don’t have to have the perfect or even the typical user to gather meaningful insights.

As usability expert Steve Krug puts it:

“If finding the ideal users means you’re going to do less testing, I recommend a different approach: ‘recruit loosely and grade on a curve.’ In other words, try to find users who reflect your audience, but don’t get hung up about it. Instead, loosen up your requirements and then make allowances for the differences between your participants and your audience.”

In fact, Krug even goes on to say that there are a few valid reasons for intentionally testing with users who probably wouldn’t use your product:

- Experts are also trying to figure things out like the rest of us; they just operate at a higher level.

- It’s better to err on the side of things being more clear than less clear. The odds that anyone will ever complain that your product is too easy to understand are slim to none.

- Even if you think your target audience will be able to use your product, it’s not a good idea to make something only they can understand. Remember this when you feel inclined to require domain-specific knowledge or industry jargon to navigate your product.

"Sometimes users will say misleading things, usually out of fear of saying "the wrong thing." But if you get participants involved in the design process, they then have a sense of ownership. With a sense of ownership comes the confidence to speak more freely and truthfully, and therefore gather more accurate feedback."

When recruiting participants for user feedback, keep in mind how much money you are asking your company to spend or save. Generally speaking, most organizations would appreciate a concerted effort to save money on recruiting research participants.

Some types of research certainly require a specific, skilled group of users, but not every study does. Where possible, recruit from a more accessible pool of participants so you can expand your sample and conduct research more quickly.

This, however, is not to say that you should shun your ideal user. Where possible, recruit users who fit your target profile or who can identify potential pain points and deliver insights you might not otherwise discover.

The more specifically you target the right users in the right use case, the more accurate your feedback will generally be. Of course, targeting participants very specifically can be costly and time-intensive. Keep a close eye on the trade-off in time and energy that comes with finding the “exact” right users.

It’s important to think about the user’s relationship with your product or service as well as their demographics when choosing participants.

If you are working on a redesign or creating new features for a product, you’ll likely get the most benefit out of talking with existing customers or people who have at least some familiarity with your product or service. On the other hand, if you are designing something brand new, you will want to test it with users as close to your ideal profile as possible.

What should I collect feedback about?

There are many different types of feedback, so align your pursuit of feedback with your goals. Do you want to improve your product experience? Make your customers happier? Do you want to check the overall experience or investigate a specific part of your product?

Do you want to find out what people like about your product and why, or what makes it difficult for them to complete their actions? Do you want to ask about features, or investigate whether the flow of specific use cases is smooth? Do you want to check whether the product is usable, or whether it is useful? The goal of your feedback will determine what type of questions you should ask.

Net Promoter Score (NPS) is one of the most common types of feedback. It consists of a simple question: how likely are you to recommend our product to a friend? While the question is simple, it can yield some interesting insights.

People think of things they recommend as an extension of their brand or reputation. If someone recommends a particular product or service, they have to be comfortable with others associating them with it. NPS is essentially a customer satisfaction metric that asks users: are you willing to let others associate this product/company with you?

Although NPS is useful and an industry standard, it isn’t the most sophisticated form of feedback, because without context the score tells you little about what to change. NPS certainly has its place, but we don’t recommend you stop there.
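The arithmetic behind the score is simple. Under the standard NPS convention, answers on a 0 to 10 scale are grouped into promoters (9 to 10), passives (7 to 8), and detractors (0 to 6), and the score is the percentage of promoters minus the percentage of detractors, so it ranges from -100 to 100. A minimal Python sketch of that calculation, using made-up responses:

```python
def net_promoter_score(scores: list[int]) -> float:
    """Return NPS (-100..100) from answers on the conventional 0-10 scale."""
    if not scores:
        raise ValueError("need at least one response")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("scores must be on the 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)   # 9-10
    detractors = sum(1 for s in scores if s <= 6)  # 0-6; 7-8 are passives and only count in the total
    return 100 * (promoters - detractors) / len(scores)

# Made-up answers to "How likely are you to recommend our product to a friend?"
responses = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
print(net_promoter_score(responses))  # prints 10.0 (4 promoters, 3 detractors, 10 answers)
```

Very different response mixes can produce the same score: ten passives and an even split of promoters and detractors both yield 0. That is why a follow-up question about the reason behind a rating is worth adding.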

Asking users to identify roadblocks is another common use of feedback. The goal is, of course, to improve the product experience, and depending on the product, the impact can be major. Think of making it faster and easier to book a doctor’s appointment, upload documents, generate reports, register domains, set up chat sequences, send messages, or build wireframes. There are a few different ways to phrase these questions:

- What prevented you from making a purchase today?

- What other information would you like to see on this page?

- What can I help you accomplish today?

- What, if anything, surprised you while completing this task?

- What was difficult for you, if anything, about using our product today?

If you are looking to improve your conversion rate, ask which competitors your users or site visitors considered. From there, you can ask follow-up questions about why they considered other vendors. This can reveal insights about your audience, such as price sensitivity and deal-breakers.

What type of feedback is helpful may also depend on what phase of the UX process you are in. For example, if you are developing a product from scratch, your research will resemble a discovery phase more than the verification of specific ideas.

In this phase, you are trying to learn users’ mental models related to what you are designing. At the same time, you should explore opinions, beliefs, attitudes, and fears around the subject. This is also essential information for marketing and sales when messaging and promoting the product. However, it is arguably most important for UX professionals because it can truly change the direction of a SaaS product.

On the other hand, if you are working on an existing product and making changes to it, you should tweak your questions accordingly. You are looking for specific ways to improve your product or website but also want to learn about your audience’s relationship with competitors.

At this phase, push to discover why users make certain decisions by seeking in-depth insights about how they use your product. You want to know what strengths to capitalize on and what blind spots you need to address. You’ll want to implement A/B testing at this phase of design as well.


"At Redfin we have an insatiable curiosity for our users and a desire to have the best user experience in our field. We do this by establishing both business metrics and user experience metrics for every feature we build. We ask questions that help us identify, track and improve our user experience metrics."

Benchmarks are also helpful to collect, and your users are one source for them. Once you start testing with users, they will often tell you what your product or service reminds them of.

This information is crucial! Keep track of it as it will begin to paint a picture for you of how your users understand your product as well as what value they see in it. Other areas that you should continuously gather feedback on are mockups, A/B testing, and the existing product.

We’ve summarized some potential questions to ask for feedback at four specific stages: before you start building the product, the prototyping stage (gathering feedback from mockups), A/B testing, and continuous feedback collection from the existing product.

To ground the following examples, we’ll use a fictional e-learning platform called E-Scolere.

Before you start building the product, you might ask questions like these:

- What do you think about studying online?

- What, in your opinion, are the pros and cons of learning online?

- Have you ever participated in any online learning? If yes, what type of online learning did you participate in?

- What was your experience like?

- What topic did you study online?

- Do you think there are domains/topics that would be difficult to learn online?

At the prototyping stage, some questions may closely resemble those you would ask about an existing product. However, keep questions at this stage specific to the aspects of the product or experience you are presenting.

- Where would you click first?

- What challenges did you encounter during the lesson?

- Did anything about the platform surprise you?

- What do you think about the layout of the class?

- What do you think about the navigation? Were you able to find your e-classroom easily?

A/B testing is used to see whether changes to elements such as layout or messaging make a difference in your metrics. Ask the same questions about each version you are testing.

For this example, we are testing two different layouts for E-Scolere lessons.

- How easily were you able to navigate to the lesson?

- Did you have any difficulty streaming the lesson?

- Is there anything you would like to see in the lesson layout?

- Is there anything you liked about the lesson layout? If so, what?

Once the product is up and running, it’s best practice to collect feedback continuously. As your users’ needs, habits, and ways of using digital products change, your product should change with them.

- How difficult was this product to use?

- Did you encounter any challenges when enrolling in the Data Science course?

- I noticed you didn’t use the commenting tool in your classroom. Why is that?

- How would you rate your experience using E-Scolere?

- How likely are you to take a course on another subject with E-Scolere?

- What subjects are you interested in learning more about online?

- How likely are you to recommend E-Scolere to a friend?

When should I collect feedback?

The question of when to collect feedback has a number of implications. There are two angles from which to consider it: the product life cycle and the user journey.

In terms of the product life cycle, we recommend asking during the ideation phase (discovery, before you start building your product), the prototype phase (feedback on mockups so you can improve them), the validation phase (verifying design ideas and A/B testing), and finally the iteration phase (continuously keeping the product usable and delightful).

In terms of the user journey, trigger behaviors are one of the best ways to decide when to ask for feedback. Here are some classic examples of triggers that can warrant questions:

- Time spent on a particular step or page could signify confusion or engagement, depending on the type of content presented. Ask something like, “Did you find everything you were looking for on this page?” or “What drew your attention?” or “Did anything surprise you?” This will give you a sense of how engaged this particular visitor is with your site.

- If a user has returned to your site after abandoning a shopping cart, you’ll definitely want to ask them questions, as they appear to be a high-intent visitor. A question like “Why did you choose not to purchase [item from cart]?” can elicit some interesting insights. Alternatively, you can ask which competitors they are considering or have considered.

- Exit intent is another common trigger. These questions are triggered by actions that suggest a visitor is about to leave your website, such as moving the cursor toward the browser’s close button or address bar. They can range from asking users about their satisfaction levels to asking whether they need help taking the next step toward a conversion goal.
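The triggers above amount to a small set of rules evaluated against what a visitor is doing. Here is a minimal Python sketch under stated assumptions: the `Session` fields, the 60-second dwell threshold, and the order in which rules are checked are hypothetical choices for illustration, not how any particular survey tool works.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical per-visit signals; a real survey tool would derive these from browser events.
@dataclass
class Session:
    seconds_on_page: float
    returned_after_cart_abandon: bool
    abandoned_item: Optional[str]
    exit_intent: bool

DWELL_SECONDS = 60  # assumed "long dwell" threshold; tune per page and content type

def pick_trigger_question(s: Session) -> Optional[str]:
    """Return a question for the first matching trigger, or None to stay quiet."""
    # A returning cart abandoner is high-intent, so this rule is checked first.
    if s.returned_after_cart_abandon and s.abandoned_item:
        return f"Why did you choose not to purchase {s.abandoned_item}?"
    # Exit intent: the visitor appears to be about to leave.
    if s.exit_intent:
        return "Is there anything we can help you with before you go?"
    # Long dwell time can mean confusion or engagement, so ask a neutral question.
    if s.seconds_on_page >= DWELL_SECONDS:
        return "Did you find everything you were looking for on this page?"
    return None

print(pick_trigger_question(Session(75, False, None, False)))
# prints: Did you find everything you were looking for on this page?
```

Checking the cart-abandonment rule first reflects the point above that returning abandoners are high-intent; a different priority order is equally defensible.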

Ask the Right Question at the Right Time

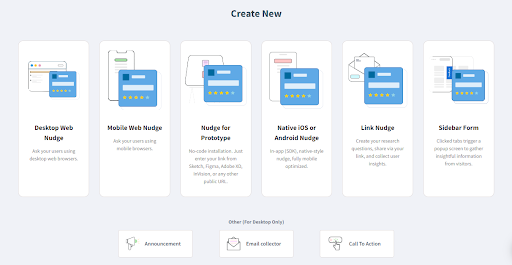

Examples of survey targeting options in Qualaroo:

- Domains

- Subdomains

- Regex matching

- Identities

- All or a portion of audience

- Geography

- And More!

- Duration on page

- Exit intent

- Scroll location

- Display once per visitor

- Until response is received

- Recurring questions

- Custom date range

- Target number of responses

- Manual activation and deactivation

- Find a natural point in the user journey to ask for feedback, one that doesn’t block essential actions.

- For example, you don’t want to block a high-intent user from converting or purchasing by prompting a user for feedback on the shopping cart page. This also applies to other conversion actions, like newsletter signups. Making this mistake will impede business goals, and your team won’t be very happy with you!

- You also don’t want to ask for feedback from users who have just arrived on your site for the first time. At this stage, you won’t have much information about them to begin with, and asking a question too early can be disruptive.
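The targeting options and timing advice above can be combined into a single display check. The following is a minimal Python sketch, not Qualaroo’s API or any vendor’s: the `Visitor` and `SurveyRules` types, the `CONVERSION_PATHS` set, and the “first page of a first visit” rule are hypothetical stand-ins for the frequency caps, response targets, and timing guidance described here.

```python
from dataclasses import dataclass

# Hypothetical pages where a prompt would block a conversion action.
CONVERSION_PATHS = {"/cart", "/checkout", "/newsletter-signup"}

@dataclass
class Visitor:
    is_first_visit: bool
    pages_viewed: int
    times_shown: int
    has_responded: bool

@dataclass
class SurveyRules:
    frequency: str            # "once" (once per visitor) or "until_response"
    target_responses: int     # stop showing once this many responses arrive
    responses_collected: int

def should_display(v: Visitor, path: str, rules: SurveyRules) -> bool:
    """Apply illustrative targeting rules; True means the survey may be shown."""
    if rules.responses_collected >= rules.target_responses:
        return False  # target number of responses reached
    if path in CONVERSION_PATHS:
        return False  # never block a purchase or signup
    if v.is_first_visit and v.pages_viewed <= 1:
        return False  # visitor just arrived; too early to ask
    if rules.frequency == "once":
        return v.times_shown == 0
    if rules.frequency == "until_response":
        return not v.has_responded
    raise ValueError(f"unknown frequency: {rules.frequency}")

rules = SurveyRules(frequency="once", target_responses=200, responses_collected=57)
returning = Visitor(is_first_visit=False, pages_viewed=4, times_shown=0, has_responded=False)
print(should_display(returning, "/lessons/intro", rules))  # True
print(should_display(returning, "/checkout", rules))       # False
```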

Where should I collect feedback?

Another common factor to consider is where to collect feedback. In most companies, there are a number of possible channels where feedback can be collected.

Our primary recommendation is to keep in mind that different channels serve different purposes and, of course, reach different types of users. Consider the context of the feedback you are looking for.

If the feedback you need is channel-specific, you know where to begin collecting it. If it is not, keep track of this context and target the right users wherever it makes the most sense.

Here are some potential channels and things to consider related to collecting feedback on them:

If your product is delivered primarily through an app, this is where you will want to focus your feedback collection efforts! Alternatively, if your app is auxiliary to your product offering and you want to increase engagement or downloads, you’ll want to learn from the users who do use your app. Keep in mind that in-app feedback collection typically takes place behind the login wall.

In-app feedback is probably the most useful because it comes from people who are already engaged with your product rather than just loosely interested. Response rates tend to be higher, and the responses themselves more substantial.

"In-app feedback collection is my preferred channel. With in-app feedback, the user is not required to go out of their way to provide insight."

On-site feedback collection is the most common kind we see. This channel is recommended for understanding barriers to purchasing (think exit intent and abandoned shopping carts), and general information about your users. It may be a good idea to compare answers from your site to in-app answers to the same questions. For example, if you ask both in-app and on-site visitors about their job titles, you can compare to see if your web visitors actually match the demographics of your users. This may lead to some marketing insights.

Consider the context in which your users interact with your site. Are they typically on the move when accessing their account or researching your product? If so, you will want to capitalize on this by collecting feedback via your mobile site.

You may also consider deploying your questions via email. This is a good channel for contacting leads who have not converted or current customers who may not spend a lot of time on your site or in your app. Feedback collection via email typically involves following an external link and happens outside of the email client.

However, we don’t recommend a strong focus on email surveys, as many messages go unopened. Weigh this against how much is riding on your questions, since email-distributed surveys are easily ignored.

If you are in the pre-product deployment phase, consider getting feedback from potential users within your mockup tool. For example, InVision has a commenting tool that can make collecting and centralizing qualitative feedback from user testers or other team members (for internal feedback loops) very simple.

When choosing feedback channels (and really all aspects of your feedback program), we like this straightforward rule of thumb from Walter Hannemann, a Product Manager at Dualog: “The less effort required from the user, the more likely it is that they will provide feedback.” We recommend meeting your users where they are for the best results.

How should I collect feedback?

While we’ve covered many of the basics of collecting feedback so far, there are a lot of ways to get feedback wrong. We’ve put together a list of best practices to help you avoid these easy-to-fall-into traps.

Once you know what you need feedback about, think of the questions that would help you investigate the subject. Ask only questions that will provide useful answers and help you make impactful decisions. Keep in mind that some questions may serve a different purpose, such as engaging respondents and easing them into the survey.

One of the biggest temptations when it comes to feedback collection is wanting to know the answers to ALL the questions you have from a million different angles. While this may be the ideal for insight experts everywhere, the truth is that it is not only unrealistic, but also a major annoyance to users.

"My tip: I recommend having your front-end developer with you when conducting a feedback interview. It’s important for them to hear this feedback firsthand! This will make the process faster overall, and make sure everyone is on the same page."

Both vocabulary and syntax should be easy to understand while clearly communicating the subject and intention of the question. If you will be collecting feedback from a very specific group, use their language (jargon, idioms, etc.) within reason.

On the other hand, if your audience is broad and diverse, you’ll also want to adjust accordingly. In that case, the language used should be simple enough to be understood by people without domain-specific knowledge and without losing the ability to glean useful insights.

Make sure you ask questions your users can actually answer, and test your own assumptions about your users’ level of knowledge. If there is still a risk that some users won’t be able to answer, phrase the question so that not knowing the answer won’t make them feel discredited. If users feel they should be able to answer, they may pretend to have the necessary knowledge and skew your results.

"Be hyper-conscious of the language and non-verbal cues you are using. Word choice, body language, and every little form of expression can influence a respondent. If you have the budget for it, be as “invisible” as possible."

Questions shouldn’t put users in a position where they may need to provide an answer with social implications. When possible, anonymous research is preferred for this reason. If you want an honest answer, make them feel that all the answers are acceptable - not only from your perspective but in light of applicable social norms.

Compose questions so that they don’t suggest any particular answer. Watch for these pitfalls of leading questions:

- Avoid making it easier to answer in a particular way. For example, a leading question would be: “Do you support international space programs?” A non-leading question would be: “What is your opinion about international space programs?”

- Another aspect of this issue is including positive or negative implications by using words that have an emotional charge. If you ask a question like, “what did you love about your experience today?” then you are assuming that your users were delighted with their experience.

- Finally, avoid linking possible answers with something desirable (or undesirable). For example, the question “Are you for or against increasing the product price in order to provide better data security?” ties a price increase to better security, nudging respondents to support it.

In some cases, emotionally charged questions are actually helpful. For example, you may want to ask one after a series of emotionally neutral questions to surface strongly held opinions.

Double-barreled questions, or compound questions, ask about two topics but allow for only one answer. While guiding users toward actionable insights is important, you don’t want to influence or confuse their answers. If you ask about two things in one question, you cannot tell precisely which one the user was referring to, and you shouldn’t assume the answer applies to both.

For example, instead of asking “How long did it take you to complete the training module, and on what day of the week did you do it?”, ask two questions:

- How long did it take you to complete the training module?

- What day of the week did you do it?

Instead of “Over the past six months, how would you rate our customer service and response time?”, ask:

- Over the past six months, how would you rate our response time?

- Over the past six months, how would you rate our customer service?

Instead of “How did you like our Help Centre information and our customer support?”, ask:

- How useful did you find our Help Centre information?

- How did you like our customer support? Rate on a scale of 1 to 10.

Order your questions so that users can move from one to the next with ease; the sequence should feel natural. It’s best to start with general questions and then move to more specific ones, narrowing down to the aspect you are researching.

Open-ended questions let users answer in their own words, forming their response the way they want and deciding how much information to give.

Open-ended questions allow you to collect information that may not be possible to collect otherwise. They can partly reduce the risk of users lacking knowledge about the subject, since users share what they want in their own words. Open-ended questions are more conversational and therefore more natural to answer. However, they require more effort from the user.

Closed-ended questions, on the other hand, make data analysis much easier. However, they shouldn’t be used for general, complex problems where users may have different opinions about, or experiences with, different aspects of the matter.

With closed-ended questions, the answers are included in the question, and the user chooses the one closest to their opinion or experience. Closed-ended answers come in several formats:

- Yes or No.

- Multiple choice (radio buttons) with more complex answers, usually 3 to 5 options to choose from.

- Likert scale: typically used to measure the degree of acceptance of an opinion or phenomenon, agreement with a statement, or intensity of feelings. There are typically 5 to 7 points, from very negative to very positive.

While free-form responses can elicit interesting insights, it is easier to understand how the majority of your audience feels if you offer defined, standardized answer choices. This may not be possible for every question, but it will make your life easier when you can implement it. Use closed-ended questions when there is only one frame of reference common to all respondents and no room for other interpretations.
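To see why standardized answers are easier to analyze, consider tallying a Likert item. The sketch below is a minimal Python illustration, assuming answers are coded 1 (most negative) to 5 (most positive); the labels, the sample question, and the responses are made up. It reports counts per point and the “top-2 box” share (respondents choosing one of the two most positive options), one common way to summarize such an item.

```python
from collections import Counter

# Illustrative labels for a 5-point agreement scale, coded 1 (most negative) to 5.
LIKERT_5 = ["Strongly disagree", "Disagree", "Neutral", "Agree", "Strongly agree"]

def summarize_likert(responses: list[int], points: int = 5) -> dict:
    """Count answers per scale point and the share choosing the top two points."""
    if not responses:
        raise ValueError("no responses to summarize")
    if any(r < 1 or r > points for r in responses):
        raise ValueError(f"responses must be coded 1..{points}")
    counts = Counter(responses)
    top_two = counts[points] + counts[points - 1]  # Counter returns 0 for missing keys
    return {
        "counts": {p: counts[p] for p in range(1, points + 1)},
        "top2_share": top_two / len(responses),
    }

# Made-up answers to "The lesson layout was easy to navigate."
summary = summarize_likert([5, 4, 4, 3, 2, 5, 4, 1, 4, 5])
for point, label in enumerate(LIKERT_5, start=1):
    print(f"{label:>17}: {summary['counts'][point]}")
print(f"Agree or strongly agree: {summary['top2_share']:.0%}")  # prints "Agree or strongly agree: 70%"
```

The same tally would be impossible without first coding free-form answers by hand, which is the trade-off described above.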

Don’t distract your users with a flashy design for on-site or in-app surveys. While you may think it will help the survey stand out, it can also impede the usability of your product. Make sure that your question or pop-up matches your branding and design for a seamless experience.

If you are a multinational company or otherwise have global customers, keep in mind that language matters. Make sure the phrasing of your feedback questions translates well into your audience’s primary or native language. Nuances matter here and can make all the difference.

To finish off our best practices, we’re highlighting some tips from one of our contributors, Noah Shrader of Lightstream. Noah shared the checklist he uses to select only the best questions for collecting user feedback:

Regardless of medium, I've found the best process for choosing questions is to write down 1-3 things you're ultimately wanting to learn (your research goals). From there, brainstorm as many questions as possible (could be general or specific). Then, begin editing. Here's a list of things I ask myself when editing down my list of potential questions.

- Is what you're asking clear?

- Is your question focused/on-topic?

- Is it contextually appropriate?

- Could any questions be combined for a stronger ask?

- Do the questions follow a logical sequence?

- Are any of the questions leading or otherwise able to compromise the validity of responses?

- And most importantly, does it tie directly back to what you're trying to learn?

Users have diverse preferences when it comes to communication. By offering multiple channels for feedback, you make it convenient for users to share their thoughts in a way that suits them.

For example, some users may prefer sending an email, while others might be more comfortable using in-app forms or social media platforms. Online survey tools like Qualaroo let you collect feedback across various channels, reaching a broader spectrum of your user base.

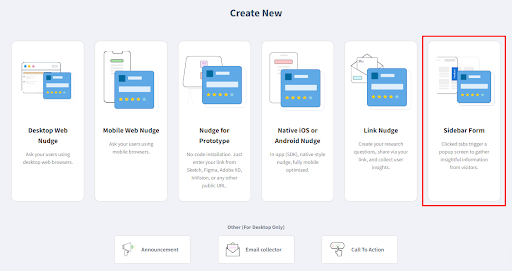

In-app feedback tools are mechanisms embedded directly within your application or website. These tools can take the form of pop-up surveys, feedback buttons, or contextual forms.

The advantage is that users can provide feedback without leaving the application, making the process seamless and increasing the likelihood of user participation. This real-time feedback can be invaluable in understanding user experiences during specific interactions.


Customer support interactions present an opportune moment to gather feedback. When users reach out for assistance, it's an indication that they are actively engaged with your product.

Asking for feedback at this point, either within your chat window or through a post-support survey, lets you capture insights related to the user's recent experience and satisfaction level. It's a natural extension of the user support process.

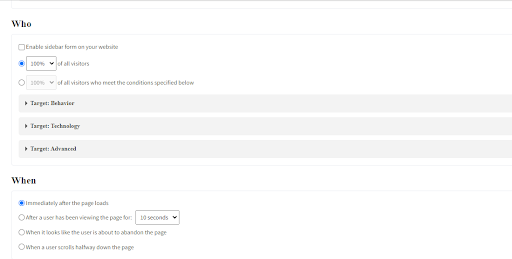

Feedback widgets or pop-ups appear at strategic points within your product or website. These can be triggered by user actions, such as completing a task or spending a certain amount of time on a page.

By prompting users at these moments, you capture spontaneous feedback related to their current experiences. These widgets can be designed to be unobtrusive while still encouraging user engagement.
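The trigger rules described above (a completed task or sustained time on a page) can be sketched as a small decision function. This is a minimal, hypothetical sketch: the `PageSession` record, the task names, and the 30-second threshold are illustrative assumptions, not Qualaroo's API or any specific tool's implementation.

```python
from dataclasses import dataclass, field


# Hypothetical per-session record; field names are illustrative assumptions.
@dataclass
class PageSession:
    seconds_on_page: float = 0.0
    completed_tasks: set = field(default_factory=set)
    already_prompted: bool = False


# Assumed values, chosen only for illustration.
DWELL_THRESHOLD_SECONDS = 30.0
TRIGGER_TASKS = {"checkout_complete", "report_exported"}


def should_show_feedback_widget(session: PageSession) -> bool:
    """Return True when a feedback prompt should appear for this session."""
    # Prompt at most once per session to keep the widget unobtrusive.
    if session.already_prompted:
        return False
    # Trigger 1: the user just finished one of the tasks we care about.
    if session.completed_tasks & TRIGGER_TASKS:
        return True
    # Trigger 2: the user has spent a sustained amount of time on the page.
    return session.seconds_on_page >= DWELL_THRESHOLD_SECONDS


if __name__ == "__main__":
    session = PageSession(seconds_on_page=12.0)
    print(should_show_feedback_widget(session))  # False: no trigger fired yet
    session.completed_tasks.add("checkout_complete")
    print(should_show_feedback_widget(session))  # True: task-completion trigger
```

Capping prompts at one per session is one simple way to keep a widget unobtrusive; real tools typically add further targeting rules on top of triggers like these.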

User interviews involve direct conversations with users to understand their perspectives in-depth. This qualitative approach allows you to explore the reasons behind feedback, uncover user motivations, and gain insights into the emotional aspects of their experiences.

User interviews can provide nuanced information that might be missed through quantitative methods, offering a more comprehensive understanding of user needs and preferences.

A/B testing involves comparing two versions (A and B) of a product or feature to see which performs better on a chosen metric, such as conversion rate. By analyzing how users behave with each version, you gain quantitative insights into what resonates with your audience.

This data-driven approach helps you make informed decisions about product improvements and optimizations based on actual user interactions.
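To make "which performs better" concrete, the sketch below compares the conversion rates of two variants with a two-proportion z-test. This is one common frequentist approach, shown under assumed example counts; it is not necessarily the method any particular A/B testing tool uses, and a real experiment should also fix its sample size and significance threshold in advance.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple:
    """Compare conversion rates of variants A and B.

    Returns (z, two_sided_p_value) using the pooled normal approximation.
    A positive z means variant B converted at a higher rate than A.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis that A and B are equal.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Assumed example: 1,000 visitors per variant, 100 vs. 130 conversions.
    z, p = two_proportion_z_test(100, 1000, 130, 1000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the difference between variants is unlikely to be due to chance alone; it does not by itself say whether the difference is large enough to matter for your product.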

Social media platforms are where many users share their experiences, both positive and negative. By monitoring these channels, you can tap into unsolicited feedback.

This provides a real-time pulse of user sentiments, helping you identify emerging trends, concerns, or areas where users are particularly satisfied. It's a valuable source of unfiltered insights directly from the user community.

User communities are spaces where users interact with each other. By actively participating in or creating such communities, you can observe discussions, answer questions, and directly engage with users.

Doing so provides a platform for users to share feedback, help each other, and express their opinions. It's a more organic way to stay connected with your user base.

Analytics tools, such as Google Analytics and Adobe Analytics, provide quantitative data on how users interact with your product. This includes metrics like page views, click-through rates, and user journeys.

Analyzing this data helps you identify patterns and trends, giving you insights into user behavior. It's a crucial component of understanding the quantitative aspects of user experiences.

Timing is crucial when it comes to feedback surveys. Sending surveys at specific times, such as after a significant product update or feature release, helps ensure that feedback is relevant to recent user experiences. Time-sensitive surveys capture insights when they are most likely to be accurate and to reflect the user's current interaction with your product.

With Qualaroo’s conditional logic, you can trigger your surveys at the right moment and target specific customer segments to capture nuanced insights.

Allowing users to provide feedback anonymously encourages honesty. Some users may hesitate to share their true opinions if they fear repercussions. Anonymity creates a safe space for users to express their thoughts freely, leading to more candid and valuable feedback.

Qualaroo collects all user responses anonymously by default, so no special configuration is required.


Implementing a reward and loyalty system incentivizes users to provide feedback. This could be in the form of giveaways, discounts, or exclusive access to new features. By acknowledging and appreciating user input, you not only encourage feedback but also show that you value and recognize the time and effort users invest in providing their thoughts.

Throughout this guide, we’ve left no stone unturned about user feedback, from what it is and why it's important to how and when to collect these valuable insights.

If you want to collect reliable user insights, here’s a checklist that you can refer to:

- Identify who to get feedback from.

- Set your objectives to collect feedback about the stuff that matters.

- Choose the right time to ask for feedback.

- Pick the right platforms to send your surveys for maximum responses.

- Follow the best practices and strategies to ensure your surveys yield reliable data.

Besides following these steps, there’s one more crucial thing you shouldn’t skip: an all-in-one online survey tool that lets you run diverse surveys on different platforms, helps you zero in on your target audience, and delivers actionable analytics reports in real time.

Frequently Asked Questions

What is a contextual inquiry?

A contextual inquiry involves observing users in their natural environment to understand how they interact with a product or service. For instance, studying how people use a mobile banking app at home can reveal valuable insights.

Is a contextual inquiry the same as a contextual interview?

Contextual inquiry and contextual interview are related but not identical. Both involve studying users in their context, but the interview focuses on direct questioning, while the inquiry emphasizes observation and interaction.

What are the 5 contextual factors?

The five contextual factors are the physical, social, organizational, temporal, and informational elements that influence a user's experience with a product or service.

What are contextual characteristics?

Contextual characteristics are the specific traits or features of the user's environment, such as the physical setting, cultural influences, time constraints, and available information.
