We’re heading into the holiday season of 2023, and still, the words that Philip Kotler said decades ago ring truer than ever: “customer is king.”

With the approval of your kings (and queens), your product - app, website, software, or any other offering to them - is bound to do well in the market. Which brings us to the question:

How can you tell what goes on in your customers’ minds?

Put another way, is your product going to help your target segment overcome their challenges?

Before you go all-in and launch, ensure that you’ve made a sure bet - with usability testing.

This guide answers ‘what is usability testing’ and delves into some methods, questions, and ways to analyze the data.

TL;DR tip: Scroll to the end for a convenient list of FAQs about usability testing.

- Usability Testing - What Is It?

- How You Benefit From Usability Testing

- Common Usability Testing Methods

  - Remote [Moderated/Unmoderated]

  - Benchmark Comparison

  - Open-Ended [Exploratory]

  - Contextual [Informed users]

  - Guerilla [Random public]

- What Questions to Ask During Usability Testing

- Qualaroo Templates for Usability Testing

- Best Practices To Follow for Usability Testing

- Mistakes To Avoid in Usability Testing

- FAQs

What is Usability Testing?

Usability testing answers your questions about any and all parts of your product’s design. From the moment users land on your product page to the time they show exit intent, you can get feedback about user experience.

Using this feedback, you can refine prototypes into products that delight your customers and improve conversion rates. It works just as well when you want to improve a product that is already on the market.

Why Is Usability Testing Important?

Usability testing is essential because it makes your website or product easier to use. With so much competition out there, a user-friendly experience can be the difference between converting visitors into customers and losing them to your competitors.

For example, the bounce rate of a web page that takes longer than 3 seconds to load can jump as high as 38%, meaning more people leave the site without converting.

In the same way, a product with a complicated information architecture steepens the learning curve for users. It may confuse them enough that they abandon it for a competitor's product.

When you’re told by unbiased testers or users that your product “feels like it’s missing something,” but they can’t tell you what “something” is, isn’t it frustrating? It’s a pity you can’t turn into Sherlock Holmes and ‘deduce’ what they mean by that vague statement.

In his book Kotler on Marketing: How to Create, Win, and Dominate Markets, Philip Kotler even quotes Sherlock Holmes’s creator, Sir Arthur Conan Doyle: “It is a capital mistake to theorize before one has data.”

How cool would it be to turn the subjective, vague survey responses into objective, undeniable data? This data can be the source of quite ‘elementary’ as well as pretty deep insights that you’ve been seeking.

That’s where usability testing comes in.

It puts users in the driver's seat and lets them guide you to every point that stands between them and a good experience.

Whether it's a prototype or a working product, you can run usability tests at any stage to streamline flows and other elements.

Benefits of Usability Testing

It’s time to see what benefits usability testing can bring to your product development cycle. Whatever stage your product is at, you can run usability tests to pinpoint critical issues and optimize the user experience.

1. Identify Issues With Process Flows

There are so many processes and flows on your website or product, from creating an account to checking out. How do you know whether they are easy to understand and use?

For example, a two-step checkout may seem like a better optimization strategy than a three-step one. You can use A/B testing to see which one brings more conversions.

But which one is easier to understand? A two-step checkout has fewer steps than a three-step checkout. Even so, packing more into each step can make the process harder to follow and leave first-time shoppers confused.

That is where usability testing helps. It reveals which version users actually find easier, so you can simplify the process for them.

Case Study - How McDonald's improved its mobile ordering process

In 2016, McDonald's rolled out their mobile app and wanted to optimize it for usability. They ran the first usability session with 15 participants and at the same time collected survey feedback from 150 users after they had used the app.

The findings helped them add more flexibility to the ordering process by introducing new collection methods. They also added a feature that let users place an order from wherever they were and collect the food on arriving at the restaurant. This improved customer convenience and avoided congestion at the restaurants.

Following the success of their first usability test iteration, they ran successive usability tests before releasing the app nationwide.

They wanted to test the end-to-end process for all collection methods to understand the app usability, measure customer satisfaction, identify areas of improvement, and check how intuitive the app was.

They ran 20 usability tests for all end-to-end processes and surveyed 225 first-time app users.

With the help of the findings, the app received a major revamp of its user interface (UI) elements, such as more noticeable CTAs. The testing also uncovered new collection methods, which were added to the app.

2. Optimize Your Prototype

Just like in the McDonald's case in the previous point, usability testing can help you evaluate whether your prototype or proof of concept meets users' expectations.

It is an important step during the early development stages. It lets you improve the flows, information architecture, and other elements before you finalize the product design and start building it.

It saves you the painstaking effort of tearing down what you've built because it doesn't work for your users.

Case Study - SoundCloud used testing to build an optimized mobile app for users

When SoundCloud moved its focus from desktop to mobile app, it wanted to build a user-friendly experience for the app users and at the same time explore monetization options. With increased demand on the development schedule because of the switch from website to mobile, the SoundCloud development team decided to use usability testing to discover issues and maintain a continuous development cycle.

The first remote usability test iteration involved 150 testers from 22 countries and found over 150 bugs that affected real users. It also allowed the team to scale up testing to include participants from around the world.

SoundCloud now follows an extensive testing culture that lets it release smoother updates with fewer bugs. This helps the team add new features with better mobile compatibility and streamline their revenue models.

Bonus Read: Step by Step: Testing Your Prototype

3. Evaluate Product Expectations

With usability testing, you can check whether the product performs as intended in the actual users' environment and whether it is easy to use. The key question it answers is this: does the product have all the features users need to complete their goals in their preferred conditions?

Case Study - Udemy maps expectations and behavior of mobile vs. desktop app users

Udemy, an online education platform, follows a user-centric approach and learns from its users' feedback. This has helped the team put customer insights into the product roadmap.

The team wanted to understand the behavioral differences between desktop and mobile users to map their expectations.

So they decided to run a diary study using remote unmoderated testing. This approach would let them include participants from different user segments and provide rich insights into the product.

They asked participants to use a mobile camera to record what they were doing while using the app. This let the team study both the participants' behavior and their environment.

The data from the studies were centralized into one place for the teams to take notes of the issues and problems that people faced while using the Udemy platform.

With remote testing, the Udemy team got insights into how students used the app in their environment, whether on mobile or desktop. For example, the initial assumption that people used the mobile app on the go was proven wrong. Instead, the users were stationary even when they were on the Udemy mobile app.

It also shed new light on the behavior of mobile users, and the team fed these insights into future product and feature planning.

Bonus Read: Product Feedback Survey Questions & Examples

4. Optimize Your Product or Website

With every usability test, you can find major and minor issues that hinder the user experience and fix them to optimize your website or product.

Case Study - Satchel uses usability testing to optimize their website for conversions

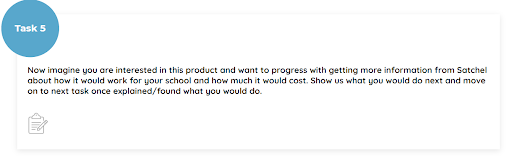

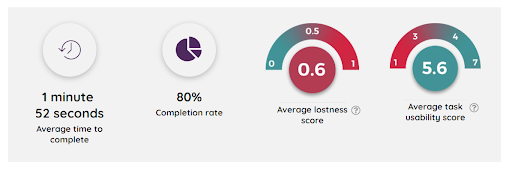

Satchel, an online learning platform, wanted to test the usability of its website and feed the results into its conversion rate optimization process. The test recruited 5 participants to perform specific tasks and answer questions, which helped the team review the functionality and usability of user journeys.

The findings revealed one major usability issue in the flow where users were asked to find pricing information.

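This task showed a high lostness score (0.6). Lostness compares the path a user actually took with the shortest possible path. It combines three counts: the unique pages visited, the total pages visited (including revisits), and the minimum number of pages needed to complete the task. A score of 0 means a perfect path, and scores above roughly 0.5 are generally read as a sign that users are lost. In other words, participants were wandering or taking far more steps than necessary to complete the task. Some even got frustrated, which with real users would likely mean lost customers.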
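The lostness measure commonly used in UX research comes from Smith (1996). It is L = sqrt((N/S - 1)^2 + (R/N - 1)^2), where N is the number of unique pages visited, S is the total pages visited, and R is the minimum number of pages the task requires. Below is a minimal Python sketch of that formula for a single task attempt. The page counts in the usage lines are hypothetical and are not Satchel's data.

```python
import math


def lostness(unique_pages: int, total_pages: int, min_pages: int) -> float:
    """Lostness L = sqrt((N/S - 1)^2 + (R/N - 1)^2) for one task attempt.

    N = unique_pages: number of different pages the participant visited
    S = total_pages:  total page visits, including revisits
    R = min_pages:    minimum number of pages needed to complete the task
    L is 0.0 for a perfect path and grows as the path wanders.
    """
    if min_pages <= 0 or unique_pages <= 0 or unique_pages > total_pages:
        raise ValueError("expected R > 0 and 0 < N <= S")
    n, s, r = unique_pages, total_pages, min_pages
    return math.sqrt((n / s - 1) ** 2 + (r / n - 1) ** 2)


# Hypothetical attempts at a 3-page task (not data from the case study).
print(round(lostness(3, 3, 3), 2))   # 0.0, a perfect path
print(round(lostness(6, 10, 3), 2))  # 0.64, 6 different pages and 10 visits in total
```

Under the commonly cited rule of thumb, a score like 0.64, or Satchel's 0.6, sits above the roughly 0.5 level at which users are typically considered lost.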

Using these insights, the team decided to test a new design that added 'Pricing' and 'Book a demo' links to the navigation menu.

The results showed a 34% increase in 'Book a demo' requests, validating their hypothesis.

The example clearly shows how different tests can be used to develop a working hypothesis and test it out with statistical confidence.
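"Statistical confidence" for a change like this usually comes from a significance test on the conversion numbers. The case study does not say which test Satchel used, so the sketch below is an assumption. It runs a standard two-sided, two-proportion z-test in plain Python on hypothetical visitor counts that show the same 34% lift.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided pooled z-test for the difference between two conversion rates.

    conv_a / n_a: conversions and visitors for the original design (A)
    conv_b / n_b: conversions and visitors for the new design (B)
    Returns (z, p_value) using the normal approximation.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical data: a 2.5% baseline and a 34% relative lift, at two traffic levels.
for conv_a, conv_b, visitors in [(50, 67, 2_000), (500, 670, 20_000)]:
    z, p = two_proportion_z_test(conv_a, visitors, conv_b, visitors)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"{visitors} visitors per variant: z={z:.2f}, p={p:.4f} -> {verdict} at 5%")
```

With these made-up numbers, the same 34% lift is not significant at 2,000 visitors per variant (z is about 1.6). It is clearly significant at 20,000 visitors per variant. This is why it pays to settle the sample size before the test starts.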

Bonus Read: Website Optimization Guide: Strategies, Tips & Tools

5. Improve User Experience

In all the studies discussed above, the ultimate aim of usability testing was to improve user experience. Users who find your website or product interactive and easy to use are more likely to convert into customers.

Case study - Autotrader.com improves user experience with live interviews

Autotrader.com, an online marketplace for car buyers and sellers, was looking to improve users' car buying experience on the website. To understand user behavior and map their journey, the team used live conversations to connect with recent customers.

Remote interviews made it possible to reach users across the country and understand how their behavior changed with location, demographics, and other socioeconomic factors. This helped the testing team connect with users from different segments and compare their journeys.

They discovered one shared experience - both new and experienced customers found the process of finding a new car very exhausting.

“Live Conversation allows me to do journey mapping type interviews and persona type work that I couldn’t do before because of staff and budget constraints— bringing these insights into the company faster and at a much lower cost.”

Bradley Miller, Senior User Experience Researcher at Autotrader

Live interviews also helped uncover new insights about the consumer shopping process. The team found out that most consumers started their car buying journey from search engine queries.

Users were not looking for a third-party car website, as the team had previously assumed. This meant they could land on any page of the website. It became clear that the landing pages needed a revamp to give visitors a more customer-centric experience.

The team redesigned each page to act as a starting point for the visitor journey, dispelling the assumption that people already knew about the website.

Types of Usability Testing

Before you conduct a usability test, it is crucial to understand the different types of usability testing. That way, you can pick the one that suits your needs and available resources.

The main types of usability testing are:

1. Moderated & Unmoderated Usability Testing

| Moderated Usability Testing | Unmoderated Usability Testing |
|---|---|
| Carried out in the presence of a moderator who oversees the entire session. | Does not require a moderator, but you need a tool that can guide participants through the test and display test instructions clearly. |
| Usually carried out in lab conditions. | Can be carried out in lab settings or in users’ own environments, such as home or office. |
| The moderator observes user behavior and actions, so this method may or may not require a recording tool. | Requires a recording tool to collect data for analysis after the test. |
| Provides deeper insights than unmoderated testing, as the moderator and participant can ask each other questions. | Insights can only be collected through predefined questions that the testing tool shows to users. |

2. Remote and In-Person Usability Testing

The difference between remote and unmoderated testing is that a moderator may be present during a remote usability test.

| In-person Usability Testing | Remote Usability Testing |
|---|---|
| It is carried out in the physical presence of a moderator in lab testing conditions. | Can be carried out over the internet or phone in the absence or presence of a moderator. |
| Expensive and takes more time than remote testing. | It is a cost-effective method as it does not require any physical venue and other preparations. |
| Provides in-depth feedback as the moderator can study the behavior of the user. | The data collected is limited as compared to in-person testing. |
| Can test only a few participants at a time. | Can test a large number of users simultaneously. |

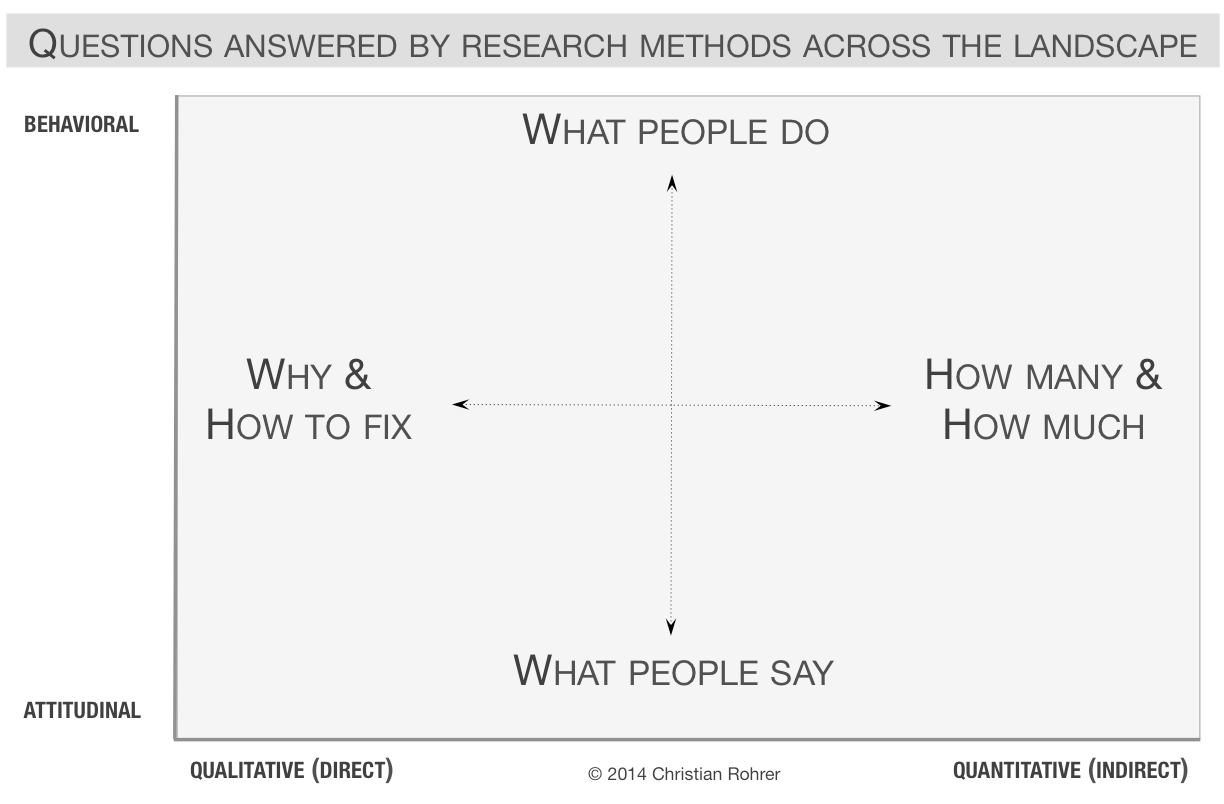

3. Qualitative and Quantitative Usability Testing

| Qualitative Usability Testing | Quantitative Usability Testing |
|---|---|
| Assesses usability in qualitative terms, such as whether something is easy or hard to use. | Measures quantifiable metrics such as task completion time, success rate, number of failures, etc. |
| A direct measure of product usability, as it gives clear insights into issues and problems straight from participants’ feedback. | An indirect way of testing product usability, as it measures usability through user performance. |
| Points out the exact problems that participants faced during the test. For example, 70% of the participants who failed a task gave feedback about a missing step in the checkout process. | Only points you toward the problem area. For example, if 70% of users could not complete a task, there is a problem with the flow or design, but the numbers alone don't tell you what it is. |
| Findings are based purely on the moderator's experience, interpretations, and knowledge, so they can vary from moderator to moderator. | Provides numbers that can be tested for statistical significance and compared across successive test rounds. |
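To make small-sample numbers comparable across test rounds, it helps to report them with a confidence interval. One option often recommended for task completion rates from small samples is the adjusted Wald interval. The sketch below is a minimal Python version, and the 7-out-of-10 result is hypothetical.

```python
import math


def adjusted_wald_interval(successes: int, attempts: int, z: float = 1.96):
    """Adjusted Wald (Agresti-Coull style) interval for a completion rate.

    Adds z^2/2 successes and z^2/2 failures before computing a normal interval,
    which behaves better than the plain Wald interval at small sample sizes.
    Bounds are clipped to [0, 1]; z = 1.96 gives roughly 95% confidence.
    """
    n_adj = attempts + z ** 2
    p_adj = (successes + z ** 2 / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


# Hypothetical round: 7 of 10 participants completed the checkout task.
low, high = adjusted_wald_interval(7, 10)
print(f"Completion rate 70%, 95% CI roughly {low:.0%} to {high:.0%}")
```

Comparing intervals across rounds, rather than single percentages, makes it harder to over-read small changes between tests.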

4. Benchmark or Comparison Usability Testing

Benchmark or Comparison testing is done to compare two or more design flows to find out which works best for the users.

For example, you can test two different designs for your shopping cart menu -

- one that appears after hovering the cursor over the cart icon

- the other that shows as a dropdown after clicking on it.

It is a great way to test different solutions to the same problem/issue to find the optimal solution preferred by your users.

You can run benchmark testing at any stage of your product development cycle.

Usability Testing Methods

Now that you know the types of usability tests, let's discuss the different usability testing methods.

Each method suits different situations and takes a different approach to testing participants. You can combine multiple methods to get deeper insights into your users.

1. Lab Testing

Lab usability tests are conducted under controlled conditions in the presence of a moderator. Users perform tasks on the website, product, or software while the moderator observes them and takes notes on their actions and behavior. The moderator may also ask users to explain their actions to collect more information.

The designers, developers, or other personnel related to the project may be present during the test as observers. They do not interfere with the testing conditions.

Advantages:

- It lets you observe the users closely and interact with them personally to collect in-depth insights.

- Since the test is performed under controlled conditions, it offers a standardized testing environment for all users.

- It is an excellent method to test product usability early in the development stage. You can even test early concepts using wireframes, a technique called paper prototyping.

Limitations of lab usability testing

- It is one of the most expensive and time-consuming testing methods.

- The sample size is usually small (5-10 participants), so it may not represent your entire customer base.
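The 5-10 participant range is usually justified with Nielsen and Landauer's problem-discovery model. In that model, the share of problems found by n users is 1 - (1 - L)^n. The sketch below assumes their often-quoted average of L = 0.31, the chance that one user reveals a given problem. Your own product's rate may be quite different, and the model assumes every problem is equally likely to be found.

```python
def share_of_problems_found(users: int, detect_rate: float = 0.31) -> float:
    """Expected share of usability problems found by `users` participants.

    detect_rate is the probability that a single participant encounters a given
    problem; 0.31 is the average Nielsen and Landauer reported, not a constant.
    """
    return 1 - (1 - detect_rate) ** users


for n in (1, 3, 5, 10, 15):
    print(f"{n:>2} users -> {share_of_problems_found(n):.0%} of problems")
```

Under these assumptions, five users uncover roughly 84% of problems and ten uncover about 98%. This is why many teams prefer several small rounds over one large one.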

Tips for conducting effective lab usability tests:

- Always make the participants aware that you are testing the website or product and not testing them. It will help to alleviate the stress in the users' minds.

- Keep your inquiries neutral and avoid leading questions. Instead, use open prompts such as 'Tell me more about that' or 'Is there anything you'd like to add about this task?'

- Prioritize user behavior over their answers. Feedback does not always reflect the actual experience. A user may have had a bad experience but still give a four- or five-star rating to be polite.

2. Paper Prototyping

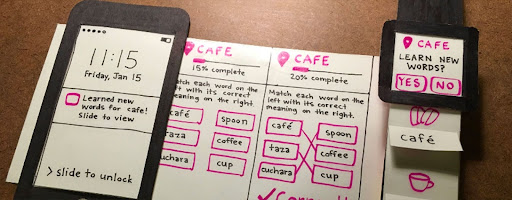

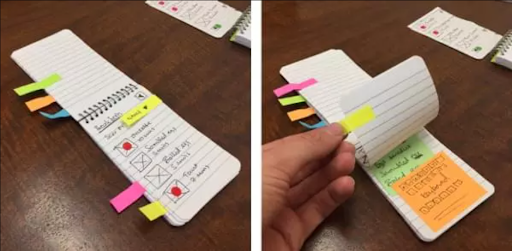

Paper prototyping is an early-stage form of lab usability testing, performed before the product, website, or software goes into production. It uses wireframes and paper sketches of the design interface to run the test.

Different paper screens are created for the various scenarios in the test tasks. Participants are given the tasks and point to the elements they would click on the paper interface. A person playing the 'human computer' (usually a developer or the moderator) then swaps in new paper sketches to show the next layout, snippet, or dropdown, just as it would appear in the product UI.

The moderator observes the user's behavior and may ask questions about their actions to get more information about the choices.

Example Usability Test with a Paper Prototype

Advantages

- One of the fastest and cheapest methods to optimize the design process.

- No coding or high-fidelity design is required. It helps to test the usability of the design before putting effort into creating a working prototype.

- Since the usability issue is addressed in the planning phase, it saves time and effort in the development cycle.

Limitations

- The paper layouts can be time-consuming to prepare, especially for flows with many screens.

- It requires a controlled environment to perform the test properly, which adds more cost.

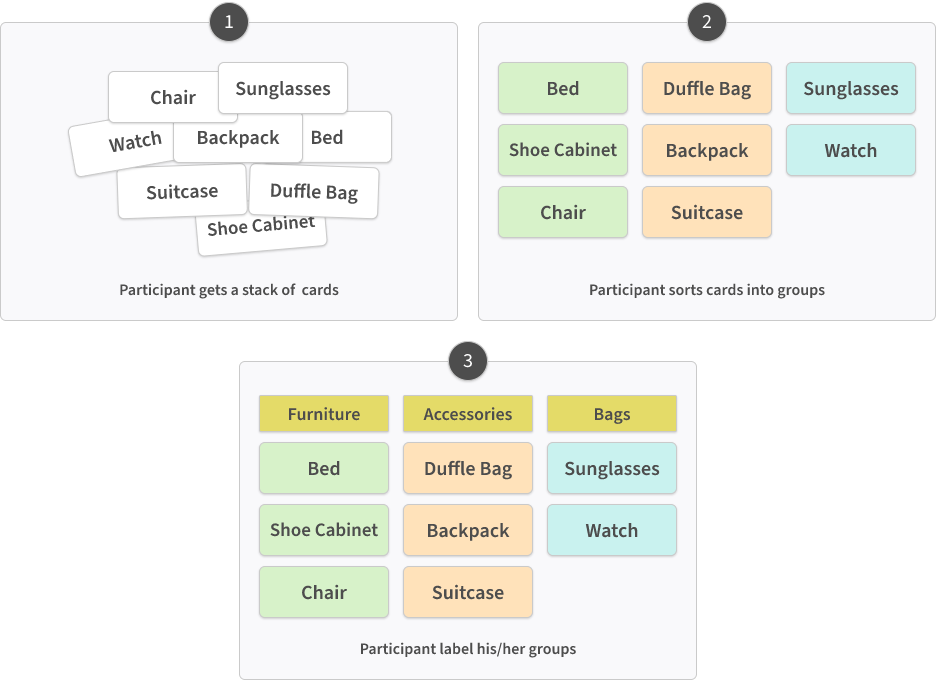

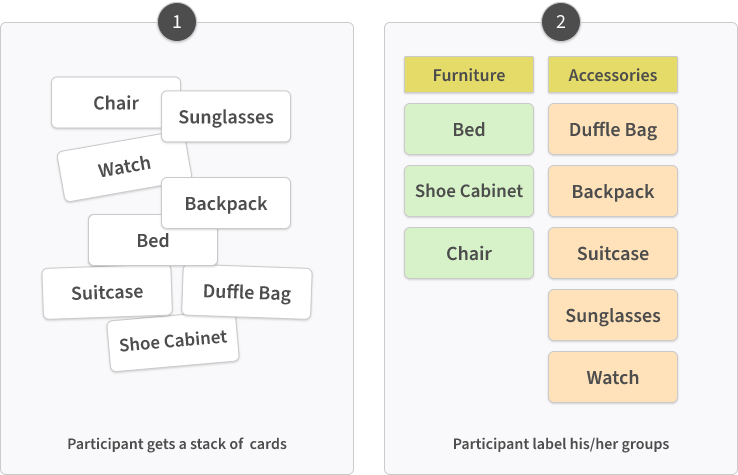

3. Moderated Card Sorting

Card sorting is helpful in optimizing the information architecture on your website, product, or software. This usability testing method lets you test how users view the information and its hierarchy on your website or product.

In moderated card sorting, the users are asked to organize different topics (labels) into categories. Once they are done, the moderator tries to find out the logic behind their grouping. Successful card sorting requires around 15 participants.

When to use card sorting for usability testing?

You can use card sorting to:

- Streamline the information architecture of your website or product.

- Design a new website or improve existing website design elements, such as a navigation menu.

Types of card sorting tests:

Whether it’s a moderated or unmoderated card sorting test, there are three types of card sorting:

| | Open Card Sorting | Closed Card Sorting | Free Card Sorting |
|---|---|---|---|
| What is predefined | The labels (topics) | Both the topics and the categories | Nothing |
| What participants do | Create their own categories and group the given topics into them | Group the given topics into the given categories | Create both the categories and the topics |
| When it is useful | When you have the topics in mind and want to see how users will process and group the information | When you want to gauge how users will sort the given content into categories | During the very early stage of development, when you want to come up with a website design |

Advantages of card sorting:

- It is a user-focused method that helps to create streamlined flows for users.

- It is one of the fastest and least expensive ways to optimize a website's information architecture.

Limitations

- It’s highly dependent on users’ perceptions, especially in free sorting.

- In some cases, each participant may create categories with no common attributes, leading to a failed test.

4. Unmoderated Card Sorting

In unmoderated card sorting, users sort the cards on their own, without a moderator. You can set up the test remotely or in lab conditions. It is much quicker and less expensive than moderated card sorting.

Unmoderated card sorting is usually done with an online tool like Trello or Mural. The tool keeps a record of how participants sorted the cards for analysis later.
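Whichever tool you use, a common way to analyze card sorts is a co-occurrence (similarity) matrix. For every pair of cards, it records the share of participants who put them in the same group. The sketch below computes one in plain Python from hypothetical sorts, and the card and group names are made up.

```python
from collections import defaultdict
from itertools import combinations


def co_occurrence(sorts):
    """Return {(card_a, card_b): share of participants who grouped them together}.

    `sorts` holds one dict per participant mapping that participant's
    group name to the list of cards they placed in it.
    """
    together = defaultdict(int)
    for groups in sorts:
        for cards in groups.values():
            # Sort so each pair has one canonical key regardless of order.
            for pair in combinations(sorted(cards), 2):
                together[pair] += 1
    return {pair: count / len(sorts) for pair, count in together.items()}


# Hypothetical sorts from three participants.
sorts = [
    {"Buying": ["Pricing", "Plans"], "Learn": ["Blog", "Guides"], "Help": ["Contact"]},
    {"Cost": ["Pricing", "Plans", "Contact"], "Content": ["Blog", "Guides"]},
    {"Money": ["Pricing"], "Resources": ["Blog", "Guides", "Plans"], "Support": ["Contact"]},
]

matrix = co_occurrence(sorts)
for (a, b), share in sorted(matrix.items(), key=lambda item: -item[1]):
    print(f"{a} + {b}: {share:.0%}")
```

Pairs near 100%, such as Blog and Guides here, are strong candidates to sit under the same navigation heading. Pairs that only some participants grouped point to labels worth probing further.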

5. Tree Testing or Reverse Card Sorting

If card sorting helps you design the website hierarchy, tree testing lets you test the efficiency of a given website architecture design.

You can evaluate how easily users can find the information from the given categories, subcategories, and topics.

The participants are asked to use the categories and subcategories to locate the desired information in a given task. The moderator assesses the user behavior and the time taken to find the information.

You can use tree testing to:

- Test whether the designed groups make sense to people.

- See if the categories are easy to navigate.

- Find out what problems people face while using the information hierarchy.
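Tree-test results are commonly summarized as a success rate and a "directness" rate, meaning success without backtracking. The sketch below is a simplified Python version that treats any path visiting the same node twice as indirect. The navigation labels and participant paths are hypothetical, and real tree-testing tools may define directness differently.

```python
def score_tree_test(paths, target):
    """Summarize one tree-testing task.

    paths: one list of node labels per participant, in the order visited
    target: the node where the requested information actually lives
    Returns (success_rate, direct_success_rate).
    """
    successes = 0
    direct_successes = 0
    for path in paths:
        found = bool(path) and path[-1] == target
        # Revisiting any node (e.g. going back up to "Home") counts as backtracking.
        direct = len(path) == len(set(path))
        successes += found
        direct_successes += found and direct
    return successes / len(paths), direct_successes / len(paths)


# Hypothetical task: "Where would you go to contact the support team?"
paths = [
    ["Home", "Support", "Contact"],                     # direct success
    ["Home", "Products", "Home", "Support", "Contact"],  # success after backtracking
    ["Home", "Products", "Integrations"],               # failure
    ["Home", "Support", "Docs"],                        # failure
]
success, direct = score_tree_test(paths, target="Contact")
print(f"success {success:.0%}, direct success {direct:.0%}")
```

A large gap between the two rates suggests users eventually find the item but the category labels send them down the wrong branch first.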

Example of Card Sorting & Tree Testing

6. Guerilla Testing

Guerilla testing involves approaching random people in public places, such as parks and coffee shops, and asking them to take the test. It eliminates the need to find qualified participants and a testing venue. This makes it one of the most time-efficient and cost-effective ways to collect rich insights about your design prototype or the concept itself. An acceptable sample size is between 6 and 12 participants.

Advantages

- It can be used as an ad hoc usability testing method to gather user insights during the early stages of development.

- It is an inexpensive and fast way of collecting feedback as you don’t need to hire a specific target audience or moderator to conduct the test.

- You can even use the paper prototype to conduct guerilla testing to optimize your design.

Limitations

- Since participants are chosen at random, they may not represent your actual audience.

- The test needs to be short in length as people may be reluctant to give much time for the test. The usual length for guerilla testing is 10-15 minutes per session.

7. Session Recordings or Screen Recording

Session recording is a very effective way to visualize the user interactions on your functional website or product. It is one of the best unmoderated remote usability testing methods to identify the visitors' pain points, bugs, and other issues that might prevent them from completing the actions.

This type of testing requires screen recording tools such as SessionCam. Once set up, the tool anonymously records the users' actions on your website or product. You can analyze the recording later to evaluate usability and user experience.

It can help you visualize the user's journey. You can use it to examine the checkout process, perform form analysis, and uncover bugs, broken pathways, or any other issues that lead to a negative experience.
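Recordings are easier to review when you know where to look. Suppose your tool lets you export the steps each session reached (an assumption, since export formats vary by tool). A simple funnel count like the hypothetical sketch below then shows which checkout step loses the most visitors, and therefore which recordings to watch first. The step names are made up.

```python
from collections import Counter

# Hypothetical checkout steps, in order.
FUNNEL = ["cart", "shipping", "payment", "confirmation"]


def funnel_dropoff(sessions, steps):
    """Count sessions reaching each step and the conversion between steps.

    A step counts for a session only if every earlier step also appears in it.
    Returns (reached, report) where report holds (from_step, to_step, rate).
    """
    reached = Counter()
    for events in sessions:
        seen = set(events)
        for step in steps:
            if step not in seen:
                break
            reached[step] += 1
    report = []
    for prev, step in zip(steps, steps[1:]):
        rate = reached[step] / reached[prev] if reached[prev] else 0.0
        report.append((prev, step, rate))
    return reached, report


sessions = [
    ["cart", "shipping", "payment", "confirmation"],
    ["cart", "shipping", "payment"],
    ["cart", "shipping"],
    ["cart"],
]
reached, report = funnel_dropoff(sessions, FUNNEL)
for prev, step, rate in report:
    print(f"{prev} -> {step}: {rate:.0%} continue")
```

In this made-up data, half of the sessions that reach payment never confirm. Recordings of the payment step would be the first ones to review.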

Advantages

- It doesn't require recruiting participants. You can test the website with your real visitors.

- Since the recording runs in the background and the data is collected anonymously, visitors are never interrupted.

- You can use screen recording in conjunction with other methods like surveys to explore the reasons behind users' actions and collect their feedback.

Remote Screen recording with qualified participants

You can also run screen recordings with selected participants using the think-aloud method, in which people voice their thoughts as they perform the given tasks during the test.

In this method, the participants are selected and briefed before the test. It requires more resources than anonymous session recording. It's a fantastic method to collect in-the-moment feedback and actual thoughts of the participants.

8. Eye-Tracking Usability Test

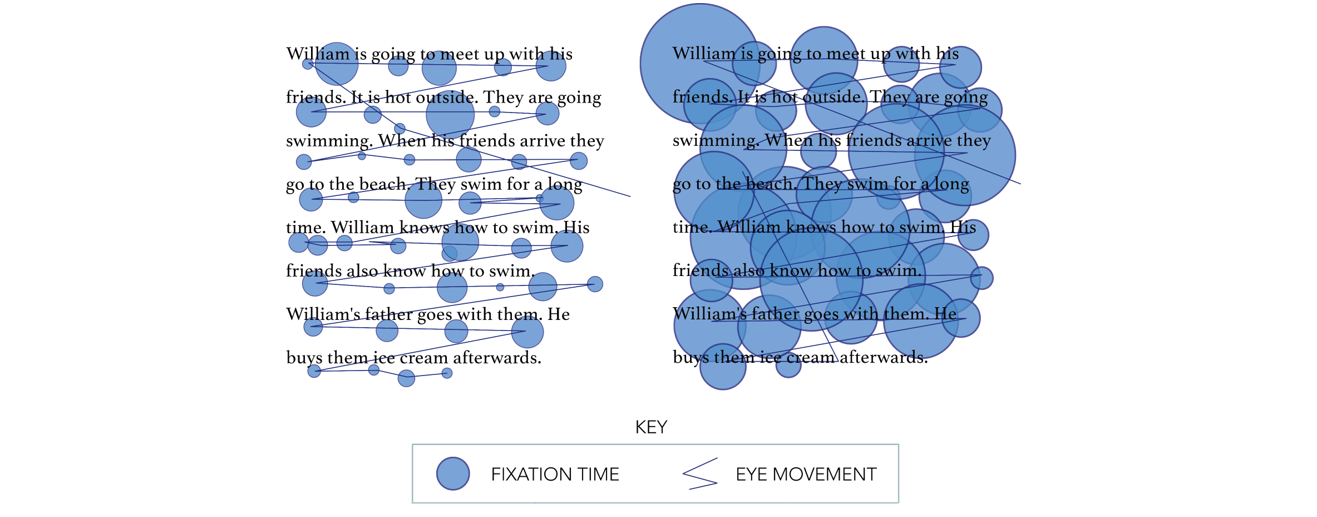

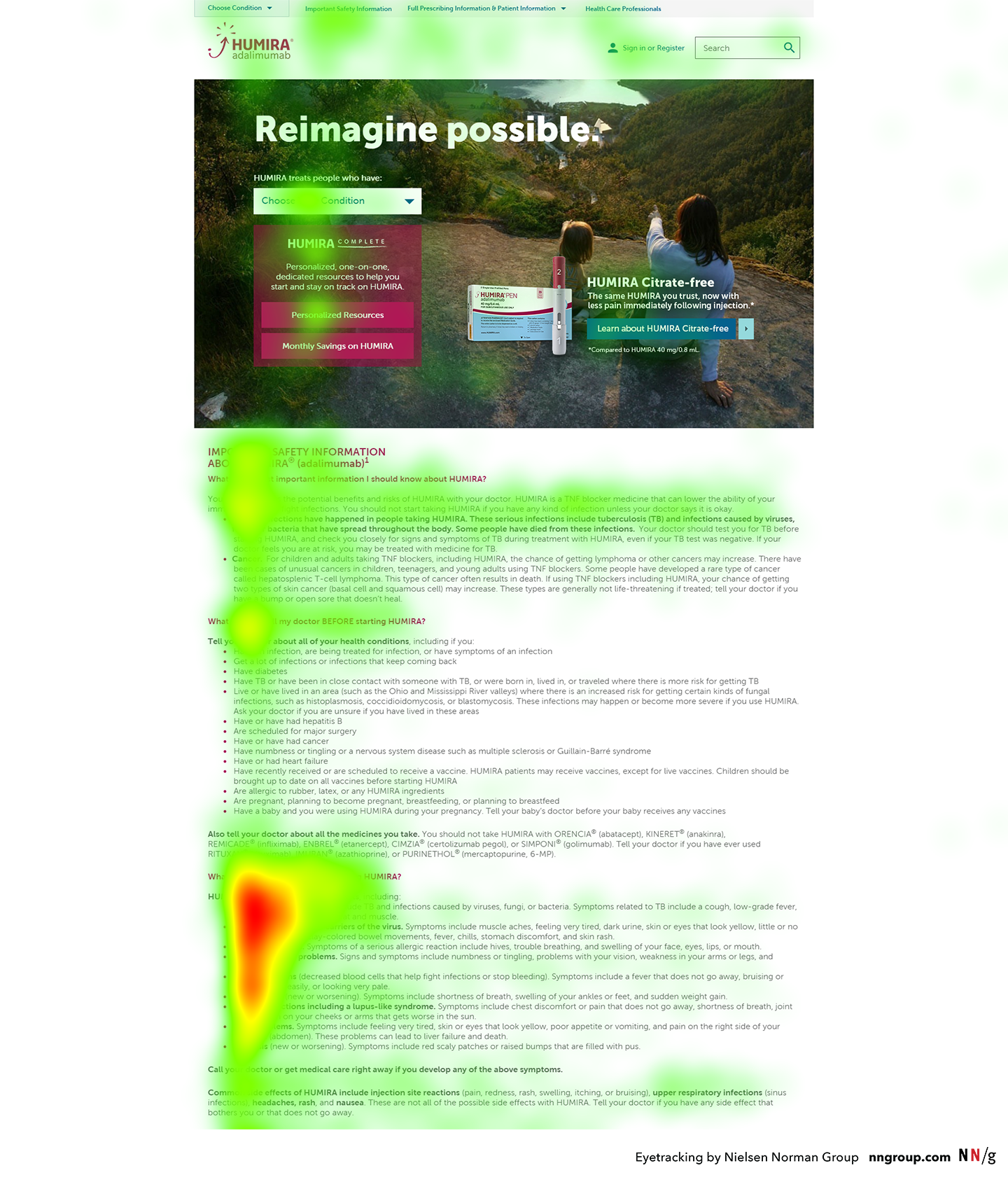

Eye-tracking testing utilizes a pupil tracking device to monitor participants' eye movements as they perform the tasks on your website or product. Like session recording, it is an advanced testing technique that can help you collect nuanced information often missed by inquiry or manual observation.

The eye-tracking device follows the users' eye movements to measure the location and duration of a user's gaze on your website elements.

The results are rendered in the form of:

- Gaze plots (pathway diagrams) - The size of the bubble represents the duration of the user's gaze at the point.

- Gaze replays - Recording of how the user processed the page and its elements.

- Heatmaps - A color spectrum indicating the portions of the webpage that all users gazed at most. You may need as many as 39 users to create reliable heatmaps.
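Under the hood, a gaze heatmap aggregates fixation data from all participants. The simplified Python sketch below just sums fixation durations into a coarse pixel grid. The fixation tuples are hypothetical. Real eye-tracking software typically also smooths the result, for example with a Gaussian kernel, before coloring it.

```python
from collections import defaultdict


def gaze_grid(fixations, cell_px=100):
    """Sum fixation durations (ms) into cell_px x cell_px screen cells.

    fixations: (x_px, y_px, duration_ms) tuples pooled across participants.
    Returns {(column, row): total_ms}; hotter cells drew more attention.
    """
    grid = defaultdict(float)
    for x, y, duration_ms in fixations:
        grid[(int(x // cell_px), int(y // cell_px))] += duration_ms
    return dict(grid)


# Hypothetical fixations from several participants on the same page.
fixations = [(120, 80, 300), (150, 90, 250), (640, 400, 180), (130, 60, 200)]
heat = gaze_grid(fixations)
hottest = max(heat, key=heat.get)
print(hottest, heat[hottest])
```

A smaller cell size gives a finer map but needs more fixations, and therefore more participants, before the pattern is stable.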

This type of testing is useful when you want to understand how users perceive the design UI.

- You can evaluate how users are scanning your website pages.

- Identify the elements that stand out and grab users' attention first.

- Identify the areas of the website that attract the most attention, so you know where to place your CTAs, banners, and messaging.

9. Expert Reviews

In an expert review, a UX expert evaluates the website or product for usability and compliance issues.

There are different ways to conduct an expert review:

- Heuristic evaluation - The UX expert examines the website or product against accepted usability heuristics.

- Cognitive walkthrough - The expert steps through tasks from a user's perspective to gauge the system's usability.

A typical expert review comprises the following elements:

- Compilation of areas where the design excels in usability.

- List of points where the usability heuristics and compliance standards fail.

- Possible fixes for the indicated usability problems.

- Criticality of the usability issues to help the team prioritize the optimization process.

An expert review can be conducted at any stage of the product development. It is an excellent method to uncover the issues with product design and other elements quickly.

But since it requires industry experts and in-depth planning, this type of testing can add substantial cost and time to your design cycle.

10. Automated Usability Evaluation

This last method is more of a proof of concept than a working usability testing methodology. Various papers and studies call for an automated usability tool that can iron out the limitations of conventional testing methods.

Here are two interesting studies that outline the possibilities and applications of an automated usability testing framework.

Conventional testing methods, though effective, carry various shortcomings such as inefficiency of the moderator, high resource demands, time consumption, and observer bias.

With automated usability testing, the tool would be able to perform the following functions on its own:

- Point out major usability issues just like conventional methods.

- Carry out analysis and calculations by itself, providing quicker results.

- Provide more accurate and reliable data.

- Allow for increased flexibility and customization of the test settings to favor all the stages of development.

This would allow developers and researchers to reduce development time, as testing and optimization iterations could be carried out simultaneously.

When should you conduct a usability test?

One of the most common questions about usability testing is, 'When can I do it?' The answer is anytime during the product life cycle: during the planning stage, the design stage, and even after release.

1. Usability Testing During the Planning Stage

Whether you are creating a new product or redesigning it, conducting usability tests during the planning or initial design stage can reveal useful information that can prevent you from wasting time in the wrong place.

This is when you are coming up with the idea for the product or website design. Testing at this point can help you dispel initial assumptions and refine the product flows while they are still on paper.

For example, you can test whether the information architecture you are planning will be easy for users to understand and navigate. Since nothing has been built yet, you can restructure it without much effort if needed.

You can use usability testing methods like paper prototyping, lab testing, and card sorting to test your design concept.

2. Usability Testing During the Design or Development Stage

Now that you have moved into the development stage and produced a working prototype, you can conduct tests to do behavioral research.

At this point, usability testing aims to find out how the functionality and design come together for the users.

- With a clickable prototype, you can uncover issues with flows as well as design elements.

- You can study actual user behavior as they interact with your product or website to gain deeper insights into their actions.

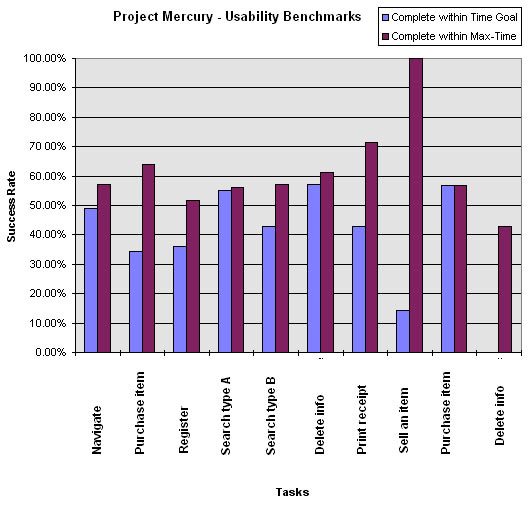

Usability tests during the planning stage mostly give you qualitative insights. With a design prototype, you can also measure quantitative usability metrics, such as:

- Task completion time

- Success rate

- Number of clicks or scrolls

This data can help validate the design and make the necessary adjustments to the process flows before continuing to the next phase of development.
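The metrics above are simple to compute once each attempt is logged. The sketch below assumes a hypothetical log format with one record per participant per task. It summarizes success rate, median completion time for successful attempts, and average clicks, and the numbers are made up.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Attempt:
    participant: str
    completed: bool
    seconds: float
    clicks: int


def summarize(attempts):
    """Success rate, median time of successful attempts, and mean clicks."""
    done = [a for a in attempts if a.completed]
    return {
        "success_rate": len(done) / len(attempts),
        # Times from failed attempts are excluded, a common reporting choice.
        "median_time_s": median([a.seconds for a in done]) if done else None,
        "mean_clicks": sum(a.clicks for a in attempts) / len(attempts),
    }


# Hypothetical results for one task on a clickable prototype.
attempts = [
    Attempt("P1", True, 42, 7),
    Attempt("P2", True, 65, 9),
    Attempt("P3", False, 120, 18),
    Attempt("P4", True, 38, 6),
    Attempt("P5", False, 95, 15),
]
print(summarize(attempts))
```

Reporting the median rather than the mean time limits the effect of one very slow participant. That matters with the small samples typical of prototype tests.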

3. Usability Testing After Product Release

There is always room for improvement, so usability testing is just as crucial after product launch.

You may want to optimize the current design or add new features to improve the product or website.

It is beneficial to test the redesign or update for usability issues before deploying it. It will help to evaluate if the new planned update works better or worse than the current design.

How to Conduct a Usability Test

Running a successful usability test depends on multiple factors such as time constraints, budget, and tools available at your disposal.

Though each usability testing method has a slightly different approach due to the testing conditions and depth of research, they share some common attributes, as explained in this section.

Let’s explore the eight common steps to conduct a usability test.

Step 1: Determine What to Test

Irrespective of the usability testing method, the first step is to draw the plan for the test. It includes finding out what to test on your website or product.

It can be the navigation menu, checkout flow, new landing page design, or any other crucial process.

If it is a new website design, you probably have the design flow in mind. You can create a prototype or wireframe depicting the elements to test.

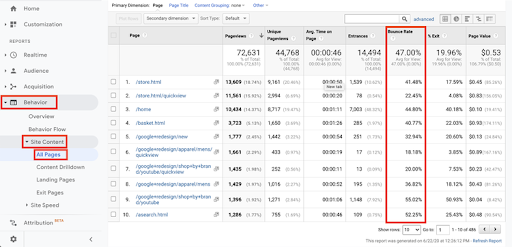

But if you are testing the usability of an existing website or product flow, you can use data from various tools to find friction points.

a. Google Analytics (GA)

Use GA reports and charts as the starting point to narrow your scope. You can locate pages with low conversions and high bounce rates, compare desktop vs. mobile website performance, and compare traffic sources and other details.

b. Survey feedback

Next, deploy surveys on these pages and use the feedback to uncover the problems users face there, such as:

- Issues with the navigation menu.

- Payment problems during checkout, like payment failures or a pending order status even after a successful payment.

- Issues with the shopping cart.

- Missing features on the website or web page.

Depending on your requirements, you can choose from the following lists of survey tools:

1. 25 Best Online Survey Tools & Software

2. Best Customer Feedback Tools

3. 30 Best Website Feedback Tools You Need

4. 11 Best Mobile In-App Feedback Tools

c. Tickets, emails, and other communication mediums

Complete the circle by collating the data from tickets, live chat, emails, and other interaction points.

These can be especially valuable if you offer a SaaS product. Customers’ emails can reveal helpful information about bugs and glitches in the process flows and other elements.

Once you have the data, compile it in one place and start marking the issues based on the number of times they are mentioned, their criticality, the number of requests, and other factors. This lets you prioritize which element to test and helps you set clear measurement goals.
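One way to turn that compiled list into a priority order is to give each issue a simple score. The sketch below is a hypothetical example. The weighting (criticality multiplied by mentions plus requests) and the 1-3 criticality scale are assumptions chosen for illustration, not a standard formula, and the sample issues are made up.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    mentions: int  # times raised across GA notes, surveys, tickets, chats, and emails
    requests: int  # explicit requests to fix or add something
    criticality: int  # team-assigned: 1 = annoying, 2 = slows users down, 3 = blocks a key flow


def priority_score(issue):
    # Hypothetical weighting: criticality multiplies how often the issue comes up.
    return issue.criticality * (issue.mentions + issue.requests)


def rank_issues(issues):
    """Highest-priority candidates for the usability test first."""
    return sorted(issues, key=priority_score, reverse=True)


backlog = [
    Issue("Navigation menu labels unclear", mentions=20, requests=5, criticality=1),
    Issue("Order stays 'pending' after payment", mentions=14, requests=2, criticality=3),
    Issue("No wishlist feature", mentions=3, requests=9, criticality=1),
]
for issue in rank_issues(backlog):
    print(priority_score(issue), issue.name)
```

Because criticality is a multiplier, a less frequent issue that blocks checkout can outrank a frequent but cosmetic complaint. Adjust the weights to reflect what matters most for your product.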

Step 2: Set Target Goals

Set goals for the test so that you can judge its success or failure. The goals can be qualitative or quantitative, depending on what you want to test.

Let's say you want to test the usability of your navigation menu. Start by asking yourself questions that identify the purpose of your test. For example:

- Are users able to find the 'register your product' tab easily?

- What is the first thing users notice when they land on the page?

- How much time does it take to find the customer support tab in the navigation?

- Is the menu easy to understand?

Once you have the specific goals in mind, assign suitable metrics to measure during the test.

For example:

- Successful task completion: Whether the participants were able to complete the given task or not.

- Time to complete a task: The time taken to complete the given task.

- Error-free rate: The percentage of participants who completed the task without making any errors.

- Customer ratings and feedback: Customer feedback after completing the task or test, such as satisfaction ratings, ease of use, star ratings, etc.

These metrics help establish the outcome of the test and plan the next iteration.
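To make the outcome unambiguous, you can write each goal down as a target and check the measured values against it after the test. The sketch below is one minimal way to do that. The metric names and target values for the navigation-menu example are hypothetical placeholders, and so are the measured values.

```python
from dataclasses import dataclass


@dataclass
class Goal:
    metric: str
    target: float
    higher_is_better: bool = True


def evaluate_goals(goals, measured):
    """Return {metric: True/False} depending on whether each target was met."""
    results = {}
    for goal in goals:
        value = measured[goal.metric]
        if goal.higher_is_better:
            results[goal.metric] = value >= goal.target
        else:
            results[goal.metric] = value <= goal.target
    return results


# Hypothetical targets for the navigation-menu example.
goals = [
    Goal("success_rate", 0.80),
    Goal("time_to_find_support_tab_s", 30, higher_is_better=False),
    Goal("error_free_rate", 0.60),
    Goal("ease_rating_1_to_7", 5.5),
]
measured = {
    "success_rate": 0.90,
    "time_to_find_support_tab_s": 42,
    "error_free_rate": 0.70,
    "ease_rating_1_to_7": 5.1,
}
print(evaluate_goals(goals, measured))
```

The `higher_is_better` flag handles metrics such as completion time, where a lower value is the goal.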

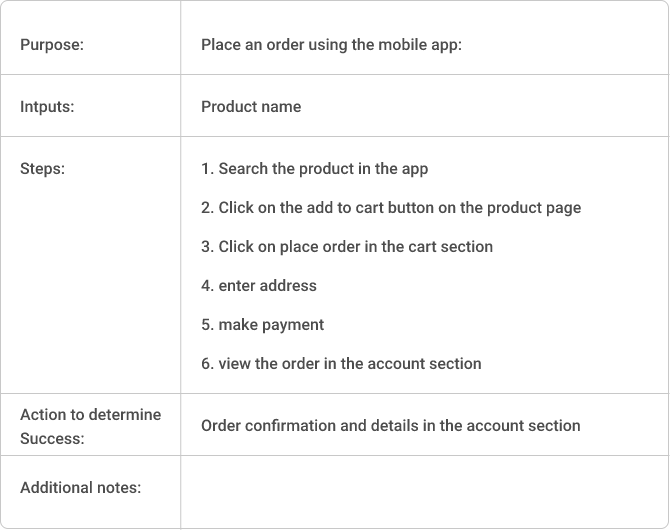

Here is a sample goal template you can use in your usability test:

This is how it will look once filled:

Step 3: Identify the Best Method

Next, find the method best suited to run the test and plan the essential elements for the chosen usability testing method.

For example:

- If you are in the initial stage of design, you can use paper prototyping.

- If it is a new product, go for lab usability testing to get detailed insights into user behavior and product usability.

- If you want to restructure the website hierarchy, you can use card sorting to observe how users interact with the new information structure.

- If it is proprietary software, you can also conduct an expert review to see if it meets all the compliance measures.

Once you have decided on the method, it is time to think about the overheads.

- If it is an in-person moderated test, you need a moderator, a venue, and participants. You also need to estimate the length of each session and the equipment required.

- If it is a remote moderated test, find the right tool to run it. The tool should connect the moderator and participants through a suitable medium like phone or video, and it should let the moderator observe the participants' behavior and actions to ask follow-up questions.

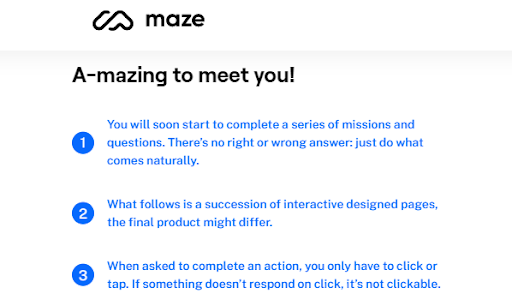

- If it is a remote unmoderated test, the usability testing tool has to present the instructions, schedule the tasks, guide participants through each task, and record the necessary behavioral data at the same time.

Step 4: Write the Usability Tasks

Writing the Pre-test Script

Along with the tasks for the actual test, prepare a pre-test introductory script to get to know the participant (user persona) and explain the purpose of the usability test. You can create scenarios to help the participants relate the product or website to their real-world experience.

Suppose you are testing a SaaS-based project management system. You can use the following warm-up questions to build user personas:

- What is your current role at your company?

- Have you used project management software before?

- If yes, for how long? Are you currently using it?

- If no, do you know what a project management system does?

Use the information to introduce the participant to the test's purpose and tell them about the product if they have never heard of the concept.

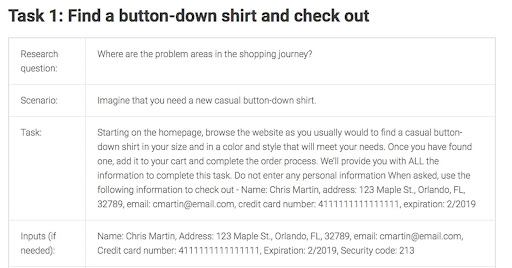

Writing the Test Tasks

Tasks are probably the most important part of usability testing. They are designed as scenarios that prompt the participant to find specific information, reach a specific product page, or take some other action.

A task can be a realistic scenario, straightforward instructions to complete a goal, or a use case.

Pro tip: Use customer feedback data and your knowledge of customer behavior to come up with practical tasks.

Continuing the previous example, let's say you have to create a task for usability testing of your project management tool. Your first scenario could look like this:

'You are the manager of a DevOps team with 20 people. You have to add each team member to your main project, "Theme development." How will you do it?'

This scenario will help you assess the following:

- Can the participant find the teams section in the navigation menu?

- Can they find the correct project, Theme development, in the team menu?

- How fast can they find the required setting?

The second scenario could be:

'Once you have added the team members, you want to assign a task to two lead developers, Jon and Claire, under the "Theme development" project. The deadline for the task is next Friday. How will you do it?'

Use this scenario to test the following:

- How easy is it to navigate the menu?

- Is the design of the task form easy to follow?

- How easily can the participant find all the fields in the task form, such as deadline, task name, developer name, etc.?

If the test is moderated, ask follow-up questions to find the reason behind user actions. If the test is unmoderated, use a screen recording tool or eye-tracking mechanism to record users' actions.

Remember that the sequence of tasks and their associated scenarios will depend on the elements you want to test for usability.

For example:

- For project management software, the primary function is to assign tasks, track productivity and monitor the deadlines.

- For an e-commerce website, the main function is conversions, so your tasks and scenarios would be oriented towards letting the users place an order on the website.

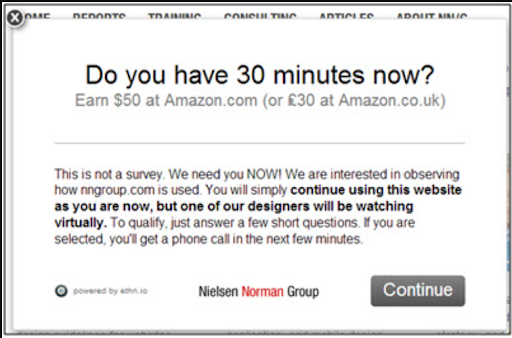

Step 5: Find the Participants

There are multiple ways to choose the participants for your usability test.

- Use your website audience: If you have a website, you can add survey popups to screen the visitors and recruit the right participants for the test. Once you have the required number of submissions, you can stop the popup.

- Recruit from your social media platforms: You can also use your social channels to find the right participants.

- Hire an agency: You can use a professional agency to find participants, especially if you are looking for subject-matter experts (SMEs) or a specific target audience, like people in the IT industry who have experience with project management tools.

To increase the chances of participation, always offer an incentive, such as gift cards or discount codes.
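When you recruit through a website popup, the screener logic amounts to "accept qualifying, non-duplicate respondents until the quota is full, then stop." The sketch below illustrates that idea for the project-management example. The `ScreenerResponse` fields, the role names, and the quota are hypothetical, and most survey tools let you configure this filtering without code.

```python
from dataclasses import dataclass


@dataclass
class ScreenerResponse:
    email: str
    role: str
    used_pm_software: bool


def select_participants(responses, quota, allowed_roles):
    """Keep qualifying, non-duplicate respondents in arrival order until the quota is met."""
    selected, seen = [], set()
    for response in responses:
        if len(selected) >= quota:
            break  # quota reached: this is the point to switch off the popup
        if response.email in seen:
            continue  # ignore repeat submissions from the same person
        seen.add(response.email)
        if response.used_pm_software and response.role in allowed_roles:
            selected.append(response)
    return selected


responses = [
    ScreenerResponse("a@example.com", "Project manager", True),
    ScreenerResponse("b@example.com", "Student", False),
    ScreenerResponse("a@example.com", "Project manager", True),
    ScreenerResponse("c@example.com", "Team lead", True),
    ScreenerResponse("d@example.com", "Team lead", True),
]
chosen = select_participants(responses, quota=2, allowed_roles={"Project manager", "Team lead"})
print([r.email for r in chosen])
```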

Step 6: Run a Pilot Test

With everything in place, it is time to run a pre-test simulation to check whether everything works as intended. A pilot test can help you find issues with the scenarios, equipment, or other test-related processes. It is a quality check of your usability test preparation.

- Choose a candidate who is not related to the project. It can be a random person or a member of a different team not involved with the project.

- Perform the test as if they were the actual participant. Go through all the test sessions and equipment to check everything works fine.

With pilot testing, you can check whether:

- The scenarios are task-focused and easy to understand.

- Any equipment is faulty.

- The pre- and post-test questions are up to the mark.

- The testing conditions are ideal.

Step 7: Conduct the Usability Test

If it is an in-person moderated test, start with the warm-up questions and introductions from the pre-test script. Make sure the participants are relaxed.

Start with an easier task to help the participants feel comfortable, then transition to more specific tasks. Make sure to ask for their feedback and explore the reasons behind their actions, with questions such as:

- How was your experience completing this task?

- What did you think of the design overall?

- Would you like to say something about the task?

For the remote unmoderated tests, make sure that the instructions are clear and concise for the participants.

You can also include post-test questions for the participants, such as:

- Did I forget to ask you about anything?

- What are the three things you liked the most about the product/website/software?

- Did it lack anything?

- On a scale from 1 to 10, how easy was the product/website/software to use?

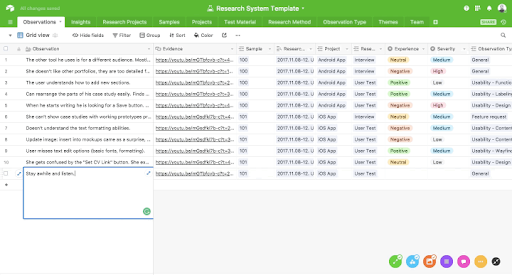

Step 8: Analyze the Results

Once the test is over, it is time to analyze the results and turn the raw data into actionable insights.

a. Start by going over the recordings, notes, and transcripts, and organize the data points in a single spreadsheet. Note down each error the user encountered and the associated task.

b. One way to organize your data is to list the tasks in one column, the issues encountered in the tasks in the next column, and then add the participant's name next to each issue. It will help you point out how many users faced the same problem.

c. Also, calculate the quantitative metrics for each task, such as success rate, average completion time, error-free rate, satisfaction ratings, and others. This will help you track the goals you defined in Step 2.

d. Next, mark each issue based on its criticality. According to NNGroup, issues can be graded on a five-point severity scale from 0 to 4 based on their frequency, impact, and persistence:

0 = I don't agree that this is a usability problem at all

1 = Cosmetic problem only: Need not be fixed unless extra time is available on project

2 = Minor usability problem: Fixing this should be given low priority

3 = Major usability problem: Important to fix, so should be given high priority

4 = Usability catastrophe: Imperative to fix this before product can be released

e. Create a final report with the highest-priority issues at the top and the lowest-priority problems at the bottom. Add a short, clear description of each issue and where and how it occurred. You can attach evidence such as recordings to help the team reproduce it.

f. Add the proposed solutions to your report. Take the help of other teams to discuss the issues, find out the possible solutions, and include them in the usability testing report.

g. Once done, share the report with different teams to optimize the product/website/software for improving usability.
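If your notes live in a spreadsheet, you can script steps (b) through (e) once and reuse them for every round. The sketch below is a minimal illustration. `task_metrics` computes the step (c) numbers for one task. `build_report` groups (task, issue, participant) rows as in step (b), attaches the NNGroup 0-4 severity from step (d), and sorts the report as in step (e). The participant names, issues, ratings, and the 1-7 ease scale are made-up sample data. Averaging completion time over successful attempts only is one common convention, and you may choose differently.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Attempt:
    participant: str
    success: bool
    seconds: float
    errors: int
    ease_rating: int  # post-task rating, assumed here to be on a 1-7 scale


def task_metrics(attempts):
    """Step (c): quantitative metrics for one task."""
    successes = [a for a in attempts if a.success]
    return {
        "success_rate": len(successes) / len(attempts),
        # Averaged over successful attempts only (a convention, not a rule).
        "avg_time_s": mean(a.seconds for a in successes) if successes else None,
        "error_free_rate": sum(a.success and a.errors == 0 for a in attempts) / len(attempts),
        "avg_ease_rating": mean(a.ease_rating for a in attempts),
    }


def build_report(observations, severity):
    """Steps (b), (d), (e): one row per (task, issue), most severe and most common first.

    observations: (task, issue, participant) tuples taken from recordings and notes.
    severity: issue -> NNGroup rating from 0 (not a problem) to 4 (catastrophe).
    """
    affected = defaultdict(set)
    for task, issue, participant in observations:
        affected[(task, issue)].add(participant)
    rows = [
        (task, issue, severity[issue], sorted(people))
        for (task, issue), people in affected.items()
        if severity[issue] > 0  # 0 means "not a usability problem at all"
    ]
    rows.sort(key=lambda row: (-row[2], -len(row[3]), row[0], row[1]))
    return rows


attempts = [
    Attempt("Ana", True, 40.0, 0, 6),
    Attempt("Ben", True, 60.0, 2, 4),
    Attempt("Cy", False, 120.0, 3, 2),
    Attempt("Dee", True, 50.0, 0, 5),
]
observations = [
    ("Add team members", "Teams section hidden under Settings", "Ana"),
    ("Add team members", "Teams section hidden under Settings", "Ben"),
    ("Add team members", "Teams section hidden under Settings", "Cy"),
    ("Assign task", "Deadline field below the fold", "Ana"),
    ("Assign task", "Deadline field below the fold", "Dee"),
    ("Assign task", "Save button label unclear", "Ben"),
    ("Assign task", "Prefers dark mode", "Cy"),
]
severity = {
    "Teams section hidden under Settings": 3,
    "Deadline field below the fold": 3,
    "Save button label unclear": 1,
    "Prefers dark mode": 0,
}
print(task_metrics(attempts))
for row in build_report(observations, severity):
    print(row)
```

Sorting by severity first and then by the number of affected participants keeps catastrophes at the top even when only one person hit them.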

What Questions to Ask During Usability Testing

Do you feel that you know everything there is to know about your product and its users?

If you answered yes, you may ask what the purpose of a usability test is.

No matter how much you know about your customers, it isn’t wise to ignore the possibility that there is more to be learned about them, or about any shortcomings in your product.

That is why what you ask, when you ask, and how you ask are of the utmost importance.

Here are a few examples of usability testing questions to help you form your own.

Questions for ‘first glance’ testing

Check if your design communicates what the product/website is at first glance.

- What do you think this tool/website is for?

- What do you think you can do on this website/in this app?

- When (in what situations) do you think you would use this?

- Who do you think this tool is for? / Does this tool suit your purposes?

- Does this tool resemble anything else you have seen before? If yes, what?

- What, if anything, doesn’t make sense here? Feel free to type in this text box.

Pro-Tip: If you’re testing digitally with a feedback tool like Qualaroo, you can even time your questions to pop up after a pre-set time spent on-site for a more accurate first glance test.

Questions for specific tasks or use cases

Develop task-specific questions for common user actions (depending upon your industry).

- How did you recognize that the product was on sale? (E-commerce and retail)

- What information did you feel was missing, if any? (E-commerce and retail)

- What payment methods would you like to be added to those already accepted? (E-commerce and retail/SaaS)

- How did you decide that the plan you have picked was the right one for you? (SaaS)

- Do you think booking a flight on this website was easier or more difficult than on other websites you have used in the past? (Travel)

- Did sending money via this app feel safe? (Banking/FinTech)

- Do you think data gathered by this app is reliable, safe, and secure from breaches or hacks? (Internet)

Pro-tip: If you want to test the ease with which users perform specific tasks (like the ones listed above), consider structuring your tasks as scenarios instead of questions.

Questions for assessing product usability

Ask these questions after users complete test tasks to understand usability better.

- Was there anything that surprised you? If yes, what?

- Was there anything you expected that was not there?

- What was difficult or strange about this task, if anything?

- What did you find easiest about this task?

- Did you find everything you were looking for? / What was missing, if anything?

- Was there anything that didn’t look the way you expected? If so, what was it?

- What was unnecessary, if anything? / Was anything out of place? If so, what was it?

- If you had a magic wand, what would you change about this experience/task?

- How would you rate the difficulty level of this task?

- Did it take you more or less time than you expected to complete this task?

- Would you normally spend this amount of time on doing this task?

Pro-tip: If you are getting users to complete more than one task, limit yourself to no more than 3 questions after each task to help prevent survey fatigue.

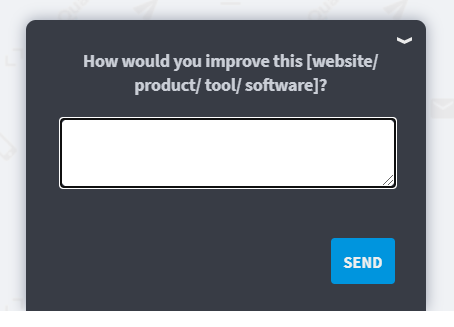

Questions for evaluating the holistic (overall) user experience

Finalize testing with broad questions that collect new information you haven’t considered.

- Try to list the features you saw in our tool/product.

- Do you feel this application/tool/website is easy to use?

- What would you change in this application/website, if anything?

- How would you improve this tool/website/service?

- Would you be interested in participating in future research?

Pro-tip: No matter which way you phrase your final questions, we recommend using an open-ended answer format so that you can provide users with a space to share feedback more freely. Doing so allows them to flesh out their experience during testing and might even inadvertently entice them to bring up issues that you may never have considered.

Qualaroo Templates for Usability Testing

If you’re wondering how to conduct usability testing for the first time or without having to jump through hoops and loops of code, you can simply stroll over to Qualaroo’s survey templates.

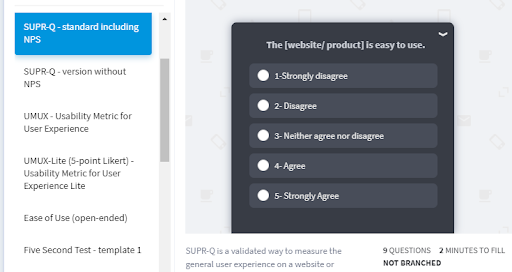

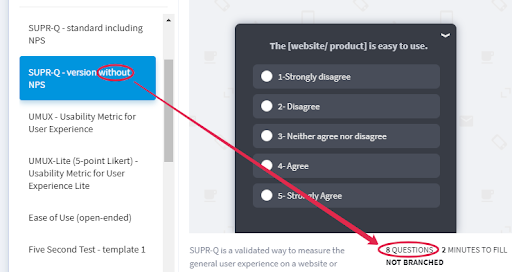

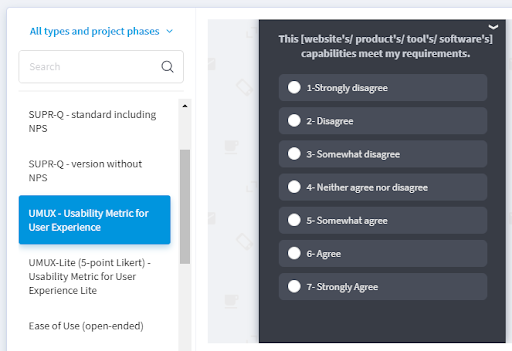

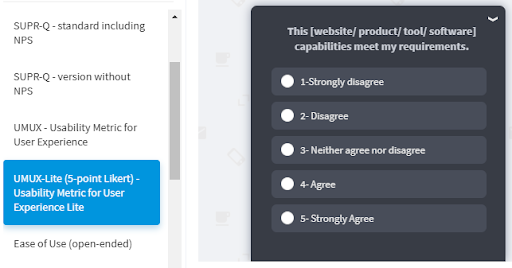

We have created customizable templates for usability testing, like SUPR-Q (Standardized User Experience Percentile Rank Questionnaire - with or without NPS), UMUX (Usability Metric for User Experience - 2 positive & 2 negative statements), and UMUX Lite (2 positive statements).

SUPR-Q is a validated way to measure the general user experience on a website or application. It includes 8 questions within 4 areas: usability, trust/credibility, loyalty (including NPS), and appearance. However, it doesn’t identify bottlenecks or problems with navigation or specific elements of the interface. Scores are expressed as percentile ranks, so 50 is the benchmark: a score above 50 means your product’s UX is better than average.

UMUX allows you to measure the general usability of a product (software, website, or app). It has 4 statements for users to rate on a 5- or 7-point Likert scale. However, it isn’t generally used to measure specific characteristics like usefulness or accessibility, nor for identifying navigation issues, bottlenecks, or problems that are related to specific elements of your product’s interface.
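UMUX results are usually reported on a 0-100 scale. The sketch below follows the commonly published scoring for the 7-point version. Subtract 1 from each positively worded item (1 and 3), subtract each negatively worded item (2 and 4) from 7, sum the four contributions, and divide by 24. Generalizing the divisor to other scale lengths (16 for a 5-point scale) is an assumption of this sketch. UMUX Lite applies the same logic to its two positive statements. SUPR-Q is left out because converting raw SUPR-Q answers into percentile ranks requires benchmark data not covered here.

```python
def umux_score(responses, scale_points=7):
    """UMUX score from 0 to 100.

    responses: the four item ratings in questionnaire order, each from 1 to scale_points.
    Items 1 and 3 are positively worded; items 2 and 4 are negatively worded.
    """
    if len(responses) != 4:
        raise ValueError("UMUX has exactly four items")
    if any(not 1 <= r <= scale_points for r in responses):
        raise ValueError("rating outside the scale")
    item_max = scale_points - 1  # largest contribution a single item can make
    contributions = [
        responses[0] - 1,  # positive item: more agreement is better
        scale_points - responses[1],  # negative item: reverse-scored
        responses[2] - 1,
        scale_points - responses[3],
    ]
    return sum(contributions) / (4 * item_max) * 100


def umux_lite_score(responses, scale_points=7):
    """UMUX Lite score from 0 to 100, from the two positively worded items."""
    if len(responses) != 2:
        raise ValueError("UMUX Lite has exactly two items")
    if any(not 1 <= r <= scale_points for r in responses):
        raise ValueError("rating outside the scale")
    return sum(r - 1 for r in responses) / (2 * (scale_points - 1)) * 100


print(round(umux_score([6, 2, 6, 3]), 1))  # 79.2
print(round(umux_lite_score([6, 6]), 1))  # 83.3
```

Report the average across respondents rather than individual scores, since a single person's rating says little about overall usability.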

On a related note, if you have launched a product aimed specifically at smartphone users and you wish to understand the contextual in-app user experience (UX), simply take these 3 steps.

Best Practices To Follow for Usability Testing

Even though there are multiple usability test methods, they share some general guidelines to ensure the accuracy of the test and results. Let’s discuss some of the do’s and don’ts to keep in mind while planning and conducting a usability test.

1. Always Do a Pilot Test

The first thing to remember is to quality-check the usability test before going live. You wouldn't want anything to fall apart during the actual test, be it a broken link, faulty equipment, or ineffective questions or tasks. Any of these would waste time and resources.

Use a testee who is not associated with the usability test. It can be a member of another team in your organization. Run the usability test simulation under the actual conditions to gauge the efficiency of your tasks and prototype. Pilot testing can help to uncover previously undetected bugs.

It can help to:

- Measure the session time, inspect the quality of the equipment and other parameters.

- Test whether the prototype is designed according to the task. You can add missing flows or elements.

- Test the questions and tasks. You can gauge whether the user understands them easily or not. Are the scenarios clear or not?

2. Leverage the Observer Position

It is always a good practice to let your team attend the usability test as observers. It can produce a two-pronged effect:

- The team can learn firsthand about user experience and how people interact with your product.

- It can help them follow the user journey and observe the points of friction which can aid them in coming up with the optimal solutions keeping the user journey in mind.

Pro tip: Be careful not to disturb or talk to the participants. The observer's role is to be invisible.

3. Determine the Sample Size for Your Usability Test

According to NNGroup, testing with just five users can reveal about 85% of the usability problems.

However, considering other external factors, there are different acceptable sample sizes for different usability testing methods.

For example:

- Guerilla testing would require 5-8 testees.

- Eye-tracking requires at least 39 users.

- Card sorting can produce reliable results with 15 participants.

Pro tip: If you aim to measure usability for multiple audience segments, the sample size would increase accordingly to include representation for each segment.

So, choose a sample size suited to your testing method so that your results are reliable.
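NNGroup's five-user guideline comes from Nielsen and Landauer's problem-discovery model. In this model, the share of problems found by n users is 1 - (1 - L)^n, where L is the probability that a single user encounters a given problem. Nielsen reported an average L of about 31% across the projects studied. Treat 0.31 as a default assumption rather than a property of your product, and keep in mind that the model assumes every problem is equally easy to find.

```python
import math


def share_of_problems_found(n_users, detection_rate=0.31):
    """Expected share of problems found by n users: 1 - (1 - L) ** n."""
    return 1 - (1 - detection_rate) ** n_users


def users_needed(target_share, detection_rate=0.31):
    """Smallest n whose expected share of problems found reaches target_share."""
    return math.ceil(math.log(1 - target_share) / math.log(1 - detection_rate))


for n in (1, 3, 5, 10, 15):
    print(n, f"{share_of_problems_found(n):.0%}")
print(users_needed(0.95))  # 9
```

With L = 0.31, five users are expected to find roughly 84% of problems. Each additional user adds less, which is why NNGroup recommends several small rounds over one large test. If your product's problems are harder to spot (a lower L), the same target needs more users.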

4. Recruit More Users

Not all participants may show up for the usability test, whether it is in-person or remote. That's why it is helpful to recruit more participants than your target sample size. This helps ensure you still reach your target sample size and obtain reliable results.
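How many extra people to invite depends on your expected no-show rate, which you would estimate from your own past studies or your recruiting channel. This guide does not give a figure, so the rates below are hypothetical. The arithmetic is to divide the number of sessions you need by the expected show-up rate and round up.

```python
import math


def participants_to_recruit(target_sessions, expected_no_show_rate):
    """Invite enough people that, after no-shows, target_sessions still take place."""
    if not 0 <= expected_no_show_rate < 1:
        raise ValueError("no-show rate must be in [0, 1)")
    needed = target_sessions / (1 - expected_no_show_rate)
    # round() guards against tiny floating-point overshoots before rounding up.
    return math.ceil(round(needed, 9))


print(participants_to_recruit(5, 0.20))  # 7
print(participants_to_recruit(15, 0.10))  # 17
```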

5. Always Have a Quick Post-Session Questionnaire

A post-test interview is a potential gold mine of deeper insights into user behavior. Users are usually more relaxed after the test, so they can give meaningful feedback about the difficulties they had with the tasks, what delighted them about the product, and their overall experience. It is also an opportunity to ask follow-up questions you may have missed during the tasks.

Pro tip: Include the post-test interview when you calculate the total session time. For example, if you plan each session to be 10-15 minutes long, reserve 2-3 minutes of it for post-test questions.

6. Make the Participants Feel Comfortable

If your participants are nervous or stressed out, they won't be able to perform the tasks in the best way, which means skewed test results. So, try to make the participants feel relaxed before they start the test.

One way is to have a small introduction round during the pre-test session. Instead of strictly adhering to the question sheet, ask a few general friendly questions as the moderator to establish a relationship with the user. From there, you can smoothly transition into the testing phase without putting too much pressure on them.

7. Mitigate the Observer Effect

The “observer effect,” or “Hawthorne effect,” is when people in studies change their behavior because they are being watched. In moderated usability testing, participants may get nervous or shy away from criticizing the product. They may hold back their actual feedback or the questions that come to mind. All of this can lead to a failed test or unreliable results.

So, make sure that the moderator does not influence the participants. A simple trick is to pretend that you are writing something instead of constantly watching over the participants.

The observer effect is one more reason to have a friendly pre-test conversation and tell the participants to ask questions when they don't understand something and share their feedback openly. Discuss the test's purpose so they understand their feedback is valuable to make the product better.

Mistakes To Avoid in Usability Testing

The overarching purpose of this usability testing guide was to help answer this essential question: how do I create the best product that satisfies customers (and as a bonus, outshines the competition)? We hope it threw a bright light on the possible ways to answer the question. Plus, here are a few pitfalls that are best avoided as you search for the answers:

1. Creating Incorrect or Convoluted Scenarios

The success of a usability test depends on the tasks and scenarios you give participants. If the scenarios are hard to understand, participants may get confused. The task success rate will drop, not because of usability problems but because of the questions themselves. The problem is compounded in unmoderated usability testing, where a participant who gets stuck cannot ask a moderator for help.

That's why it is essential to keep your sentences concise and clear so that the tester can follow the instructions easily. A pilot test is an excellent way to test the quality of the questions and make changes.

2. Asking Leading Questions

Leading questions are those that carry a response bias in them. These questions can unintentionally steer the participants in a specific direction. It can point towards a step that you may want the participants to take or an element you want them to select.

Leading questions nullify the usability test as they help the participants to reach the answer. So, test your scenarios and questions during the pilot run to weed out such questions (if any).

3. Not Assigning Proper Goals and Metrics

It is essential to set the goals clearly to deliver a successful usability test. Whether the goals are qualitative or quantitative, assign suitable metrics to measure them properly.

For example, if the task aims to test the usability of your navigation menu, you may want to see whether the users can find the information or not. But to quantify this assessment, you can also calculate the success rate and time taken by participants to complete their tasks.

While the qualitative analysis will reveal points about user experience, the quantitative data will help calculate the reliability of your findings. In this way, you can approach the test results objectively.

4. Testing With Incorrect Audience

One of the biggest mistakes in usability testing is using an incorrect audience sample, which leads to inaccurate results.

For example, if you use friends or coworkers who already know about the product/software, they may not face the same problems that actual first-time users experience.

In the same way, if they are entirely unaware of the product fundamentals, they might get stuck at points which your actual audience may easily navigate.

To recruit the right audience, start by focusing on the question - who will be the actual users of the test elements? Are they new users, verified customers, or any other user segment?

Once you have your answers, you can set the proper goals for the test.

5. Interrupting or Interacting With the Participants

Another grave mistake is repeatedly interrupting the participants. A usability test aims to observe users trying the product/software without any outside influence. The moderator can ask questions and guide participants only when necessary.

Constantly interrupting participants may make them nervous or frustrated, disrupting the testing environment and skewing the results.

6. Not Running a Pilot Test

As mentioned before, a pilot test is a must in usability testing. It helps weed out the issues with your test conditions, scenarios, equipment, test prototype, and other elements.

With pilot testing, you can sort out these problems before you start the test.

7. Guiding Users During the Test

The purpose of usability testing is to simulate actual user behavior to measure how easy it would be for them to use the product. If you guide the users through the scenario, you are compromising the test results.

The moderator can help participants understand the scenario but should not help them complete the task or point them towards the solution.

8. Forming Premature Conclusions

Another mistake to avoid is drawing conclusions from the results of the first two or three users. Observe all the participants before drawing any conclusions.

Also, do not rush the testing process. After a few participants, you may feel you already have all the information, but test every participant to establish the reliability of your results. The remaining sessions may point you towards new issues and problems.


9. Running Only One Testing Phase

Experimentation and testing are an iterative process. Plus, since the sample size in usability testing is usually small, it is a big mistake to treat the results of a single test phase as definitive.

The same applies to the solutions you implement for the issues found in the test. A single problem can have many solutions, so how will you know which one works best?

The only way to find out is to run successive tests after implementing the solution to optimize product usability. Without iterations, you cannot tell if the new solution is better or worse than the previous one.
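A confidence interval around each completion rate shows how much uncertainty a small round leaves. The sketch below uses the adjusted-Wald (Agresti-Coull) interval, a common choice for small-sample completion rates. It adds z²/2 successes and z² attempts before applying the usual formula. The 3-of-5 and 5-of-5 results are hypothetical.

```python
import math


def adjusted_wald_interval(successes, attempts, z=1.96):
    """Approximate 95% confidence interval for a task completion rate.

    Adds z**2 / 2 successes and z**2 attempts before applying the plain Wald
    formula, which behaves much better than the plain version at small n.
    """
    n_adj = attempts + z ** 2
    p_adj = (successes + z ** 2 / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


current = adjusted_wald_interval(3, 5)  # current design: 3 of 5 completed
redesign = adjusted_wald_interval(5, 5)  # redesign: 5 of 5 completed
print(f"current:  {current[0]:.0%} to {current[1]:.0%}")
print(f"redesign: {redesign[0]:.0%} to {redesign[1]:.0%}")
print("intervals overlap:", current[1] > redesign[0])
```

Here the redesign looks better (100% vs. 60%), but the intervals are wide and overlap heavily. Overlap is only a rough signal, not a formal test. It does suggest that one round of five users cannot settle the question, and another iteration (or a proper two-sample comparison) is needed before declaring a winner.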

Conclusion

It is true what they say: experience is the best teacher. As you do more tests, you will gain a better understanding of what usability testing actually is about - creating a perfect product.

It stands to reason that the easier it will be for your prospective customers to use your product, the more sales you will see. Usability testing helps you eliminate any unforeseen glitches and improve user experience by collecting pertinent user feedback for actionable insights. To get the best insights, Qualaroo makes usability testing a delightful experience for your testers.
