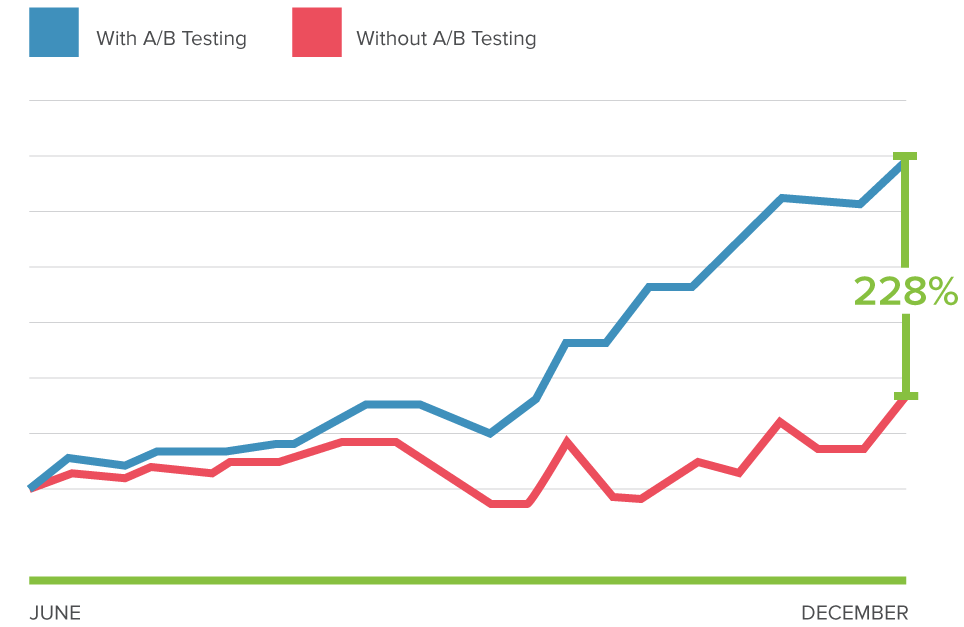

We live in an era where data is everything, and entrepreneurs rely heavily on data-driven decision-making.

When it comes to analyzing and improving your website’s performance, one of the most powerful ways to collect data is A/B testing. Yet many people avoid it, either because they fear the cost and resources involved or because they are not sure how to go about it. This guide addresses those concerns by compiling all the information you need in a practical format, so that you can get started with A/B testing right away!

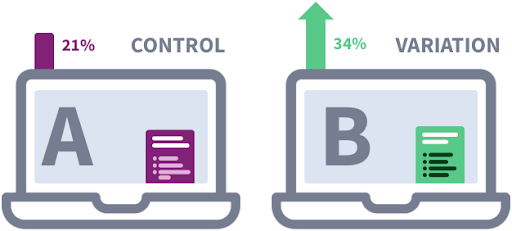

A/B testing is the process of comparing the original web page design, called the ‘control,’ with an altered version, called the ‘variation,’ to see which design yields better results. The variation is created by changing the structure, position, function, or content of a page element, and it is then tested against the ‘control.’
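
To decide whether the variation really beat the control, rather than winning by chance, the two conversion rates need to be compared statistically. This guide doesn't prescribe a method, so the sketch below assumes a standard pooled two-proportion z-test; the function names and the visitor and conversion counts are hypothetical.

```python
import math


def conversion_rate(conversions: int, visitors: int) -> float:
    """Fraction of visitors who completed the goal (e.g. a signup or purchase)."""
    return conversions / visitors


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare control (a) with variation (b); return (z, two-sided p-value).

    Assumption: a pooled two-proportion z-test. Its normal approximation is
    only reasonable when both groups have plenty of visitors and conversions.
    """
    p_a = conversion_rate(conv_a, n_a)
    p_b = conversion_rate(conv_b, n_b)
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # For a standard normal Z, P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: control 200/5,000 conversions, variation 250/5,000.
z, p = two_proportion_z_test(200, 5000, 250, 5000)
print(f"control {conversion_rate(200, 5000):.1%}, variation {conversion_rate(250, 5000):.1%}")
print(f"z = {z:.2f}, p = {p:.3f}")
```

Here a 4% control is compared with a 5% variation, and the p-value comes out well below the common 0.05 threshold, which suggests the lift is unlikely to be noise. With much smaller samples, the same 1-point difference would often not be significant.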

A/B testing is often used in marketing to determine which message or offer is most effective at improving response rates. On the web, it is used in website optimization to determine which variation of a page element improves conversion rates the most.

Online companies commonly use A/B testing to improve the performance of their websites and marketing campaigns because tests are relatively easy to create and run by updating the website’s code or design.

However, A/B testing isn’t just for marketing: product teams can A/B test different product variations, customer service teams can A/B test different responses, and so on.

Apart from A/B testing, there are three other experimentation techniques: multivariate testing, split URL testing, and multi-page testing. Let's quickly go through each of them and understand how they differ.

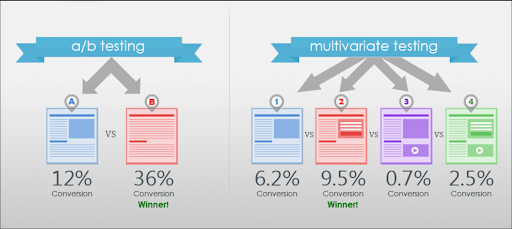

1. Multivariate Testing

Multivariate testing makes it possible to test multiple elements and their combinations on a web page simultaneously. It is like running several A/B tests on the same webpage at once.

With A/B testing, you can only test one element at a time. If you test more than one element, you cannot study each element's impact on the results.

That is where multivariate testing comes in handy. You can test several elements in one test, and it also tells you how each element and each combination affects the outcome.

However, it requires more time and effort than A/B testing. Every element you add multiplies the number of possible combinations, so each combination receives a smaller share of the traffic. As a result, you need to run the test longer for each combination to reach the desired sample size.
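
To see why test duration grows so quickly, count the combinations. The sketch below assumes a full-factorial design (every combination is served) and an even traffic split; the elements, daily traffic, and per-version sample target are hypothetical placeholders, and a real sample target would come from a proper sample-size calculation.

```python
from itertools import product
from math import ceil

# Hypothetical test: three page elements, each with the versions to try.
ELEMENTS = {
    "headline": ["original", "benefit-led"],
    "hero_image": ["original", "product shot", "lifestyle photo"],
    "cta_text": ["original", "Start free trial"],
}


def all_combinations(elements: dict[str, list[str]]) -> list[dict[str, str]]:
    """Every page version a full-factorial multivariate test would serve."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


def days_needed(daily_visitors: int, n_versions: int, visitors_per_version: int) -> int:
    """Days until each version reaches the target sample, assuming an even split."""
    daily_per_version = daily_visitors / n_versions
    return ceil(visitors_per_version / daily_per_version)


combos = all_combinations(ELEMENTS)
print(len(combos), "combinations")  # 2 x 3 x 2 = 12
# Assumed: 6,000 visitors per day and a target of 5,000 visitors per version.
print("multivariate test:", days_needed(6000, len(combos), 5000), "days")
print("A/B test (2 versions):", days_needed(6000, 2, 5000), "days")
```

With these made-up numbers, the multivariate test needs 10 days while a simple two-version A/B test needs 2, because each of the 12 combinations only gets one twelfth of the traffic.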

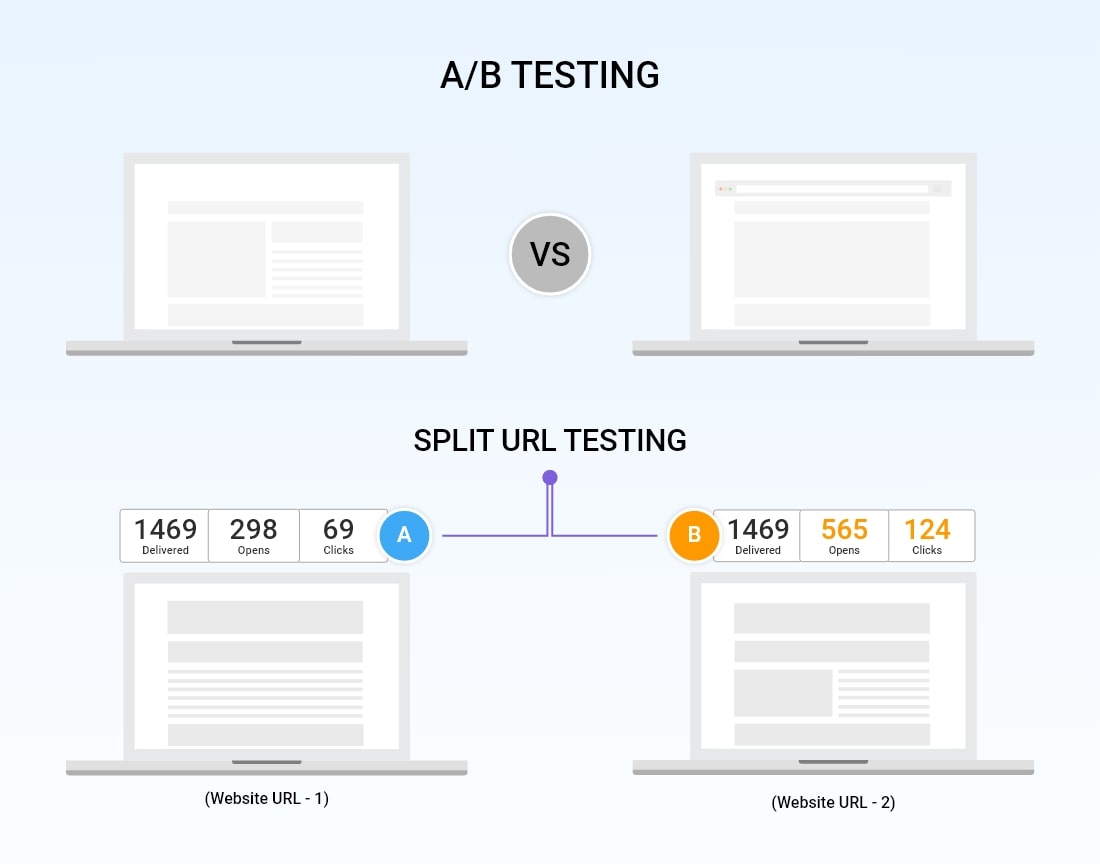

2. Split URL Testing or Redirection Testing

In split URL testing, the control and each variation have their own URL. Traffic is split between the URLs to measure each page's conversion rate, hence the name split URL test.

It is slightly different from A/B testing, where the control and variation are tested on the same URL.

It is important to note that in a split URL test, the designs of the control and the variation can differ dramatically, as long as the test goal (for example, the conversion rate) stays the same. The result only tells you which overall design performs better, not which individual change made the difference. In A/B testing, by contrast, only minor changes are typically made to a single page element.

Split URL testing is used to test page-level changes, such as an entirely new page design.
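
The traffic split behind a split URL test can be sketched as a weighted, deterministic assignment of each visitor to one URL. This is a minimal illustration, not how any particular testing tool works: the URLs, experiment name, and `assign_url` helper are hypothetical, and it assumes each visitor carries a stable ID (for example, from a cookie).

```python
import hashlib

# Hypothetical URLs for the control page and a fully redesigned variation.
VARIANT_URLS = {
    "control": "https://example.com/pricing",
    "variation": "https://example.com/pricing-redesign",
}


def assign_url(visitor_id: str, experiment: str, weights: dict[str, float]) -> str:
    """Map a visitor to one URL in proportion to `weights` (which should sum to 1).

    Hashing experiment + visitor ID gives each visitor a stable point in [0, 1),
    so a returning visitor is always sent to the same URL.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).hexdigest()
    point = int(digest[:8], 16) / 2**32  # first 32 bits of the hash, scaled to [0, 1)
    cumulative = 0.0
    for name, weight in weights.items():
        cumulative += weight
        if point < cumulative:
            return VARIANT_URLS[name]
    # Only reached if the weights sum to slightly less than 1 due to rounding.
    return VARIANT_URLS[list(weights)[-1]]


# A 50/50 split; in practice the server would answer with a redirect to this URL.
print(assign_url("visitor-123", "pricing-page-test", {"control": 0.5, "variation": 0.5}))
```

Hashing instead of calling a random number generator on every request keeps the assignment sticky without storing it, and the weights make it easy to send, say, only 10% of traffic to a risky redesign.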

3. Multi-page Testing

As the name suggests, multi-page testing allows you to test multiple pages simultaneously. You can compare the variation and control design on each page in a single test. It is similar to running an A/B test on several pages at the same time.

Suppose you want to test a new navigation bar design on your website. With a multi-page test, you can add the variation design to all the desired web pages and test them against the control. Each site visitor is directed to either the variation group or the control group and stays in that group on every page, which ensures a consistent experience throughout the website.
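
The consistency described above comes from assigning the group per visitor, not per page. The sketch below assumes a stable visitor ID and uses hypothetical page paths, experiment name, and helper functions; the key detail is that the page path is left out of the hash.

```python
import hashlib

EXPERIMENT = "navbar-redesign"  # hypothetical experiment name
PAGES_IN_TEST = ["/", "/products", "/pricing", "/checkout"]  # hypothetical paths


def bucket(visitor_id: str, experiment: str = EXPERIMENT) -> str:
    """Assign a visitor to 'control' or 'variation' with a 50/50 split.

    The page path is deliberately not part of the hash input; including it
    could put the same visitor in different groups on different pages.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).digest()
    return "variation" if digest[0] % 2 else "control"


def navbar_design(visitor_id: str, page_path: str) -> str:
    """Navigation-bar design to render for this visitor on `page_path`."""
    if page_path not in PAGES_IN_TEST:
        raise ValueError(f"{page_path!r} is not part of this experiment")
    return bucket(visitor_id)


# The same visitor gets the same design on every page in the test.
print({page: navbar_design("visitor-123", page) for page in PAGES_IN_TEST})
```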

You can also use multi-page testing to compare entire sequences of pages. For example, you can create a new set of designs for your sales funnel, such as the product page, checkout page, and order confirmation page, and test this set as a group against the control set. Since each visitor sees only one of the two versions, you can easily compare how the two designs fare.
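
One way to compare two funnel designs is to look at both the step-by-step rates and the end-to-end conversion rate for each group. This is a minimal sketch: the step names, the visitor counts, and the two metrics are illustrative assumptions rather than a method the guide specifies.

```python
# Hypothetical visitor counts reaching each step of the funnel, per group.
FUNNEL = ["product_page", "checkout_page", "order_confirmation"]
CONTROL = {"product_page": 4000, "checkout_page": 800, "order_confirmation": 320}
VARIATION = {"product_page": 4000, "checkout_page": 1000, "order_confirmation": 360}


def step_rates(counts: dict[str, int]) -> dict[str, float]:
    """Share of visitors at each step who continued to the next one."""
    return {
        f"{prev} -> {nxt}": counts[nxt] / counts[prev]
        for prev, nxt in zip(FUNNEL, FUNNEL[1:])
    }


def end_to_end_rate(counts: dict[str, int]) -> float:
    """Share of visitors entering the funnel who completed an order."""
    return counts[FUNNEL[-1]] / counts[FUNNEL[0]]


for name, counts in [("control", CONTROL), ("variation", VARIATION)]:
    rates = {step: f"{rate:.0%}" for step, rate in step_rates(counts).items()}
    print(name, rates, f"end-to-end {end_to_end_rate(counts):.1%}")
```

In these made-up numbers, the variation converts better overall (9% vs. 8%) even though a smaller share of its visitors moves from checkout to order confirmation (36% vs. 40%). Judging each page in isolation could lead you to the wrong conclusion, which is why testing the funnel as a group is useful.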
