The harsh reality of A/B testing is that most tests either fail to improve on the original design or lose to it outright. Finding a winning variation is tough, which makes running test after test challenging, and it’s easy to get demoralized. While failed tests are part of any A/B testing process, there are a few ways to improve your odds of running successful tests.

1.) Use Qualitative and Quantitative Data to Inform Your A/B Tests

Don’t base your tests on what you think will improve the performance of your website. Instead, look at your analytics data and combine it with qualitative insights from other sources, such as surveys and session recordings, to find the real issues getting in the way of conversions.

For example, you might think that your conversion rate will improve by changing the color of the CTA button, when in reality, your website visitors might be concerned by the lack of a visible return policy.

Wasting time on tests that are just guesses is a sure way to have more losing tests than winners. Instead, analyze user behavior and feedback to determine what to test.

2.) Establish Statistical Significance

Aim for a high confidence level (95% is a common choice). Give your test time to yield results, and don’t stop it too early: you need to run it long enough to collect a sample large enough to produce conclusive results.

The larger the sample size for each variation, the more reliably the test can detect a real difference between variations. Reaching the desired confidence level may take a few hours or a few weeks, depending on how much traffic your website receives and how large a difference you are trying to detect.
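To estimate how long a test needs to run before you start it, you can use the standard normal-approximation sample-size formula for comparing two conversion rates. The sketch below is illustrative only: the 5% baseline rate, the 6% target rate, 80% power, and 1,000 daily visitors are hypothetical inputs, and the function names are made up for this example. Dedicated A/B testing tools may use different statistical methods.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate: float, target_rate: float,
                              alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed in EACH variation for a two-sided
    two-proportion z-test to detect a change from baseline_rate to target_rate.

    alpha = 0.05 corresponds to a 95% confidence level."""
    if not (0 < baseline_rate < 1 and 0 < target_rate < 1):
        raise ValueError("rates must be between 0 and 1")
    if baseline_rate == target_rate:
        raise ValueError("rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for 95% confidence
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = baseline_rate * (1 - baseline_rate) + target_rate * (1 - target_rate)
    effect = target_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def days_needed(n_per_variation: int, num_variations: int, daily_visitors: int) -> int:
    """Days to reach the sample size if traffic is split evenly across variations."""
    return math.ceil(n_per_variation * num_variations / daily_visitors)


if __name__ == "__main__":
    # Hypothetical inputs: 5% baseline conversion, hoping to detect a lift to 6%.
    n = sample_size_per_variation(0.05, 0.06)
    print(n, "visitors per variation")
    print(days_needed(n, num_variations=2, daily_visitors=1_000), "days at 1,000 visitors/day")
```

With these assumed inputs the estimate comes to a little over 8,000 visitors per variation, so a site with 1,000 visitors a day would need a bit more than two weeks. Smaller expected differences require much larger samples, which is why low-traffic sites need longer tests.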

3.) Test Different Variations of the Same Element Simultaneously

If you intend to test more than one variation against the ‘control’ design, test them simultaneously. Doing so saves time and produces more reliable results, because all variations are exposed to the same testing conditions.

For example:

Let’s say you are testing two different CTA headlines. If you run the A/B test on one variation this month and on the other next month, it will take you two months to find the winning variation.

Moreover, how would you know that the results were not affected by factors present while one variation was being tested but absent while the other was? These could include a running sale, an upcoming festive season, or a sudden surge in traffic.

Therefore, it is necessary to test the variations simultaneously.
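One common way to run several variations at the same time is to split incoming visitors across them with a deterministic hash, so each visitor always sees the same variation while all variations share the same time window. The sketch below assumes each visitor has a stable ID (for example, from a cookie); the experiment name, variation labels, and `assign_variation` function are hypothetical, and testing tools typically handle this assignment for you.

```python
import hashlib
from collections import Counter
from typing import Sequence


def assign_variation(user_id: str, experiment: str, variations: Sequence[str]) -> str:
    """Deterministically map a visitor to one variation.

    Hashing (experiment, user_id) means a returning visitor always gets the
    same variation, while different visitors are spread roughly evenly
    across all variations during the same period."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    # Use the first 8 bytes of the SHA-256 digest as a large integer bucket.
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return variations[bucket % len(variations)]


if __name__ == "__main__":
    arms = ["control", "headline_a", "headline_b"]  # hypothetical labels
    counts = Counter(
        assign_variation(f"visitor-{i}", "cta-headline-test", arms) for i in range(30_000)
    )
    print(counts)  # each arm should receive roughly a third of the visitors
```

Because every variation receives traffic from the same days, a sale or a traffic spike affects all of them equally instead of skewing one.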

4.) Collect Feedback From Users During the Test

Another essential step while the A/B test is running is to collect user data and feedback that will inform the next iteration. While the A/B test will help you choose the winning variation, you need more information from visitors to understand the reasons behind their choices.

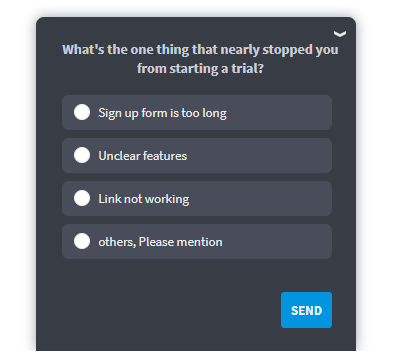

You can embed surveys on the variation pages to collect actionable insights. These insights will also help you plan the next step in optimizing the page.

For example, let’s say that changing the position of your CTA increased the number of clicks, but people still aren’t converting. You can use surveys during the test to find out why visitors who click the CTA don’t fill out the form, subscribe to the newsletter, etc., and use those answers to start the next round of optimization.


5.) Evaluate Potential A/B Tests by Impact, Confidence, and Ease

Not all A/B tests are created equal. If you run an A/B test on a page that gets only a small fraction of your website traffic, the overall impact on your business will be minimal no matter how well the test performs. Finding a winning variation on your high-value pages, however, can result in meaningful gains.

Evaluate each of your potential tests by three criteria: Impact, Confidence, and Ease.

- Impact: How likely is it that the test will have a meaningful impact on your business?

- Confidence: Based on the data, how confident is your team that this test will be effective?

- Ease: How easy is it to test this hypothesis? Is it fast, or will it take a lot of resources to execute?

When you rank all of your test ideas by these three criteria, you’ll be able to identify the ones likely to have the most significant impact, the highest likelihood of success, and the lowest cost to test. Start with the ideas that score highest, especially those that score well in all three areas.
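Ranking a backlog this way is easy to automate. The sketch below scores each idea from 1 to 10 on each criterion and averages the three scores (some teams multiply them instead). The test ideas and their scores are hypothetical, chosen to echo the examples earlier in this article.

```python
from dataclasses import dataclass


@dataclass
class TestIdea:
    name: str
    impact: int      # 1-10: expected effect on business metrics
    confidence: int  # 1-10: how strongly the data supports the hypothesis
    ease: int        # 1-10: how cheap and fast the test is to build and run

    def __post_init__(self) -> None:
        for score in (self.impact, self.confidence, self.ease):
            if not 1 <= score <= 10:
                raise ValueError("scores must be between 1 and 10")

    @property
    def ice_score(self) -> float:
        # Simple average of the three criteria.
        return (self.impact + self.confidence + self.ease) / 3


def rank_ideas(ideas):
    """Return ideas sorted from highest to lowest ICE score."""
    return sorted(ideas, key=lambda idea: idea.ice_score, reverse=True)


if __name__ == "__main__":
    backlog = [  # hypothetical ideas and scores
        TestIdea("Show return policy near CTA", impact=8, confidence=7, ease=8),
        TestIdea("Change CTA button color", impact=3, confidence=2, ease=10),
        TestIdea("Redesign checkout flow", impact=9, confidence=6, ease=2),
    ]
    for idea in rank_ideas(backlog):
        print(f"{idea.ice_score:.1f}  {idea.name}")
```

Here the return-policy idea ranks first because it scores well on all three criteria, while the easy but low-impact button-color change ranks last.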

6.) Learn From the Test Results

A/B testing is an iterative process. You create a hypothesis, run a test, collect the data, analyze it to find out what worked and what didn’t, and use it to run another test. There is always scope for further optimization.

Once you find the winning variation, consider going a step further and testing another element on the same page to see whether it produces a meaningful impact.

For example:

Once you find the CTA button’s winning label, you can test the button’s position on the page to measure any further impact on click-through rate (CTR).

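If your test results are not statistically significant, the test did not detect a meaningful difference: the proposed variation may have had no real effect, or the effect may have been too small to detect with your sample size. Either way, you can use the data you collected to form a new hypothesis and run another test.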
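To check whether a difference in conversion rates is statistically significant, a common approach is a two-proportion z-test. The sketch below is a minimal version using a pooled standard error; the visitor and conversion counts are hypothetical, and your testing tool may use a different method (for example, a Bayesian or sequential approach).

```python
import math
from statistics import NormalDist
from typing import Tuple


def two_proportion_z_test(conv_a: int, visitors_a: int,
                          conv_b: int, visitors_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) comparing the conversion rates of A and B."""
    p_a = conv_a / visitors_a
    p_b = conv_b / visitors_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if se == 0:
        raise ValueError("cannot test: no variation in outcomes")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical results: control 400/8,000 (5.0%), variation 460/8,000 (5.75%).
    z, p = two_proportion_z_test(400, 8_000, 460, 8_000)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"z = {z:.2f}, p = {p:.3f} ({verdict} at 95% confidence)")
```

A p-value above your threshold (0.05 for 95% confidence) means the test could not distinguish the variations; it does not prove they perform identically.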

Make every test count. Use the results to learn from previous mistakes or explore new opportunities to improve conversion rates.
